The Lindahl Letter

Weekly insights at the intersection of technology, artificial intelligence, and modernity—exploring how innovation shapes our world every Friday.

Dr. Nels Lindahl

Show overview

The Lindahl Letter has been publishing since 2022, and across the 4 years since has built a catalogue of 145 episodes. That works out to roughly 15 hours of audio in total. Releases follow a fortnightly cadence.

Episodes typically run under ten minutes (most land between 4 min and 6 min), though length varies meaningfully from one episode to the next. None of the episodes are flagged explicit by the publisher. It is catalogued as an EN-language Technology show.

There hasn’t been a new episode in the last ninety days; the most recent episode landed 4 months ago. The busiest year was 2022, with 47 episodes published. Published by Dr. Nels Lindahl.

From the publisher

Thoughts about technology (AI/ML) in newsletter form every Friday www.nelsx.com

Latest Episodes


Welcome to 2026 and beyond

Thank you for tuning in to week 220 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Welcome to 2026 and beyond.”

This last week has been about being a reflective practitioner and thinking about where we have been throughout the last year of the Lindahl Letter. This last year we covered research notes numbered from week 175 to 219. Back in June I did acknowledge a 56 day posting break in 2025, which is interesting to look back on now as an opportunity to reflect and build something substantial going forward. Toward the end of the year we got back into the groove of quality weekly missives, which is good and something to continue. My focus on quantum, robotics, and AI seems to hold true to my roots of being generally interested in technology.

Overall, my general interest in technology is what drives my interest in lifelong continuous learning. With that context being set it is probably easy enough to set the expectation that in 2026 and beyond the Lindahl Letter will be targeted toward the production of weekly research notes that are accessible, targeted, and focused. These missives will require less than 10 minutes of a reader’s time and should be a clear value add in terms of gaining knowledge, understanding, and context for complex technical content.

Let’s establish the theoretical home base of this writing enterprise for 2026, which will be set on the foundation of digging into the edge of realized technology. That topic might sound familiar from week 212 of the Lindahl Letter. During that writing project we took a look at what technology is likely to be realized in the next 30 years. That coverage included looking at the metaverse, robotics, climate tech, space economy, biotech, synthetic biology, neurotech, and even fusion. I do believe that we will see quantum, robotics, and some AI mixed into that soup of potentially realized technology.

All of that technology will see advancement and it will certainly be moving toward the edge of becoming realized technology. That is fundamentally where it goes from being exploratory and research driven to being in production out in the wild, where it will eventually become commoditized unless a clear winner breaks away and can hold onto a real advantage. I’m pretty skeptical about any of these technologies having a clear moat that allows that advantage. For the most part, once a group of people know how to do these things the technology will be realized and break out into wider use.

My primary weekly writing focus will be the Lindahl Letter, and this is the place you will be able to find out which topics grab my attention and which I consider to be worth sharing. My focus in the last 90 days has been heavily on quantum computing, which is understandable due to how close it is getting to being a realized technology. We are on the edge of people figuring out how to demonstrate quantum supremacy for use cases and building these things into data centers as a clear value add for corporate customers and research labs that can afford to be a part of the journey. Outside of that, most of the major quantum computers that will be part of the early wave demonstrating the technology will be tied to either a research lab or corporate R&D group.

Those early systems are starting to really scale up focus on specific advances in the quantum space. My research project in that space helped me to focus on open-access nanofabs, national laboratories, commercial foundry services, and captive industrial fab sites. Each of those groups has different advantages and research interests. We will see where the ultimate breakthroughs end up coming from as the story unfolds toward realized quantum technology.

That is where we are heading throughout 2026. Thank you for being here for the journey and I look forward to learning more about technology and digging into the frontier of what will be realized this year. Overall the state of the Lindahl Letter is strong and we should be able to continue moving forward on our weekly journey of exploration into technology.

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead! This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit www.nelsx.com

2025 End of Year Recap

Thank you for tuning in to week 219 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “2025 End of Year Recap.”

Thank you for being here! The Lindahl Letter this week started out as a Merry Christmas and Happy Holidays post and ended up just being an end of year recap. As the year comes to a close, I am taking a brief pause from publishing this week to spend time with family, recharge, and reflect on the remarkable conversations and ideas we have explored together throughout the year. If you are reading this one, then you certainly learned about AI/ML/AGI, robotics, and quantum computing this year. The Lindahl Letter will return to its regular schedule next year, and I am grateful for your continued readership, curiosity, and engagement. I wish you and yours a happy holiday season and a thoughtful, restorative start to the new year.

My top 5 posts of 2025 included:

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead! This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit www.nelsx.com

Nested learning and the illusion of depth

Thank you for tuning in to week 218 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Nested learning and the illusion of depth.”

Just for fun with this nested learning paper we are evaluating today, I downloaded the 52 page PDF and uploaded it to my Google Drive to have Gemini create an audio overview of the paper. That is just a one button request these days. We have reached a point where we can easily listen to a paper recap with very little friction. It’s actually harder to get a complete reading of the PDF as an audio file. I had tried the Adobe Acrobat read aloud feature and I don’t really like the robotic output. Sometimes, I would rather listen to a paper than read it when I am trying to really think deeply about something. The 5 minutes of podcast audio Gemini spit out about the paper are embedded below. It’s interesting to say the least how quickly Gemini turned that paper into a short podcast. It’s entirely possible that my analysis might be less entertaining than the podcast Gemini created on the fly. You will be the judge of that one. This is a paper I actually printed out 2 pages per page using the double sided setting. That is how I used to read papers during graduate school. This paper had a few color elements, which is something my graduate school papers never really had. They were all monochromatic. I had to put on my reading glasses and hold the paper a little closer than I used to with the 2 pages per page printing. I’ll have to remember to just print using single page spacing next time around. I really only print out papers I want to keep in my stack of stuff. This one certainly fits that criterion.

Trying to make content that is accessible is one of the reasons that I have been recording audio for the Lindahl Letter. Sometimes listening to something is a great unlock. Other times, due to complexity and the diagrams included, you just have to read academic papers. I try to bring things forward without complex charts in a highly consumable way. My take on research notes is that they need to be generally understandable and communicate a clear take on whatever topic is being covered. The content has to be condensed into something that can be considered in 5-10 minutes. To that end I’m going to do my best to bring this paper on nested learning to life today.

This paper matters, it really does, because the research presented undermines one of the core assumptions driving modern AI investment and the endless LLM building and training that has been occurring, namely that stacking more layers reliably produces qualitatively better intelligence [1]. Maybe the mantra to just keep scaling will fade away. If many so-called deep models collapse into shallow equivalents during training, then reported gains attributed to architectural depth may instead be artifacts of data scale, regularization, or optimization heuristics rather than true representational progress.

This has direct implications for benchmarking, since comparisons that reward parameter count or depth risk overstating advances that do not translate into more robust reasoning or generalization. It also affects hardware and infrastructure strategy, because enormous resources are being allocated to support depth that may not deliver proportional returns. At a deeper level, the result forces a reconsideration of what meaningful learning progress actually looks like, shifting attention from surface complexity toward mechanisms that introduce genuinely new inductive structure and adaptive behavior.

The long term impact of this callout is likely to be gradual rather than abrupt, but it meaningfully shifts the intellectual ground beneath current AI narratives [1]. The paper in question provides a formal vocabulary for a concern many researchers have held intuitively, that architectural depth has become a proxy metric for progress rather than a principled design choice. Over time, this reframing may influence how serious research groups evaluate models, placing more weight on identifiably distinct learning mechanisms, training dynamics, and robustness properties instead of raw scale.

It is unlikely to immediately change the minds of investors or vendors whose incentives favor larger systems, but it can shape academic norms, reviewer expectations, and eventually benchmark construction. Historically, results like this matter most not because they halt a paradigm, but because they constrain it, narrowing the space of credible claims and forcing future advances to justify themselves on grounds other than appearance and size.

This argument intersects directly with my broader concerns about interpretability and generalization. I am still curious about creating a combiner model, but this might change the mechanics of how that might ultimately work. If performance gains arise primarily from optimization dynamics rather than architectural expressivity, then claims about le

The great 2025 LLM vibe shift

Thank you for tuning in to week 217 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “The great 2025 LLM vibe shift.”

Vibe shifts came and went. People are certainly adding the word vibe to all sorts of things as the initial meaning has ironically faded. Casey Newton, in the industry-standard-setting Platformer newsletter, wrote about a big Silicon Valley vibe shift in 2022 [1]. It was a big thing; until it wasn’t. The really big, completely surreal LLM shift has happened toward the tail end of 2025. We went from extreme AI bubble talk to very clear, rational, and thoughtful perspectives on how LLMs won’t realize the promises that have been made. Keep in mind the market fears of an AI bubble are different from the understanding that LLMs might be the technology that ultimately wins. All of the spending in the marketplace and the academic argument may get reconciled at some point, but we have not seen that happen in 2025.

The backward linkages of how potential technological progress regressed may not have been felt just yet, but the overall sentiment has shifted. The ship has indeed sailed. Let that sink in for a moment and think about just how big a shift in sentiment that really happens to be and how it just sort of happened. As OpenAI and Anthropic move toward inevitable IPOs, that shift will certainly change things. Maybe the single best written explanation of this is from Benjamin Riley, who wrote a piece for The Verge called, “Large language mistake: Cutting-edge research shows language is not the same as intelligence. The entire AI bubble is built on ignoring it” [2]. I owe a hat tip to Nilay Patel for recommending and helping surface that piece of writing.

I was skeptical at first, but then realized it was a really interesting and well reasoned read. I’ll admit I was also reading a 52 page paper from the Google Research team, “Nested Learning: The Illusion of Deep Learning Architecture,” around the same time, which was interesting as a paired reading assignment [3]. More to come on that paper and what it means in a later post. I’m still digesting the deeper implications of that paper.

Maybe to really sell the shift you could take a moment and listen to some of the recent words from OpenAI cofounder Ilya Sutskever. I’m still a little shocked about the casual way Ilya described how we moved from research and the great AI winter, to the age of scaling, and finally back to the age of research again. The idea that scaling based on compute or size of corpus won’t win the LLM race is a very big shift and Ilya makes it pretty casually during this video.

You will notice I have set the video to play about 1882 seconds into the conversation:

Maybe a video with a really sharp looking classic Linux Red Hat fedora in the background featuring a conversation between Nilay Patel and IBM CEO Arvind Krishna can help explain things. Don’t panic when you realize that the CEO of IBM very clearly argues with some back of the envelope math that all the data center investment has no real way to pay off in practical terms or an actual return on investment. Try not to flinch when it is described that within 3-5 years the same data centers could be built at a fraction of the current cost. Technology does just keep getting better. The argument makes sense. It is no less shocking based on the billions being spent.

I set the video to start playing 502 seconds into the conversation.

The argument that I probably prefer in the long run is how quantum computing is going to change the entire scaling and compute landscape [4]. The long-term argument that may end up mattering the most suggests that quantum computing will transform the economics of scale and ultimately reset expectations about what is computationally feasible. Former Intel CEO Pat Gelsinger recently framed quantum as the force likely to deflate the AI bubble by altering the fundamental relationship between compute and capability, a claim that is gaining analytical support across the research community. We may see it be an effective counter to the billions being spent on data centers for a late mover willing to make a prominent investment in the space, or it could just end up being Alphabet, which is highly invested in both TPU and quantum chips [5].

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead!

Footnotes:
[1] Newton, C. (2022). The vibe shift in Silicon Valley. Platformer. https://www.platformer.news/the-vibe-shift-in-silicon-valley/
[2] Riley, B. (2025). Large language mistake: Cutting-edge research shows language is not the same as intelligence. The entire AI bubble is built on ignoring it. The Verge. https://ww

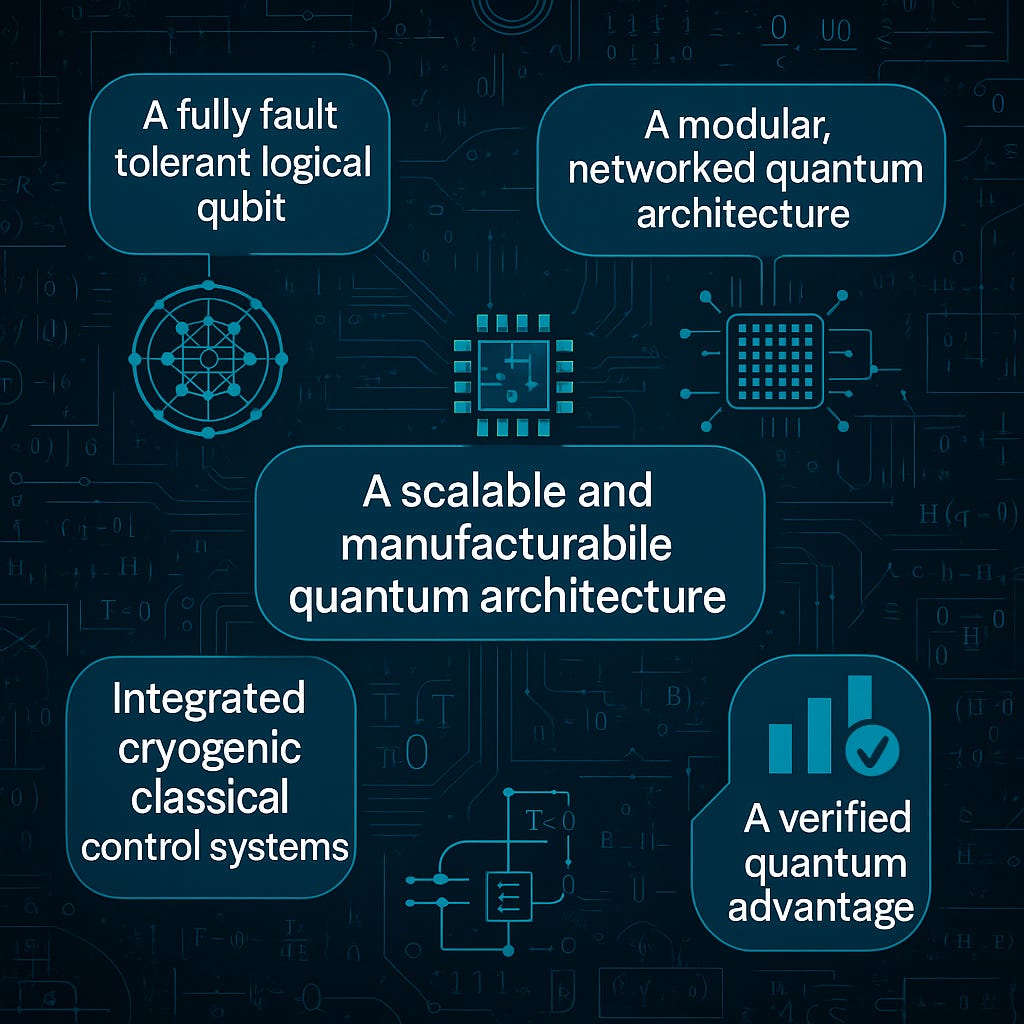

The 5 biggest unsolved problems in quantum computing

Thank you for tuning in to week 216 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “The biggest unsolved problems in quantum computing.”

The field of quantum computing has accelerated rapidly during the last decade, yet its most important breakthroughs remain incomplete. The core research challenges that stand between today’s prototypes and large scale, industrially relevant systems are now visible with unusual clarity. I think we are on the path to seeing this technology realized. These challenges are increasingly framed not as incremental milestones but as structural bottlenecks that shape the entire trajectory of the field. This week’s analysis focuses on the five most critical problems that must be solved for quantum computing to reach fault tolerant, economically meaningful operation. These gaps define where research investment, national strategy, and competitive advantage will be determined in the coming decade.

1. A fully fault tolerant logical qubit with logical error rates below threshold

The first and most fundamental problem is the absence of a fully fault tolerant logical qubit. I know, I know, people are getting close, but this technology is not fully realized just yet. Theoretical thresholds for fault tolerance are well studied, and progress has been reported through surface codes, low density parity check codes, and recent advances in magic state distillation. Several groups have demonstrated logical qubits whose performance exceeds their underlying physical qubits, and some trapped-ion experiments now show better than break-even behavior under repeated rounds of error correction. However, no team has yet realized a logical qubit that maintains below-threshold logical error rates in a fully integrated setting that combines encoding, stabilizer measurement, real time decoding, and continuous correction across arbitrarily deep circuits. Experiments such as the University of Osaka’s zero level magic state distillation results and Quantinuum’s recent logical circuit demonstrations illustrate meaningful progress, yet a complete fault tolerant logical qubit build rolling off the assembly line has not been achieved [1]. This missing element prevents reliable execution of deep circuits and stands as the central research challenge of the field. I am also tracking a leaderboard of efforts aimed at increasing the number and stability of logical qubits as new systems emerge [2].

2. A scalable and manufacturable quantum architecture that supports thousands of high fidelity qubits

The second unsolved problem is the absence of a scalable, manufacturable quantum architecture capable of supporting thousands of high fidelity qubits. Superconducting platforms continue to face wiring congestion, cross talk, and fabrication variability across large wafers, which limits reproducibility at scale. Trapped-ion systems achieve some of the highest gate fidelities reported, but their physical footprint, control volume, and relatively slow gate speeds constrain system growth. Neutral atom arrays offer large qubit counts, yet they have not demonstrated uniform, high fidelity two qubit gates across arrays large enough to support fault tolerant codes. Photonic and spin qubits continue to advance but remain earlier in their development for universal, gate based architectures. Across all platforms, the transition from laboratory systems to repeatable, wafer scale manufacturing has not occurred. Most resource estimates indicate that tens of thousands of physical qubits will be required for practically useful, error corrected applications, and no architecture is yet positioned to deliver this scale with consistent fidelity. I am tracking universal gate based physical qubit leaders closely, and I expect to see significant shifts in 2026 as fabrication strategies evolve [3].

3. Integrated cryogenic classical control systems capable of real time decoding at scale

The third unsolved problem concerns the integration of classical control systems capable of operating efficiently at cryogenic temperatures. Quantum processors rely on classical electronics to generate precise control pulses, read measurement outcomes, and perform real time decoding. As devices grow, these classical requirements become a dominant engineering bottleneck. Current systems depend on extensive room temperature hardware and thousands of coaxial lines, an approach that is not viable for scaling beyond a few hundred qubits. Research into cryogenic CMOS, multiplexed readout architectures, and fast low noise routing has shown meaningful progress, and prototype decoders have demonstrated sub microsecond performance. However, the field still lacks a fully integrated classical to quantum control stack that can operate near the device, support large scale decoding throughput, and eliminate the wiring overhead required for million channel systems. Solving this challenge is as essential a

Process capture and the future of knowledge management

Thank you for tuning in to week 215 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Process capture and the future of knowledge management.”

The history of knowledge management has been shaped by repeated attempts to store, retrieve, and reuse organizational insight. So much institutional knowledge gets lost and discarded as organizations change and people shift roles or exit. People within organizations learn through the everyday practice of getting things done. It’s only recently that systems are augmenting and sometimes automating those processes. Early systems focused on document repositories, and later platforms emphasized collaboration, tagging, and collective intelligence. We now find ourselves in a period where knowledge management converges with automated workflows and computational assistants that can observe, extract, and generalize decision patterns. We are seeing a major change in the ability to observe and capture processes. Systems are able to capture and catalog what is happening. This creates an interesting inflection point where the system may store the knowledge, but the users of that knowledge are dependent on the system. That does not mean the process is understood in terms of the big why question. Scholars have noted that the operational layer of organizational memory is often lost because it resides in informal practices rather than formal documentation. The shift toward embedded and automated capture offers a remedy to that problem.

The rise of agentic AI and workflow-integrated assistants alters the knowledge landscape by making it possible to synthesize procedural knowledge in real time. Instead of relying on teams to manually update wikis or define operating procedures, modern systems can extract key steps from repeated actions, identify dependencies, and flag anomalies that deviate from observed patterns. This transforms knowledge management from a static library into a dynamic computational environment. What exactly happens to this store of knowledge over time is something to consider going forward. Supervising the repository will require deep knowledge of the systems which are now being maintained systematically. Maintaining and refining it will be the difference between sustained institutional knowledge and temporary model advantages that drop with the next update. Recent studies on digital trace data argue that high fidelity observational streams can significantly improve the accuracy of organizational models. When this data flows into agents capable of modeling tasks, predicting outcomes, and recommending actions, the role of knowledge management shifts from storage to orchestration.

Process capture also introduces new opportunities for long-horizon learning systems. This is the part I’m really interested in understanding. The orchestration layer has to have some background learning and storage that runs periodically. When workflows are automatically translated into structured representations, organizations can run simulations, perform optimization, and enable higher levels of task autonomy. These capabilities begin to resemble continuous improvement environments that merge human judgment with machine-refined operational insight. Researchers have observed that structured process models can improve downstream automation and decision support, particularly in complex enterprise settings where procedures evolve rapidly. This suggests that the next phase of knowledge management will involve systems that not only store information but also refine it through computational analysis and real world feedback. It’s in that refinement that the magic might happen in terms of real knowledge management.

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead!

Links I’m sharing this week!

https://www.computerworld.com/article/4094557/the-world-is-split-between-ai-sloppers-and-stoppers.html

This video is a super interesting look at a number we don’t normally question on a daily basis. The delivery style is a bit bombastic, but the fact check on the argument is interesting. You know I enjoy numbers and was really curious how this was calculated. That video referenced this widely shared analysis from Michael W. Green on Substack.

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit www.nelsx.com

The great manufacturing reset

Thank you for tuning in to week 214 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “The great manufacturing reset.”

Boston Dynamics captured public imagination when they introduced Spot, the dog-like robot, back in 2016. Things have changed. Robots that walk around are beginning to enter the commercial landscape, and new entrants continue to appear. A humanoid robot product from Russia built by the company Idol surfaced last week [1]. Other companies such as Agility Robotics (USA), Figure AI (USA), Boston Dynamics (USA), UBTECH (China), and 1X Technologies (Norway/USA) are all working toward delivering humanoid robots. Optimus, the Tesla bot introduced conceptually in 2021 and now in its third-generation prototype, is also being talked about, although it remains part of an internal program and has not yet reached commercial deployment.

The stage is now set, and we are at a point where robotics, autonomous fabrication systems, and advanced materials are converging into a new industrial baseline. The last decade brought low-cost filament printers into hobbyist and commercial spaces at massive scale, and the next decade is poised to move far beyond that early wave. Industrial additive manufacturing has already expanded into metals, composites, and high-performance polymers, with global revenue expected to accelerate over the coming years. At the same time, the field is absorbing rapid advancements in AI-enabled calibration, defect detection, and real-time optimization, allowing machinery to tune production parameters autonomously. That capability shifts what it means to operate a modern fabrication workflow. Things are changing rapidly.

Alongside these developments, humanoid and semi-autonomous industrial robots are transitioning from research demonstrations to contract manufacturing deployments. Several builders are scaling up pilot programs in which general-purpose robots support assembly, materials handling, and repetitive manufacturing tasks. These systems benefit from advances in reinforcement learning, enhanced sensors, and cloud-based model updates. Industrial robotics shipments are increasing rapidly, driven by global demand for flexible production lines and labor-augmentation strategies. The supply side of robotics is not only expanding but also becoming modular and more interoperable across fabrication environments.

The most significant shift may come from the emergence of machines that build machines. That is a topic I’m focused on understanding. Historically, tooling design required long lead times, significant manual labor, and specialized expertise. Today, automated CAM pipelines, printable tooling, adaptive CNC systems, and robotically tended fabrication cells allow factories to generate and regenerate their own production processes. Some aerospace and automotive facilities already deploy these closed-loop systems to create fixtures, jigs, and replacement components internally. This form of self-manufacturing reduces dependency on external suppliers and removes friction from engineering iteration cycles. We are moving toward a world where design, testing, and tooling are all integrated within an AI-guided, robotics-driven feedback loop. That integration is the foundation of the great manufacturing reset.

For the United States, these technologies open a realistic path to reshoring custom and small-batch manufacturing in ways that were not economically viable during the offshoring wave of the late twentieth century. Rising labor costs in traditional manufacturing hubs, geopolitical risk, and supply chain disruptions have already encouraged firms to reconsider where they build things. Additive manufacturing and flexible robotics change the cost structure by reducing reliance on large minimum-order quantities, expensive hard tooling, and long logistics chains. A factory that can print tooling on demand, deploy modular robots, and run AI-optimized production scheduling can serve shorter runs and more specialized designs while remaining geographically close to end customers. In effect, the United States can replace scale-driven arbitrage with speed, customization, and resilience. That is why we are at the inflection point for the great manufacturing reset.

Policy and infrastructure are beginning to support this transition. Federal programs such as Manufacturing USA and its associated network of advanced manufacturing institutes are working to diffuse next-generation production technologies across domestic firms and regions [2]. Investments in semiconductor fabrication, battery plants, and clean-energy hardware have already catalyzed billions of dollars in new onshore manufacturing commitments. The same capabilities that support large facilities can extend to mid-market and smaller manufacturers through shared tooling libraries, regional robotics integrators, and standardized digital design pipelines. Universities and community

Why a “combiner model” might someday work

Thank you for tuning in to week 213 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Why a ‘combiner model’ might someday work.”

Open models abound. Every week, new open-weight large language models appear on Hugging Face, adding to a massive archive of fine-tuned variants and experimental checkpoints. Together, they form a kind of digital wasteland of stranded intelligence. These models aren’t all obsolete; they’re simply sidelined because the community lacks effective open source tools to combine their specialized insights efficiently. The concept of a “combiner model” offers one powerful path to reclaim this lost potential. Millions of hours of training, billions of dollars in compute, and so much electricity have been spent. Sure, you can use distillation to capture the outputs of one model in another, but a combiner model would be different because it overlays instead of extracts.

A combiner model represents a critical shift away from the assumption that AI progress requires ever-larger single systems. Instead of training another trillion-parameter monolith, we can learn to combine many smaller, specialized models into a coherent whole. The central challenge lies in making these models truly interoperable. The hard questions are how to merge or align their parameters, embeddings, or reasoning traces without degrading performance. The combiner model would act as a meta-learner, adapting, weighting, and reconciling information across independently trained systems, unlocking the latent knowledge already encoded in thousands of open weights. Somebody at some point is going to make an agent that works on this problem and grows stronger by essentially eating other models.

This vision can be realized through at least three technical routes. The first involves weight-space merging.
Techniques such as Model Soups, and toolkits such as Mergekit, show that when models share a common base, their weights can be effectively averaged or blended. More advanced methods go further: TIES-Merging trims small parameter changes and resolves sign conflicts before merging, and approaches such as AdaMerging learn adaptive coefficients that vary across layers, turning model blending into a trainable optimization process rather than a static recipe. In this view, the combiner model becomes a universal optimizer for reuse, synthesizing the gradients of many past experiments into a single, functioning network.

The second approach focuses on latent-space alignment. When models differ in architecture or tokenizer, their internal representations diverge. Even so, a smaller alignment bridge can learn to translate between their embedding spaces, creating a shared semantic layer, or semantic superposition. This allows, for example, a legal-domain model and a biomedical model to exchange information while their original knowledge weights remain frozen. The combiner learns the translation rules, effectively building a common interlingua for neural representations that connects thousands of isolated domain experts.

The third approach treats the combiner not as a merger but as a controller or orchestrator. In this design, the combiner dynamically decides which expert model to invoke, evaluates their outputs, and fuses the results through its own learned inference layer. This idea already appears in robust multi-agent frameworks. A true combiner model, or maybe combiner agent, would internalize this orchestration as a core part of its reasoning process. Instead of running one model at a time, it would simultaneously select and synthesize outputs from many experts, producing complex, context-aware intelligence assembled on demand. This approach is the most immediately viable and is already being used in sophisticated production systems today.

If such systems mature, the economics of AI will fundamentally change.
Rather than concentrating resources on a few massive, proprietary models, research will shift toward modular ecosystems built from reusable parts. Each fine-tuned checkpoint on Hugging Face will become a potential building block, not an obsolete artifact. The combiner would turn the open-weight landscape into an evolving lattice of knowledge, where specialization and reuse replace the endless cycle of frontier retraining. This vision is demanding, but the promise remains compelling: a world where intelligence is assembled, not hoarded; where the fragments of past experiments contribute directly to future understanding. The combiner model might not exist yet, but its underlying logic already dictates the future of open source AI.

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead!

Links I’m sharing this week!

This is the episode with Sam Altman that everybody was talking about. This is a public ep
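
The weight-space merging route described in this edition can be sketched in a few lines. This is a minimal illustration, not how Model Soups or Mergekit are implemented: the checkpoints are toy dictionaries of floats rather than real model weights, and the names `merge_checkpoints`, `legal`, and `biomed` are hypothetical. It assumes every checkpoint shares the same base architecture, which is the precondition the text describes.

```python
from typing import Dict, List

# A "checkpoint" here is a toy stand-in: parameter name -> flat list of floats.
Checkpoint = Dict[str, List[float]]


def merge_checkpoints(checkpoints: List[Checkpoint], weights: List[float]) -> Checkpoint:
    """Blend checkpoints that share a base architecture by weighted averaging."""
    if not checkpoints or len(checkpoints) != len(weights):
        raise ValueError("need one blending weight per checkpoint")
    total = sum(weights)
    if total <= 0:
        raise ValueError("blending weights must sum to a positive value")

    # Merging only makes sense when every checkpoint has the same parameters.
    names = set(checkpoints[0])
    for ckpt in checkpoints[1:]:
        if set(ckpt) != names:
            raise ValueError("checkpoints must share the same parameter names")

    merged: Checkpoint = {}
    for name in checkpoints[0]:
        size = len(checkpoints[0][name])
        if any(len(ckpt[name]) != size for ckpt in checkpoints):
            raise ValueError(f"shape mismatch for {name}")
        # Element-wise weighted average across all checkpoints.
        merged[name] = [
            sum(w * ckpt[name][i] for w, ckpt in zip(weights, checkpoints)) / total
            for i in range(size)
        ]
    return merged


# Two hypothetical fine-tunes of the same base model.
legal = {"layer0.weight": [1.0, 2.0], "layer0.bias": [0.0]}
biomed = {"layer0.weight": [3.0, 6.0], "layer0.bias": [1.0]}

uniform_soup = merge_checkpoints([legal, biomed], [1.0, 1.0])    # plain average
weighted_blend = merge_checkpoints([legal, biomed], [3.0, 1.0])  # favor the legal model
```

A uniform soup gives every checkpoint equal weight. Learning the blending weights, possibly per layer, is what would turn this static recipe into the trainable process the text describes.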

The edge of realized technology

Thank you for tuning in to week 212 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “The edge of realized technology.”

Welcome to the start of season 5. Don’t panic, we are still covering advancing technology including quantum, robotics, and artificial intelligence within the Lindahl Letter. I’ll be writing about the intersection of technology and modernity until the singularity. For better or worse, modernity’s shadow will continue to be the edge of realized technology. We are on the path to seeing a bunch of different technologies end up being realized in the not so distant future. That is why I’m so focused on the path toward realizing robotics, quantum, and agentic. That is where season 5 of the Lindahl Letter is going to pick up and start to dig into those topics at the edge of realized technology. To that end, I started to make a graphic of the timeline of major financial bubbles and extended it out to emerging technologies expected to deliver before 2045 [1]. You can modify the Python visualization code for this one if you want; I shared an executable version of it on GitHub.

Within that visualization I started to sketch out the next 10 most likely technologies we will see realized. Within each path toward realization is where private investment and ultimately retail investors will crowd into the market before it gets commoditized to the point where the initial leaders in the space have no first mover advantage and some type of bubble ensues. That does not mean these things won’t be game changing. I’m just expecting some type of financial crowding followed by pressure against expected profits that won’t be realized. Resulting from that would be some type of financial bubble which might very well be led by a huge windfall of some sort.
People made money on tulips and pepper before those markets crashed out.

* 2026, “AI Bubble”, “Tech”
* 2028, “Metaverse and XR Bubble”, “Tech/Speculative”
* 2029, “Robotics Bubble”, “Tech”
* 2031, “Climate Tech Bubble”, “Climate Tech”
* 2032, “Space Economy Bubble”, “Space Economy”
* 2033, “Biotech and Longevity Bubble”, “Biotech/Longevity”
* 2034, “Synthetic Biology and Food Tech Bubble”, “Synthetic Bio/Food Tech”
* 2035, “Quantum Bubble”, “Tech”
* 2035, “Neurotech and BCI Bubble”, “Neurotech/BCI”
* 2040, “Fusion Energy Bubble”, “Energy”

These edges of technology realization might not be in the right order or tied exactly to the right year, but I do think that directionally this list will prove to be an accurate prediction of when technology will be achieved and we will see meaningful changes to modernity. Futurist considerations abound for what might end up happening. This was my swing at predicting what’s next. Only time will tell if it was an accurate swing or it will be disrupted by some other emerging technology.

Going forward you are going to see my weekly writing efforts get split into 4 distinct buckets. My general weekly think pieces will stay here within the relative safety of the standard Lindahl Letter publication, writing about civics, civility, and civil society will be over on the Civic Honors domain, blogging will be done within the Functional Journal, and my hope is to resume daily posting back over on the nels.ai domain. Ideally, enough content will be generated in the major domains that only a small amount of blogging will occur. Going forward it is far better to produce meaningful work than to complete passages of extended navel gazing. Sure, being a reflective practitioner and blogging has its place, but sometimes all that writing about the process ends up being more circular than forward looking.

What’s next for the Lindahl Letter? New editions arrive every Friday.
If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead!

Links I’m sharing this week!

Footnotes:
[1] https://github.com/nelslindahlx/Data-Analysis/blob/master/TimelineofMajorFinancialBubbles.ipynb

This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit www.nelsx.com
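
For anyone who wants to play with the predicted bubble list from this edition without opening the notebook, here is a small sketch that holds the ten entries as data and renders a plain-text timeline. It is not the code from the GitHub notebook in footnote [1]; the names `PREDICTED_BUBBLES` and `render_timeline` are hypothetical, and the arrow-length layout is just one way to show spacing between years.

```python
from collections import Counter

# (year, label, category) entries copied from the list in this edition.
PREDICTED_BUBBLES = [
    (2026, "AI Bubble", "Tech"),
    (2028, "Metaverse and XR Bubble", "Tech/Speculative"),
    (2029, "Robotics Bubble", "Tech"),
    (2031, "Climate Tech Bubble", "Climate Tech"),
    (2032, "Space Economy Bubble", "Space Economy"),
    (2033, "Biotech and Longevity Bubble", "Biotech/Longevity"),
    (2034, "Synthetic Biology and Food Tech Bubble", "Synthetic Bio/Food Tech"),
    (2035, "Quantum Bubble", "Tech"),
    (2035, "Neurotech and BCI Bubble", "Neurotech/BCI"),
    (2040, "Fusion Energy Bubble", "Energy"),
]


def render_timeline(bubbles):
    """Return one text line per entry, with arrow length showing years elapsed."""
    ordered = sorted(bubbles)
    first_year = ordered[0][0]
    lines = []
    for year, label, category in ordered:
        arrow = "-" * (year - first_year) + ">"
        lines.append(f"{year} {arrow} {label} [{category}]")
    return lines


timeline = render_timeline(PREDICTED_BUBBLES)
category_counts = Counter(category for _, _, category in PREDICTED_BUBBLES)

for line in timeline:
    print(line)
```

Moving an entry to a different year only requires editing its tuple, which makes it easy to test how sensitive the ordering is to any single guess.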

Spooky Halloween edition: When Satoshi-Era Wallets Wake Up

Happy Halloween everybody! Thank you for tuning in to week 211 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “When Satoshi-Era Wallets Wake Up.”

Seriously, Bitcoin is weird. It has an enigmatic and anonymous founder. The origin story of how this cryptocurrency came to be is pretty much ineffable. Roughly a third of all bitcoin has never moved [1].

These dormant or maybe abandoned coins shape both the scarcity and the psychology of the network. Now, some of those early wallets are coming alive again, and their reawakening reveals a deeper story about profit, security, and the bleeding edge of quantum cryptography. Maybe some of these cutting edge massive quantum computers are being used to run Shor’s algorithm and recover the private keys for some of these older wallets [2]. That seems more likely to me than somebody remembering they had some old bitcoin after a decade and moving it around. We could write a really spooky short story about people waking up to old bitcoin wallets getting cracked by quantum computers running Shor’s algorithm. That is the type of short story that could move from fiction to non-fiction with one scientific breakthrough. It’s even possible it has already started to happen. By possible, I think it probably already is happening.

Speculation aside, it’s true that an estimated thirty percent of all mined bitcoin has been untouched for more than five years [3]. That is shocking. About seventeen percent of bitcoins have not moved in a decade [4]. Those figures mean that even as mining nears completion, a huge fraction of the network’s supply remains functionally absent or potentially abandoned. This long-term dormancy amplifies Bitcoin’s scarcity, turning lost or forgotten coins into a silent deflationary force. Yet in 2025, something shifted. Several ancient wallets, first active during Bitcoin’s infancy, have begun to stir after twelve to fourteen years of silence.
Their movements are rare, deliberate, and full of meaning.

Some of these wallets trace back to 2010 and 2011, a time when bitcoin traded for less than a dollar. In July, eight early addresses moved roughly eighty thousand bitcoin in a coordinated set of transfers [5]. That is wealth that once totaled a few thousand dollars but is now worth billions. Somebody made some shocking profits. Later, a miner-era wallet from 2010 moved four hundred bitcoin after twelve years of dormancy [6]. In October, an early 2011 wallet that had accumulated four thousand bitcoin sent a small test transaction of 150 coins before going quiet again [7]. None of these events caused market disruption, but each drew immediate attention. Every time an ancient wallet moves, it feels like a fragment of Bitcoin’s early history is stepping into the present.

Why are these early coins moving now? The first reason is straightforward economics. With bitcoin surpassing one hundred thousand dollars, even small transfers yield generational wealth. Another reason is technological maturity. Over the past decade, wallet recovery methods have improved, and holders who once misplaced keys or old software backups can now retrieve them. Security has also evolved. Many early wallets were built with primitive address types that expose their public keys, leaving them theoretically vulnerable to a future cryptographic breakthrough. This leads to the third and most forward-looking motivation: the quantum threat. That is the part I’m super curious about. Some of the larger quantum systems that I shared in my leaderboard could be active here, but we don’t really know.

Quantum computing is still developing, but progress is steady. Bitcoin relies on elliptic-curve digital signatures that would be mathematically vulnerable to sufficiently powerful quantum machines. The earliest wallets used formats that make this risk more immediate, because they reveal public keys on-chain once a transaction occurs.
If quantum computing advances far enough, those exposed keys could allow attackers to derive private keys and spend the coins. Experts estimate that a quarter of all existing bitcoin resides in such legacy formats. That reality has not escaped early holders. Some of the recent awakenings may reflect quiet migrations, with coins in classic cold wallets being moved to SegWit, multi-signature, or even post-quantum-resistant wallets to protect them from future compromise. These reactivations might not be about profit at all. They could be acts of defensive foresight from people who understand how close technology may be to challenging the foundations of digital security.

There are also practical motivations. Estate planning, custodial audits, and consolidation are all normal parts of managing large digital holdings. After more than a decade, early miners are updating their records, creating inheritance plans, and transferring assets to institutional custodians. The act of moving coins from an old address is sometimes less a finan
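
To put the wallet movements described in this edition in dollar terms, here is a quick back-of-the-envelope calculation. The transfer sizes come from the text; the price is an assumption, a round 100,000 dollars per coin, since the text only says bitcoin has surpassed that level. The names in the sketch are hypothetical.

```python
# Transfer sizes in BTC, taken from the events described in this edition.
TRANSFERS_BTC = {
    "eight early addresses (July)": 80_000,
    "2010 miner-era wallet": 400,
    "2011 wallet test transaction (October)": 150,
}

# Assumption: a round price per coin; the real price at each transfer differed.
ASSUMED_PRICE_USD = 100_000

# Dollar value of each transfer at the assumed price.
values_usd = {label: btc * ASSUMED_PRICE_USD for label, btc in TRANSFERS_BTC.items()}
total_usd = sum(values_usd.values())

for label, usd in values_usd.items():
    print(f"{label}: ${usd:,}")
print(f"total: ${total_usd:,}")
```

At that assumed price the July transfers alone come to 8 billion dollars, and even the 150-coin test transaction is worth 15 million.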

AI Is Burning Through Graphics Cards

Thank you for tuning in to week 210 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “AI Is Burning Through Graphics Cards.”

Generational wealth is being invested into data centers for AI. It’s so prevalent that you hear about it on the nightly news and municipalities are dealing with the power demands. The clock is ticking on graphics cards being used for AI inference. The current generation of GPUs was never designed to run around the clock under inference loads. These chips were originally built for bursts of rendering, not continuous model execution at scale. What we are seeing now is an industry trying to stretch gaming hardware into a role it was never meant to fill. The result is heat, power consumption, and a ticking clock based on the inevitable wear.

Each graphics card has a limited operational lifespan. These are not like bricks being used to build a house; they are just expensive computer hardware. The more intensive the workloads, the shorter that lifespan becomes. Fans fail, thermal paste dries out, and the silicon itself begins to degrade. Inference tasks, particularly when stacked across large fleets of GPUs, magnify this effect. The relentless pace of AI workloads accelerates the failure curve, turning once-premium cards into temporary consumables. I’m actually really curious what is going to happen to all of them at the end of this cycle. A secondary market does exist for these used devices and companies like Iron Mountain will help data centers with secure disposal.

By most reasonable estimates, there are now between 3.5 and 4.5 million NVIDIA data-center GPUs actively deployed in production environments. Hyperscalers such as Meta, Microsoft, and Google each operate hundreds of thousands of units, while smaller data centers fill out the rest of the global total.
Each GPU represents a remarkable amount of compute density, but also a constant thermal and economic liability. Even with optimized cooling, sustained inference loads drive high thermal stress and power draw that shorten component life. These systems were never meant to run 24 hours a day, 365 days a year.

Under heavy duty cycles, many GPUs experience significant degradation within one to three years of continuous operation. The warranties often match that window, which reflects a design expectation rather than coincidence. Silicon aging and persistent thermal cycling all take their toll. Even when the hardware technically survives longer, it becomes economically obsolete as new architectures quickly double efficiency and throughput. The pace of improvement ensures that by 2027 or 2028, most of today’s fleet will either be retired, resold, or relegated to low-priority inference tasks. Right now TSMC would have to make the chips to replenish this fleet of GPUs which would be outrageously expensive. Both NVIDIA and TSMC manufacturing teams could be looking at a huge impending need for production or a shift to a new type of technology.

That replacement cycle has massive implications. The cost of refreshing millions of GPUs every few years is enormous, and the environmental impact of manufacturing and disposing of that much silicon is even harder to ignore. As AI inference continues to scale, this churn becomes unsustainable. Companies are already exploring purpose-built accelerators, ASICs, and FPGAs that can deliver better efficiency and longer service life. These designs aim to handle continuous inference without the same thermal or aging limitations that plague graphics cards.

Sustainability will define the next phase of AI infrastructure. The transition away from general-purpose GPUs is underway, but what comes after silicon remains uncertain.
Research into photonic computing, quantum processors, and neuromorphic architectures offers glimpses of what a post-GPU world might look like. Each of these alternatives seeks to break free from the limits of traditional chips while extending useful lifespans. The next leap in AI hardware will not be measured by sheer speed, but by how well it can endure the relentless demands of inference at scale.

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead!

Links I’m sharing this week!
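
The replacement cycle in this edition reduces to simple arithmetic. The sketch below uses only the two ranges given in the text, a fleet of 3.5 to 4.5 million GPUs and a useful life of one to three years. It assumes a steady state in which the fleet is not growing and cards wear out evenly, which is a simplification, and the function name is hypothetical.

```python
def annual_replacements(fleet_size: float, lifespan_years: float) -> float:
    """GPUs that must be replaced per year to hold a fleet at constant size."""
    if lifespan_years <= 0:
        raise ValueError("lifespan must be positive")
    return fleet_size / lifespan_years


# Ranges quoted in the text.
FLEET_LOW, FLEET_HIGH = 3_500_000, 4_500_000
LIFESPAN_SHORT, LIFESPAN_LONG = 1, 3  # years of continuous operation

# Best case: smallest fleet, longest-lived cards.
best_case = annual_replacements(FLEET_LOW, LIFESPAN_LONG)
# Worst case: largest fleet, shortest-lived cards.
worst_case = annual_replacements(FLEET_HIGH, LIFESPAN_SHORT)

print(f"best case:  {best_case:,.0f} GPUs per year")
print(f"worst case: {worst_case:,.0f} GPUs per year")
```

Even the best case implies more than a million replacement GPUs every year just to stand still, before any growth in inference demand.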

Social media stopped being social

Thank you for tuning in to week 209 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Social media stopped being social.”

Before we get going this week, I need to provide an update about last week’s post. I take full responsibility, as the principal writer here, that last week my writing efforts were just not up to par within the 208th Lindahl Letter publication. You have come to expect better from me and last week I just delivered a dud of a post. It’s the first post in a long time that actively drove people to leave the Lindahl Letter. It’s pretty easy to see the signal within the noise when something was bad enough to drive people away and I take responsibility for delivering that subpar effort.

That being noted, let’s pivot back to the main topic at hand related to social media.

I’m not sure if social media was ever really about togetherness and being social. Those are things after the fact that I want to ascribe to it. Let’s blame it on nostalgia. Communities tend to align with place, interest, or circumstance. Certainly online communities that are highly focused and targeted on a distinct community probably work. Later in a different essay it might be worth digging into the pockets of working online communities. That side of the coin however is not the focus of this missive.

Things were different back when Twitter arrived in 2006 and ultimately became popular during South by Southwest in 2007. During the initial development and discovery of these applications for social media sharing things were different and maybe that newness is now something to be nostalgic about.
Social media is now fragmented, and it stopped being social the moment algorithms learned how to predict the things that would hold our attention better than we could possibly direct it.

What started the social media ball rolling as a digital gathering of friends slowly transformed into a system of engineered consumption. The feed no longer reflects our relationships. It reflects what the platform believes will keep us scrolling. In the process, the human layer was optimized out of existence. I am hoping the Substack experience ends up being different. Right now Substack is really my only active social media platform. It’s full of actual readers and writers for the most part. I’m trying to get into the swing of using Substack Notes, but that just seems to be an ongoing process of trying to figure it out. Previously, I tried to get into posting on Bluesky and I’ll admit that during Colorado Avalanche games it did feel like some level of community existed. Outside of gametime I just never really got much out of the Bluesky experience.

Let’s take a step back from where we are now to consider history for a moment. Things were different for the first wave adopters. The first generation of social networks were built around connection. You followed people you knew, saw what they were doing, and commented because you cared. The platforms of today are not built for connection or organized around community; they are built for amplification. The more content flows, the more data moves, and the more ads get served. The mechanics of community were replaced by the logic of engagement.

That shift changed the culture. Ultimately, it spawned the influencer movement. Maybe it’s a moment or it could be a watershed change away from public intellectuals to something else more product centric. People began curating identities instead of sharing moments. Every post became a performance. Every response was an opportunity for algorithmic reinforcement.
What once felt like a conversation now feels like an audition. Social validation metrics turned communication into competition. The ultimate winners were the people who ended up making a career within this new flow of attention online.

As that dynamic took hold, the real social behavior moved into the shadows. Private group chats, invite-only communities, and niche networks quietly took over the role that public timelines once held. The visible web is now dominated by content farms and brand influencers. The meaningful conversations happen elsewhere, often out of reach of recommendation systems. What used to feel like a town square has become a noisy digital strip mall.

Social networks have become media networks. In some ways they are just the next generation of broadcast television or radio. It’s just more targeted and in some ways a lot more divisive. They are not spaces for dialogue but for distribution. Every interaction is mediated through a system that values attention over authenticity. That is why the average user now feels less connected than ever, even as they scroll through an endless feed of “content.” The core function of social media has inverted. It no longer connects people directly; instead, it connects people to platforms.

We may be entering a post-social era online. Connection is returning to smaller spaces: g

Building with constant model churn

Day after release update: I guess it was the 208th post where we hit the proverbial wall with a dud of a post. This post in retrospect turned out to be one of my weaker efforts. I thought it was a strong take about dealing with the rate of change in model development, but it was just not focused and targeted based on delivering quality and insights.

Thank you for tuning in to week 208 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Building with constant model churn.”

Developers have spent a lot of time in the past patching software. That happens based on vulnerabilities, edge cases, and performance issues. All this vibe-coded content and things built on models are not getting any patches to make them better going forward. You may get a new release or a new model, but that patch to save you from vulnerabilities is not being developed and is not on the way. It is the nature of modern development. The ecosystem of dependencies is real. However, the pace of model development has created an unusual environment for anyone trying to build durable systems.

You cannot really hot swap models within production systems. That just does not work. In the last five years, we have seen large language model releases from OpenAI, Anthropic, Google, Meta, Mistral, Cohere, and several open-source groups. Each iteration has been faster, larger, and sometimes more efficient than the one before. What has not been stable is the interface between models and the systems people build around them. Even seemingly small changes in context window size, output quality, or API availability ripple outward and cause redesigns, migrations, and sudden pivots. Sometimes these changes happen with no warning whatsoever.

For builders, this creates a paradox. The potential upside of adopting a newer model is undeniable: better reasoning, lower costs, and expanded capabilities.
At the same time, the risk of betting on an API or framework that may be deprecated in months is a constant concern. Some developers chase every release, weaving the newest model into their applications as quickly as possible. Others step back, building abstractions and wrappers that allow for switching models without disrupting core workflows. Neither path offers complete insulation from this wave of almost continuous churn.

The history of technology offers parallels. Software engineers have long had to deal with shifting operating systems, frameworks, and libraries. What makes this moment different is the velocity of change and the sheer dependency of emerging applications on model behavior. The model is not just another dependency, it is the foundation of the system. When that foundation shifts, everything built on top of it must be reconsidered.

There is also a deeper strategic question. Should builders lean into constant change and accept churn as a feature of the landscape? Or should they try to design in ways that minimize dependency, focusing more on proprietary data pipelines, unique integrations, and distinctive user experiences? Both strategies reflect an awareness that stability is not guaranteed in this ecosystem. The companies that endure will be the ones that treat churn not as an annoyance but as a design constraint.

Things to consider:

* The lack of patching for AI models makes long-term maintenance difficult.
* Model churn introduces structural instability into modern systems.
* Abstraction layers help, but they cannot prevent cascading change.
* Treating churn as a core design constraint is a pragmatic approach.
* Builders must balance innovation speed with long-term stability.

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing.
Make sure to stay curious, stay informed, and enjoy the week ahead!
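
The “abstractions and wrappers” strategy from this edition can be sketched as a thin interface that application code depends on instead of any one vendor. This is a minimal illustration under stated assumptions: `VendorModelV1` and `VendorModelV2` are hypothetical stand-ins that return canned strings, not real API clients, and `ModelRegistry` is an invented helper rather than part of any library.

```python
from typing import Dict, Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str:
        ...


class VendorModelV1:
    """Hypothetical stand-in for an older model client."""

    def complete(self, prompt: str) -> str:
        return f"v1: {prompt}"


class VendorModelV2:
    """Hypothetical stand-in for a newer model with a different output style."""

    def complete(self, prompt: str) -> str:
        return f"V2 >> {prompt}"


class ModelRegistry:
    """Keeps named models and routes calls to whichever one is active."""

    def __init__(self) -> None:
        self._models: Dict[str, TextModel] = {}
        self._active: str = ""

    def register(self, name: str, model: TextModel) -> None:
        self._models[name] = model

    def use(self, name: str) -> None:
        if name not in self._models:
            raise KeyError(f"unknown model: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if not self._active:
            raise RuntimeError("no active model selected")
        return self._models[self._active].complete(prompt)


def summarize(registry: ModelRegistry, text: str) -> str:
    """Application code: it never names a specific vendor or version."""
    return registry.complete(f"Summarize: {text}")


registry = ModelRegistry()
registry.register("v1", VendorModelV1())
registry.register("v2", VendorModelV2())

registry.use("v1")
before = summarize(registry, "hello")
registry.use("v2")  # the swap is one line, but the output format still changed
after = summarize(registry, "hello")
```

The swap itself is a single call, yet `before` and `after` differ in format, so anything downstream that parses the output still has to be rechecked. That is the sense in which abstraction layers help but cannot prevent cascading change.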

Enforcing AI standards without exception

Thank you for tuning in to week 207 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Enforcing AI standards without exception.”

Standards are something we need to spend more time talking about. That is a general statement and not a special argument. Years ago, I actually witnessed a physical desk sign at an office that said, “We either have standards or we don’t.” It’s not a great mystery how that particular leader felt about standards. That type of adherence to standards is not all that common. In our LLM-sponsored, prompt-first-and-ask-questions-later world, people just keep prompting. Allowing models to just keep generating without standards is how we ended up where we are right now. Those tokens are being burnt at prodigious rates. All of those burnt tokens yield nothing reusable or even effectively carried forward. Mostly they are highly siloed outputs to an audience of one. They are all spent and the electricity and compute used will never be recovered. They are just an expense on somebody else’s balance sheet.

Everything about the open web is pretty much in rapid decline. I would argue that enforcing standards without exception is the only way the end user can truly control the agenda or hope to manage the ultimate outcome when working with AI. It might even help us save the internet. That cause however might have already been lost. One of the great ironies of generative AI is that it demands more discipline from the human interacting with it to get quality outputs, not less. Sure, prompt engineering has become a hands on the keyboard type of sport, but my best guess is everything ends up being more conversational in the end. You would expect a machine to be the enforcer of rules, to deliver outputs with mechanical precision. Instead, the responsibility ultimately falls back on the end user to enforce standards at every turn.
The system will generate endlessly, but unless you control the agenda, it will wander away from the very standards that define your work. A lot of people are also just creating AI slop and, potentially worse, AI-generated workslop.

This is not a trivial annoyance. It is the defining challenge of using AI effectively. You might tell a system: no em dashes, strict numeric citations, Substack-compatible footnotes. And for a moment, it will comply. Then, in the next draft, it slips back into its defaults. Suddenly the citations are misplaced, the formatting is broken, or the output is square when you clearly require 14:10. It doesn’t matter how many times you’ve said it; for some reason the system’s memory for discipline is shallow. If you do not enforce the standard without exception, the drift takes over. For an organization, that can mean tens or thousands of drifting lines of argument and fragmented results.

That is why the end user must step into a role that looks less like automation’s promise and more like quality assurance. You are not simply a writer or a collaborator. You are the auditor, the rule enforcer, the one who stops the drift. We either have standards or we don’t. Allow one exception, and you have taught the system that exceptions are acceptable. Enforce the standard every time, and you create a boundary strong enough to shape consistent results.

This relentless enforcement becomes the core of collaboration. Without it, the system defaults to “plausible” instead of “correct,” “close enough” instead of “aligned.” You cannot rely on the machine to protect the integrity of your work or really even to have solid consistent outputs. That responsibility is yours. The human must guard the agenda with vigilance and insistence. Outside of ruthlessly enforcing standards without exception the path forward is just full of slop.

Over time, this process builds more than consistency. It builds identity.
A body of work that holds together across hundreds of posts or thousands of outputs does so because the user enforced the standards that give it coherence. We may very well look at the internet archives before all the LLM training as untainted and everything after that point with skepticism. I’m not arguing that everything in that first tranche of content was high quality or even accurate, but it was before the models. Without that enforcement, the work would fracture into a mix of styles, structures, and shortcuts. Enforcing standards without exception is exhausting, but it is also the only way to produce work that reflects your agenda rather than the system’s defaults.

Things to consider:

* AI will always drift back toward its defaults unless the user enforces rules consistently.
* The promise of automation is inverted: the human enforces discipline, not the machine.
* Exceptions teach the system the wrong lesson and erode consistency.
* Vigilant enforcement is what turns scattered outputs into a coherent body of work.
* Control of the agenda belongs to the end user, or it is lost altogether.

What’s
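One way to picture enforcement without exception is a small check that every draft has to pass before it is accepted. The sketch below is only an illustration and not something described in the episode: the two rules come from the examples in the text (no em dashes, numeric citations only), while the function names `check_standards` and `enforce` and the author-year pattern are assumptions made up for this example.

```python
import re

# Hypothetical house rules, based on the examples in the episode text.
EM_DASH = "\u2014"
# Assumed pattern for author-year citations such as "(Smith, 2020)".
AUTHOR_YEAR = re.compile(r"\([A-Z][A-Za-z]+,? \d{4}\)")


def check_standards(draft: str) -> list[str]:
    """Return a list of violations; an empty list means the draft passes."""
    violations = []
    for number, line in enumerate(draft.splitlines(), start=1):
        if EM_DASH in line:
            violations.append(f"line {number}: em dash found")
        if AUTHOR_YEAR.search(line):
            violations.append(f"line {number}: author-year citation, use [n]")
    return violations


def enforce(draft: str) -> str:
    """Reject the draft on any violation. There is no exception path."""
    violations = check_standards(draft)
    if violations:
        raise ValueError("; ".join(violations))
    return draft
```

The design choice that matters here is that `enforce` has no override flag. A draft either passes every rule or goes back for another pass, which is the desk-sign idea expressed as a gate.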

The Great Tokenapocalypse

Thank you for tuning in to week 206 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration is “The Great Tokenapocalypse.”

As large language models reach deeper into consumer devices, the cost of running them becomes the real bottleneck. So many tokens get burned with no ROI or use case for the company burning them; it’s really out of control. Almost as out of control as the sunk cost of data centers that will probably be regretted at some point in the next 5 years. It’s sort of the unspoken reality of an arms race: companies are building data centers that just depreciate and spending compute resources without any plan for recovering the cost. This week explores how token economics is silently shaping the deployment strategies of Google and Apple.

You may have noticed something strange about the rollout of generative AI: despite Google’s global reach and technical infrastructure, Gemini is not yet present on every device. It isn’t quietly running in the background on your Nest Hub, it doesn’t summarize content on your Pixel Watch, and it hasn’t taken over the always-on interactions that dominate the smart home experience. On paper, Gemini could power all of this, but in practice, it doesn’t. The reasons are not technical, but economic.

It’s the tokens. Each time a large language model like Gemini processes a prompt or generates a response, it consumes tokens, which are effectively units of computation that translate directly into cost. This cost is not abstract. It is real-time, metered, and at scale it becomes wildly continuous with enough usage. When you ask Gemini to summarize an email or rewrite a paragraph, you’re triggering a live cloud inference cycle that draws directly on Google’s TPU infrastructure. At a small scale, these requests are manageable. But when deployed across millions of devices, in billions of micro-interactions, the financial and infrastructure burden becomes extreme.
What looks like product restraint is actually cost containment. Google is avoiding what could become a tokenapocalypse: a runaway escalation of inference demand that outpaces both compute supply and operating budget.

Gemini was designed for centralized, high-performance environments. It was not optimized for low-power edge devices or offline operation. Its rollout has been concentrated in strategic, high-leverage use cases: Workspace productivity, Pixel exclusives, and experimental features inside Search Labs. These are high-value zones where the cost per token can be justified. Gemini has not been deployed ambiently in the wild on smart speakers, in Android Auto, or on lightweight wearables, mostly because those endpoints offer little to no margin against token cost. The model cannot run constantly without triggering runaway cloud expenditure. Until inference becomes drastically cheaper or edge-native Gemini variants emerge, Google is likely to continue rationing its deployment to protect against economic overextension.

Apple, by contrast, has chosen an entirely different path forward. It elected a path that avoids the token problem from the outset. Its 2024 rollout of “Apple Intelligence” emphasized a local-first architecture built around on-device models. Instead of sending every prompt to the cloud, Apple routes the vast majority of inference through its A-series and M-series silicon. This strategy means that users can rewrite notes, summarize messages, or interact with Siri entirely offline, with zero token cost to Apple. When tasks exceed the capability of local models, they are sent to Apple’s “Private Cloud Compute” system, but this fallback is used selectively, with strict privacy and latency guarantees.

Apple’s approach isn’t just a branding play. It reflects a fundamental architectural decision to avoid the economics of inference altogether.
Apple doesn’t operate a hyperscale public cloud business, so it has no incentive to absorb or monetize cloud-based generative AI usage. Its profits come from hardware margins and platform services. This gives Apple the freedom to constrain usage, limit interaction complexity, and push AI to the edge. That is a strategy it can get away with, ultimately without incurring the compounding costs that Google faces. It’s a token-avoidant strategy, and it may prove to be the more sustainable one.

Where Google builds outward from a full-stack cloud foundation, Apple builds inward from a controlled edge. Google’s strategy scales across models and modalities, but each expansion amplifies cost. Apple’s strategy constrains functionality but keeps economics stable. Both are reacting to the same underlying pressure: token costs are rising faster than monetization models can support. The more embedded the model becomes, the more tokens flow. That stark reality makes it more urgent to rethink deployment patterns. This isn’t just a question of technical feasibility. It’s a matter of financial survivabi

Apple’s Hidden AI Strategy

Thank you for tuning in to week 205 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Apple’s Hidden AI Strategy: Waiting it out with token avoidance as a first principle.”

Apple’s relative restraint in deploying large-scale generative AI isn’t just about privacy posturing or design philosophy. Maybe it is just the latest supply chain management initiative in terms of managing tokens. It may reflect a deliberate avoidance of token-expensive cloud inference, which is an infrastructural and financial commitment that Apple has historically chosen not to make. This choice is akin to keeping supply chain costs down; this type of effort fits with the general operating model. Right now, taking on heavy token usage would just eat profits.

Apple’s approach to “Apple Intelligence,” announced in 2024, hinges on three pillars:

* On-device first: Apple designed its models (small language models and transformer variants) to run locally on A17+ and M-series chips. This dramatically reduces reliance on cloud GPUs and token accounting. If you generate 200 tokens on your phone, there’s no inference cost to Apple. This method avoids cloud costs, but makes the hardware the tipping point.
* Private Cloud Compute: For tasks that exceed the capabilities of on-device models, Apple routes requests to its proprietary cloud using Secure Enclaves. But this only happens for high-value or infrequent tasks. That would include things like summarizing a document, generating email replies, or rewriting notes. This keeps cloud token loads minimal and predictable.
* Selective rollout: Apple isn’t putting generative models everywhere. The system isn’t always listening, and “AI” is offered as an opt-in assistant across Mail, Notes, Safari, and Siri. There’s no ChatGPT clone embedded system-wide, and certainly nothing ambient like Gemini could theoretically become.

You can see, based on bottom-line and balance-sheet concerns, why Apple’s caution probably makes financial sense in the long run. Apple sells hardware, not compute. Even some of the cloud-forward vendors might operate at a loss at Apple scale. Unlike Google or Microsoft, it doesn’t have an economic engine tied to cloud usage. If it gave every iPhone user unlimited generative AI access via the cloud, it would have to subsidize trillions of tokens per year without monetization return. Nothing in the workflow has any ROI for Apple, since the hardware is a sunk cost and it has not offered a standalone monthly AI service. They let everybody else spend billions on hardware, data centers, and electricity.

Instead, Apple wants:

* Efficiency over scale.
* Local inference over cloud latency.
* Sporadic usage over daily token floods.

In short: Apple is playing defense against the tokenapocalypse before it ever hits. That tokenapocalypse will happen when billions of devices become token hungry.

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead! This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit www.nelsx.com
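To make the scale argument concrete, here is a back-of-the-envelope sketch of how cloud token volume adds up. Every number in it is a hypothetical placeholder chosen for round arithmetic, not a figure from Apple, Google, or the episode: the device count, requests per day, tokens per request, and the price per million tokens are all assumptions.

```python
def annual_tokens(devices: int, cloud_requests_per_day: int,
                  tokens_per_request: int) -> int:
    """Tokens sent to the cloud per year across the whole device fleet."""
    return devices * cloud_requests_per_day * tokens_per_request * 365


def annual_cost_usd(tokens: int, usd_per_million_tokens: float) -> float:
    """Metered inference cost for a given token volume."""
    return tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical inputs: one billion devices, 500 tokens per request,
# and an assumed blended price of $1 per million tokens.
DEVICES = 1_000_000_000
TOKENS_PER_REQUEST = 500
PRICE = 1.0

# Scenario A: every request goes to the cloud (10 per device per day).
always_cloud = annual_tokens(DEVICES, 10, TOKENS_PER_REQUEST)
# Scenario B: local-first, only 1 request per device per day reaches the cloud.
local_first = annual_tokens(DEVICES, 1, TOKENS_PER_REQUEST)

for name, tokens in [("always cloud", always_cloud), ("local first", local_first)]:
    cost = annual_cost_usd(tokens, PRICE)
    print(f"{name}: {tokens:,} tokens per year, about ${cost:,.0f}")
```

Under these made-up inputs the always-cloud case comes to roughly 1.8 quadrillion tokens and about $1.8 billion a year, and the local-first case is a tenth of that. The point is the shape of the multiplication rather than the specific totals: cost scales with devices times usage, so keeping requests on the device removes them from the bill entirely.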

Context window garbage collection

Thank you for tuning in to week 204 of the Lindahl Letter publication. A new edition arrives every Friday. This week the topic under consideration for the Lindahl Letter is, “Context window garbage collection.”

Here we are this week contemplating how to clean up the mess from all these celebrated LLM chat sessions. It’s a disjointed mess that lacks federation or dare we say portability. Context window garbage collection explores the deeper, process-based idea of how large language models might manage overflowing histories by selectively pruning, discarding, or compressing tokens to maintain efficiency without losing coherence. My thought on this is certainly that we would all benefit from portable knowledge sharding, but that is just one way to look at the potential set of solutions that need to be built. Another way to slice the apple up and put just the best parts back together again would be to build out some context window garbage collection.

When you open a long conversation with a large language model, the context window eventually fills with tokens from your prompts and the model’s replies. These windows have strict limits, whether 128k tokens, 200k tokens, or more, and yet our usage tends to grow indefinitely. As prompts expand, sessions become inefficient and costly, sometimes degrading in coherence as irrelevant or outdated details pile up. We have all run into hallucinations and just weird output from models. At this point in the experience the next best question becomes very clear. We have to evaluate how models should manage their overflowing context windows during or at the end of a chat session.

In programming, garbage collection has long been the answer to similar problems. Long garbage collection problems have literally kept me up at night. Computer systems with finite memory must constantly decide what to keep and what to discard.
Techniques such as reference counting, mark-and-sweep, and generational garbage collection have been developed to handle this challenge. The analogy we can build out here is very straightforward: in a world where context is the working memory of LLMs, garbage collection could provide the rules and processes for pruning, summarizing, or discarding tokens without breaking continuity.

Several strategies already hint at how this could work. Some systems automatically prune less relevant history, while others compress sections of text into summaries or embeddings that can be retrieved later. I would run a knowledge reduce function based on my previously shared research, but I always think that is the answer. User-directed pinning, where important content is marked as permanent, is another possible feature. In longer interactions, models could run background “cleanup passes,” automatically condensing earlier exchanges into portable knowledge shards. Each of these approaches mirrors classic computing strategies while being adapted to the new problem space of language models.

The risks are obvious. Things could go sideways. We face a direct computing time cost associated with this effort. Poorly designed garbage collection could lead to subtle context loss, missing small but crucial details. Summarization may introduce semantic drift or hallucinations. I would argue that properly structured context will actually reduce drift or hallucinations. Users may also resist invisible pruning, questioning whether they can trust a model that silently discards information. The challenge lies in balancing efficiency, fidelity, and transparency, ensuring that garbage collection makes interactions smoother rather than introducing new points of failure.

Looking forward, context window garbage collection could become a fundamental layer of model architecture.
Standardized processes and even some APIs might emerge to expose garbage collection logs to users or allow customization of pruning strategies. Entire ecosystems of agents could share compressed or pruned context shards across models, creating interoperability where today there is only fragmentation. Just as garbage collection enabled more scalable and reliable programming environments, context window garbage collection may become the invisible backbone of scalable AI interaction.

Things to consider:

* Should context garbage collection be visible and user-controllable?
* Can models balance efficiency with fidelity when pruning?
* What lessons from programming garbage collection apply directly to LLMs?
* Does context GC make interoperability between models easier or harder?

What’s next for the Lindahl Letter? New editions arrive every Friday. If you are still listening at this point and enjoyed this content, then please take a moment and share it with a friend. If you are new to the Lindahl Letter, then please consider subscribing. Make sure to stay curious, stay informed, and enjoy the week ahead! This is a public episode. If you would like to discuss this with other subscribers or get access
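The strategies described in this episode (pinning, keeping recent turns, and condensing older exchanges) can be sketched as a single cleanup pass. This is a minimal illustration under loud assumptions and not how any particular model or vendor manages context: `Message`, `count_tokens`, `summarize`, and `collect` are hypothetical names, the token counter is a crude word count, and the summarizer is a placeholder where a real system would call a model.

```python
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    pinned: bool = False  # user-directed pinning: never collected


def count_tokens(text: str) -> int:
    # Crude stand-in: counts whitespace-separated words, not real tokens.
    return len(text.split())


def summarize(messages: list[Message]) -> Message:
    # Placeholder summarizer: keeps the first three words of each message.
    parts = [" ".join(m.text.split()[:3]) for m in messages]
    return Message("summary: " + " | ".join(parts))


def collect(history: list[Message], budget: int,
            keep_recent: int = 2) -> list[Message]:
    """Condense old, unpinned messages into one summary when over budget."""
    total = sum(count_tokens(m.text) for m in history)
    if total <= budget:
        return history  # under budget, nothing to collect
    split = max(len(history) - keep_recent, 0)
    old, recent = history[:split], history[split:]
    pinned = [m for m in old if m.pinned]
    garbage = [m for m in old if not m.pinned]
    if not garbage:
        return history  # everything old is pinned, nothing can be freed
    # Note: pinned items are moved ahead of the summary, so the original
    # ordering of older messages is not preserved in this sketch.
    return pinned + [summarize(garbage)] + recent
```

A short usage example, with made-up messages:

```python
history = [
    Message("alpha beta gamma delta epsilon"),
    Message("keep this forever please", pinned=True),
    Message("one two three four five six"),
    Message("recent question here"),
    Message("recent answer here"),
]
compacted = collect(history, budget=10)
for message in compacted:
    print(message.pinned, message.text)
```

The pass does not guarantee the result fits the budget, and the summary step is exactly where the semantic drift risk mentioned above would enter. Exposing what landed in `garbage` to the user would be one way to address the transparency concern.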

Portable knowledge sharding