Show overview

The AI podcast for product teams has been publishing since 2024, and across the 2 years since has built a catalogue of 45 episodes. That works out to roughly 40 hours of audio in total. Releases follow a monthly cadence.

Episodes typically run thirty-five to sixty minutes, with most landing between 46 and 54 minutes, and the run-time is fairly consistent across the catalogue. None of the episodes are flagged explicit by the publisher. It is catalogued as an EN-language Business show.

The show is actively publishing — the most recent episode landed 2 days ago, with 6 episodes already out so far this year. The busiest year was 2024, with 23 episodes published. Published by Arpy Dragffy.

From the publisher

Podcast and newsletter for product teams looking to deliver innovative AI products and features. productimpactpod.substack.com

Latest Episodes

Governance, Context, and the Org-Design Reckoning

The Most Important Data Points in AI Right Now

Your AI Strategy Is a Pile of Demos

Let’s stop pretending. Most AI strategies are just a collection of pilots that nobody had the courage to kill. The data this period is brutal: 95% of genAI pilots stall. Only 11% reach production in financial services. Microsoft, the biggest company in the world, with the best distribution on the planet, just reorganized Copilot because nobody internally could agree on what it was supposed to be. And while enterprises burn cycles debating governance frameworks, a new class of startups is quietly replacing entire job functions. Not assisting. Replacing. The gap between the people who get this and everyone else isn’t a skills gap. It’s a courage gap. This edition is about which side you’re on.

What You’ll Learn in This Edition

This edition confronts the uncomfortable reality that most AI investments are producing demos, not outcomes, and the structural reasons why.

* 🎙 Why agents are automating your thinking, not just your tasks, and why that distinction matters more than any model release
* ✍️ Copilot’s identity crisis is the most important product failure of 2026 so far
* 👉 The single variable that predicts AI maturity 7x better than technology choices
* 1️⃣ Why advertising AI use is now a financial liability for professional services firms
* 2️⃣ The inference cost crisis that threatens every AI business model, including OpenAI’s

Episode 4: The Era of Agents - Your Cognition Is the Product Now

We mapped three years of AI evolution in this episode and landed somewhere uncomfortable. Era one gave us wrong answers. Era two gave us wrong context. Era three, agents, is giving us wrong actions. And the stakes compound with each era because AI is no longer just saying things. It’s doing things.

Brittany brought the number that should haunt every product leader: only 6% of organizations have fully deployed any kind of agent. Copilot hit 30% weekly active usage after six months, meaning 70% of enterprise users basically stopped opening it. The tools are moving at an extraordinary pace. Almost nobody is keeping up.

We profiled four startups winning the point-solution war that most people haven’t heard of. But the real conversation was about what happens when you hand your thinking to an agent. Not your typing. Not your scheduling. Your thinking: the research, the monitoring, the analysis, the synthesis. Something changes in you when you do that. And most people haven’t reckoned with what that means.

“We’ve trained generations of people to think linearly. Step one, step two, step three, fill out this form, follow this process. Agents don’t work like that. Agents require you to think in terms of outcomes, connections, and context.” (Arpy)

Listen now: Spotify | Apple Podcasts | YouTube

You’re invited to join the AI Strategy Experiments Zoom call today

Today (March 27) at 1pm ET we’re hosting a small group of strategists, builders, and designers sharing their experiments and questions. Register here.

$490 billion in enterprise AI spending is delivering nothing. That’s not a technology failure. It’s a value creation failure. AI Value Acceleration exists to close that gap, diagnosing where AI value stalls and building playbooks that actually work. Value Assessment in 3 weeks. Value Amplification to go deep. Value Acceleration to prove what works. aivalueacceleration.com

Copilot Didn’t Fail. It Succeeded at Not Knowing What It Is.

Bloomberg reported that internal confusion over Copilot’s role, personality, and strategy has prompted a reorganization at Microsoft.

Read that again. Internal confusion. Not external competition. Not technical limitations. The people building Copilot couldn’t agree on what it was for. Microsoft had everything a product could dream of: billions in funding, integration into every Office app, the largest enterprise distribution network on earth, and access to the most powerful models available. It didn’t matter. Without a clear product identity, all that distribution just delivered confusion at scale.

The uncomfortable truth: most AI products shipping today have the same disease. They’re a bundle of capabilities searching for a purpose. They demo beautifully. They onboard poorly. They get abandoned quietly. If the biggest company in the world can’t brute-force its way to product-market fit for an AI assistant, what makes you think your team can skip the hard work of defining what your AI product is actually for?

BCG: Why Usage Is Up but Impact Is Not

Employee-centric organizations are 7x more likely to be AI mature. Not 7% more likely. Seven times. Employee-centricity explains ~36% of variance in AI maturity outcomes. Model selection explains almost none of it.

Over 85% of organizations remain stuck at basic task assistance. Fewer than 10% have reached anything resembling semiautonomous collaboration. The teams pulling ahead didn’t start with better tools. They started with cultures where people felt safe to experiment, fail, and teach each other what they learned. HBR confirmed it separately: peer influenc

75% of Enterprise AI Fails. The Fix Isn't a Better Model.

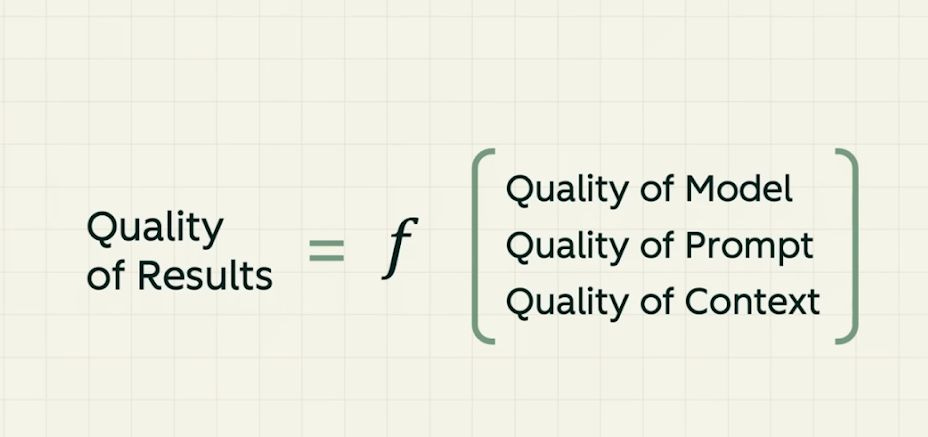

Every influencer is drooling over Claude Code skills files. Every product team is chasing the next model release. But for two years, the data has been screaming the same thing: capability isn’t the bottleneck. Context is. This edition unpacks what that actually means: why structured business knowledge is the highest-leverage investment a product team can make, what the “context wars” look like from the inside, and why the teams winning aren’t the ones with the best models. They’re the ones whose AI actually understands their business.

What You’ll Learn in This Edition

This edition confronts the structural reason most AI products fail: they’re missing the context that makes capability useful.

* Why Juan Sequeda from ServiceNow says “hope is not a strategy”, and what to build instead of better prompts
* The three-layer knowledge framework that gives AI a shared language across your entire organization
* CNBC’s “silent failure at scale” investigation reveals why 91% of AI models degrade without anyone noticing
* Microsoft just adopted ontology, the same concept Juan has championed for 20 years, as the foundation of its agentic AI architecture
* Citadel Securities data shows software engineer job postings rising 11% YoY despite the displacement narrative

Episode 3: Context Is the New Moat - Why Your AI Needs Business Knowledge, Not Better Prompts

Every influencer is drooling over skills files and prompt templates. Juan Sequeda, Principal Scientist at data.world (acquired by ServiceNow), has spent 20 years proving that none of it works without structured business knowledge underneath. In this episode, Juan breaks down the three-layer framework (business metadata, technical metadata, and the mapping layer that creates real semantics) and explains why the teams investing in ontology today will compound value across every AI use case they build next. His blunt assessment of skills files as a production strategy: “Hope is an interesting strategy. It’s not one that I add to my strategy.”

“If you just edit in skills, I don’t think that’s gonna be the solution to your problem. You’ll have a great POC. It’ll work for the use cases you tested on. Are you willing to put your career on the line and put that in production?” (Juan Sequeda)

Listen on Spotify | Apple Podcasts | YouTube

Context isn’t a nice-to-have. It’s the architecture layer that determines whether your AI product delivers consistent, measurable value or drifts into silent failure. PH1 built this framework to illustrate what Juan Sequeda has been researching for two decades: intent, background, examples, and templates aren’t prompt engineering tricks; they’re the structural foundation that transforms an AI system from a “forever intern” into a strategic partner. Without them, you’re hoping the model figures out what “order” means in your business. Hope, as Juan puts it, is not a strategy.

RAG Was the Answer. Now It’s a Symptom of the Real Problem.

RAG dominated for two years as the default way to give LLMs context. But as context windows expanded from 8K to a million tokens, the question shifted. This video breaks down when RAG still matters (vast, dynamic datasets and cost efficiency) and when long context windows make the retrieval layer unnecessary. The strategic implication for product teams: RAG was always a workaround for a deeper problem. The real question was never “how do I retrieve the right document?” It was “does my system actually understand my business?” That’s the context layer Juan Sequeda is building, and it sits beneath RAG, long context, and every other implementation detail.

In spite of the displacement signals, software engineer job postings are up 11% year over year. But read the fine print: a posting titled “Software Engineer” increasingly means “engineer who can operate LLMs in production” or “build RAG pipelines.” The title stayed the same; the job changed. If your team hasn’t redefined what “engineering” means in the context of AI-augmented workflows, you’re hiring for yesterday’s role.

Product Impact Resources

The pattern across these resources is consistent: the teams pulling ahead are the ones investing in context, knowledge, and governance infrastructure, not chasing the next model release. Capability is table stakes. The moat is how deeply your product understands the business it serves.

* Gartner predicts 80% of enterprises pursuing AI will use knowledge graphs by 2026 to enhance context and reasoning. The shift from “better prompts” to “structured knowledge” is no longer theoretical. The Role of Knowledge Graphs in Building Agentic AI Systems
* Microsoft adopted ontology as the foundation of its agentic AI architecture: Fabric IQ, Foundry IQ, and Work IQ create a semantic layer that gives agents shared business understanding. Microsoft Adopts Ontology-Based IQ Layer for Agentic AI
* Nathan Lasnoski argues that enterprise knowledge graphs are the foundation for moving from vibe coding to scalable agentic development, without semanti

The teams pulling ahead aren't the ones with the best models

AI products are shipping faster than ever. But shipping isn’t impact. The teams pulling ahead aren’t the ones with the best models; they’re the ones who can prove their product moves the business. This edition is about that gap. How to measure what matters, where the biggest barriers to impact are hiding, and what the latest research says about getting AI products to actually drive growth. Because the real competitive advantage isn’t AI. It’s knowing whether your AI is working.

What You’ll Learn in This Edition

This edition cuts through the noise to focus on the measurement gap: the difference between shipping AI and proving AI drives growth.

* The Power/Speed/Impact/Joy bullseye, a calibration framework for AI products that actually drive growth
* A Nature paper reveals why removing friction from AI may be destroying the learning your team needs
* John Maeda on why design teams are being hollowed out, and why PMs are next
* Benedict Evans on why even OpenAI can’t solve product-market fit with capability alone
* Research that should change how your team thinks about AI-assisted skill building

Episode 1: Why Your AI Metrics Are Lying to You - Framework for improving AI product performance

Your AI product might be fast, capable, and technically impressive, and still not drive the growth your business needs. In this episode, Brittany Hobbs and I introduce the Power, Speed, Impact, and Joy bullseye, a calibration framework borrowed from F1 racing. The teams winning aren’t shipping more features. They’re measuring different things entirely. We break down a three-layer eval approach and why most completion metrics are hiding the signals that matter.

“Success does not mean satisfaction. If someone stops engaging, does that mean they solved their problem, or that they were frustrated and left?” (Brittany Hobbs)

Listen on Spotify | Apple Podcasts | YouTube

Your Role Isn’t Shrinking. It’s Being Hollowed Out.

John Maeda: Three major tech companies have restructured design teams into “prompt engineering pods.” Maeda’s #DesignInTech 2026 calls it what it is: the elimination of design judgment from the product process. “When you replace a designer with a prompt, you don’t lose the pixels. You lose the questions that should have been asked before anyone opened a tool.” This applies to product managers too: if your PM’s job becomes prompt-wrangling instead of deciding what to build and why, you’ve automated the wrong layer. The roles aren’t disappearing. The judgment inside them is.

Featured Resource: Strategy for Measuring & Improving AI Products

The gap between what AI products ship and what they prove is where growth stalls. This framework moves teams from tracking activity (token counts, completion rates, session length) to defining and measuring the outcomes that actually drive business impact. Most teams ship features and assume engagement means success. It doesn’t. If your team can’t answer “is this AI feature making the business better?” with data, you’re flying blind. The framework covers product discovery through scale, with concrete steps for building measurement into your AI product from the start, not bolting it on after launch.

Read the full resource at ph1.ca

Waterfall: we’ll build you a car in 18 months. Agile: here’s a skateboard, we’ll iterate. AI: here’s a photorealistic render of a Lamborghini that doesn’t start. We’ve never made it easier to build something that looks incredible and does absolutely nothing. AI development doesn’t need more iteration; it needs someone asking “does this thing actually drive?”

If your team is celebrating demos instead of outcomes, you’re already behind the teams that measure first and ship second.

Two years of capability gains. Almost no reliability improvement. This is the chart that should be on every product team’s wall, because it explains why your AI demos brilliantly and fails in production. Capability without reliability isn’t a product. It’s a liability.

If your team can’t name which type of AI they’re building, they can’t measure whether it’s working. Six categories that force precision. (Narain Jashanmal)

Product Impact Resources

The resources in this edition make one thing clear: the teams investing in measurement and deliberate friction are pulling ahead, while the ones chasing capability are stalling. These resources challenge the assumption that faster and more capable automatically means better outcomes.

* Removing struggle from AI workflows destroys the learning that builds expertise. Teams should audit which friction to keep and which to cut. Against Frictionless AI (Inzlicht & Bloom in Nature)
* AI users learned 17% less without any efficiency gains. How your team uses AI matters more than whether they use it. How AI Impacts Skill Formation (Shen & Tamkin RCT)
* Two years of capability gains with only modest reliability improvement. The barrier to gr

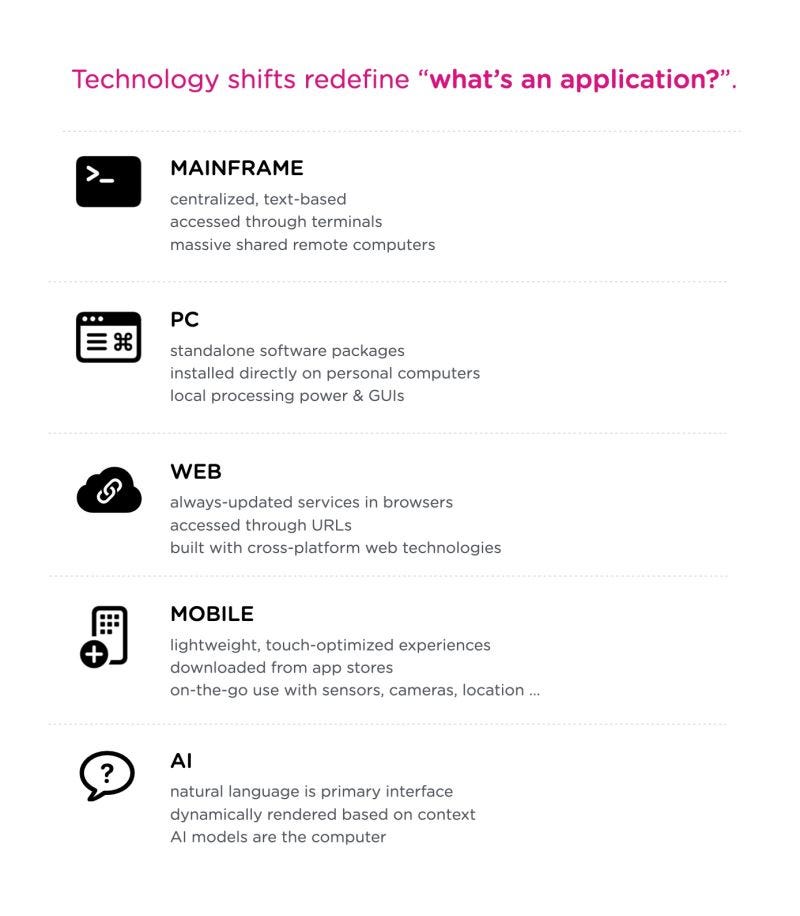

What Happens to Your Product When You Don’t Control Your AI?

AI was supposed to help humans think better, decide better, and operate with more agency. Instead, many of us feel slower, less confident, and strangely replaceable.

In this episode of Design of AI, we interviewed Ovetta Sampson about what quietly went wrong. Not in theory, but in practice. We examine how frictionless tools displaced intention, how “freedom” became confused with unlimited capability, and how responsibility dissolved behind abstraction layers, vendors, and models no one fully controls.

This is not an anti-AI conversation. It’s a reckoning with what happens when adoption outruns judgment.

Ovetta Sampson is a tech industry leader who has spent more than a decade leading engineers, designers, and researchers across some of the most influential organizations in technology, including Google, Microsoft, IDEO, and Capital One. She has designed and delivered machine learning, artificial intelligence, and enterprise software systems across multiple industries, and in 2023 was named one of Business Insider’s Top 15 People in Enterprise Artificial Intelligence.

Join her mailing list | Right AI | Free Mindful AI Playbook

Why 2026 Will Force Teams to Rethink How Much AI They Actually Need

The risks are no longer abstract. The tradeoffs are no longer subtle. Teams are already feeling the consequences: bloated tool stacks, degraded judgment, unclear accountability, and productivity that looks impressive but feels empty.

The next advantage will not come from adding more AI. It will come from removing it deliberately.

Organizations that adapt will narrow where AI is used: essential systems, bounded experiments, and clearly protected human decision points. The payoff won’t just be cost savings. It will be the return of clarity, ownership, and trust. This is going to manifest first with individuals and small startups who were early adopters of AI. My prediction is that this year they’ll start cutting the number of AI models they pay for, because the era of experimentation is over and we’re now entering a period where deliberate choices will matter more than how fast the model is. Read the full article on LinkedIn.

Do You Really Need Frontier Models for Your Product to Work?

For most teams, the honest answer is no.

Open-source and on-device models already cover the majority of real business needs: internal tooling, retrieval, summarization, classification, workflow automation, and privacy-sensitive systems. The capability gap is routinely overstated, often by those selling access.

What open models offer instead is control: over data, cost, latency, deployment, and failure modes. They make accountability visible again. This video explains why the “frontier advantage” is mostly narrative:

Independent evaluations now show that open-source AI models can handle most everyday business tasks (summarizing documents, answering questions, drafting content, and internal analysis) at levels comparable to paid systems. The LMSYS Chatbot Arena, which runs blind human comparisons between models, consistently ranks open models close to top proprietary ones.

Major consultancies now document why enterprises are switching: predictable costs, data control, and fewer legal and governance risks. McKinsey notes that open models reduce vendor lock-in and compliance exposure in regulated environments.

What Happens When “Freedom” Becomes an Excuse Not to Set Boundaries?

We’ve confused freedom with capability. If a system can do something, we assume it should. That logic dissolves moral boundaries and replaces responsibility with abstraction: the model did it, the system allowed it.

When no one owns the boundary, harm becomes an emergent property instead of a design failure.

What If AI Doesn’t Have to Be Owned by Corporations?

We’re going to experience a rise in AI experts challenging the expectation that Silicon Valley should control AI.

What if AI doesn’t need to be centralized, rented, or governed exclusively by corporate interests?

On-device models and open ecosystems offer a different future: less extraction, fewer opaque incentives, and more meaningful choice.

Follow Antoine Valot as he and Postcapitalist Design Club explore new ways of liberating AI.

Are We Using AI for Anything That Actually Matters?

Much of today’s AI usage is performative productivity and ego padding that signals relevance while eroding self-trust. We’re outsourcing thinking we are still capable of doing ourselves.

AI should amplify judgment and creativity. Use this insanely powerful technology to help you achieve greater outcomes, not to deliver a greater volume of subpar work to the world.

If We Know the Risks Now, Why Are We Still Acting Surprised?

The paper “The AI Model Risk Catalog” removes the last excuse.

Failure modes are documented. Harms are mapped. Blind spots are known.

Continuing to deploy without contingency planning is no longer innovation; it’s neglige

When AI Isn’t the Answer, It’s the Problem

In Episode 48 of the Design of AI podcast, we unpack why the most common AI promises are collapsing under real market pressure. AI was meant to unlock strategic work, expand opportunity, and elevate creativity. Instead, UX and design roles are disappearing, agencies are cutting creative staff while buying automation, and freelance work is being devalued as execution becomes cheap.

This episode is not about panic. It is about reality. Value still exists, but it is concentrating among those who can integrate AI into real systems, navigate ambiguity, and own outcomes rather than outputs.

🎧 Apple Podcasts | 🎧 Spotify

Key Insights About AI at Work

What the evidence shows once the optimism is removed.

* MIT Media Lab: ChatGPT Use Significantly Reduces Brain Activity (2025). Early AI use reduces attention, memory, and planning, weakening independent thinking when models lead the process.
* Wharton / Nature: ChatGPT Decreases Idea Diversity in Brainstorming (2025). AI-assisted brainstorming narrows idea diversity, producing faster output but more uniform thinking across teams.
* Science Advances / SSRN: The Effects of Generative AI on Creativity (2024). AI improves fluency and polish while consistently reducing originality and conceptual depth.
* arXiv: Human–AI Collaboration and Creativity: A Meta-Analysis (2025). Human-led AI collaboration improves quality slightly, but AI reduces diversity without strong framing and judgment.
* arXiv: Generative AI and Human Capital Inequality (2024). AI disproportionately benefits those with systems thinking and judgment, widening gaps between experts and generalists.

Realities of Being AI Early Adopters

The Raised Floor Trap by Hang Xu

AI makes baseline output easy. What it doesn’t make easy is integration, orchestration, or delivery inside real teams. Most people reach adequacy. Very few compound value. We’re not able to generate the type of value we’re sold on.

👉 Follow Hang Xu for insights about the realities and challenges of the job market

AI UX as a Growth Barrier

AI systems are far more capable than they appear, but their UX blocks growth. They don’t know how to help unless you know how to ask, structure, and specify intent. So even after hours of work trying to grow your AI abilities, you’ll often hit a ceiling because these systems can’t interpret our capabilities and gaps.

👉 Follow Teresa Torres for expert Product Discovery strategies and tactics.

Help Shape 2026

We’re planning upcoming episodes on career resilience, AI adoption, and where durable value still exists.

Take the 3-minute listener survey and tell us what would actually help you next year.

Which Skills Are Being Replaced by AI?

AI is not replacing jobs all at once. It is removing pieces of them.

Execution, summarization, and surface analysis are increasingly automated. What remains defensible are skills rooted in judgment, accountability, synthesis across messy contexts, and decision-making under uncertainty.

* Shira Frank & Tim Marple: Cubit - Task-Level Reality Check (2025). Cubit breaks jobs into discrete tasks, revealing where LLMs already substitute human labor and where judgment, context, and accountability still hold. It makes visible how roles erode gradually, not all at once.
* MIT Sloan: Why Human Expertise Still Matters in an AI World (2024). AI performs well in structured domains but consistently fails in ambiguity, ethics, and long-horizon tradeoffs. These limits define why senior expertise remains defensible, but only when it is exercised, not delegated.
* Harvard Business School: Why Judgment Remains a Competitive Advantage (2023). AI can generate options and recommendations, but it cannot own outcomes. Responsibility, consequence, and decision accountability remain human burdens and human moats.

Lots of News This Week

Copilot didn’t fail. It succeeded at the wrong thing.

Microsoft proved AI can clear security, compliance, and procurement at massive scale. But Copilot hasn’t changed behavior. Universal assistants optimize for adoption, not dependence.

🔗 https://www.linkedin.com/posts/adragffy_copilot-didnt-fail-it-succeeded-at-the-activity-7406719225714855936-G9H3

AI credit limits aren’t a pricing tweak. They’re a reckoning.

Credit caps expose the real problem. AI has marginal cost, and teams must now prove ROI per call, not ship more features.

🔗 https://www.linkedin.com/posts/adragffy_ai-activity-7407130709678567424-IzG-

AI trust is breaking faster than adoption.

AI chat logs expose identity, not transactions. Scale without support erodes trust, loyalty, and long-term value.

🔗 https://www.linkedin.com/posts/adragffy_llm-ai-customerexperience-activity-7408835025787461633-j56Y

AI ROI isn’t what Anthropic says it is.

Anthropic claims 80% of organizations have achieved AI ROI. They haven’t. They’ve reached table stakes. The report shows gains concentrated in efficiency, faster tasks, and internal automation, while only 16% reach end-to-end,

The Creativity Recession and Why Product Leaders Must Reverse It Now

Our latest guest is Maya Ackerman, AI‑creativity researcher, professor, and author of Creative Machines: AI, Art & Us (Wiley), as well as founder of WaveAI and LyricStudio (view recent collab with NVIDIA).

Maya’s perspective is not just insightful; it’s a necessary reality check for anyone building AI today. She challenges the comforting narrative that AI is a neutral tool or a natural evolution of creativity. Instead, she exposes a truth many in tech avoid: AI is being deployed in ways that actively diminish human creativity, and businesses are incentivized to accelerate that trend.

Her research shows how overly aligned, correctness-first models flatten imagination and suppress the divergent thinking that defines human originality. But she also shows what’s possible when AI is designed differently: improvisational systems that spark new directions, expand a creator’s mental palette, and reinforce human authorship rather than absorbing it.

This episode matters because Maya names what the industry refuses to admit. The problem is not “AI getting too powerful,” it’s AI being used to replace instead of elevate. Businesses are applying it as a cost-cutting mechanism, not a creative amplifier. And unless product leaders intervene, the damage to creativity, and to the people who rely on it for their livelihoods, will become irreversible.

Listen to the Episode on Spotify, Apple Podcasts, YouTube

We’re engineering a global creative regression and pretending we aren’t.

Generative AI could radically expand human imagination, but the systems we deploy today overwhelmingly suppress it. The literature is unequivocal:

* AI boosts creative output only when tools are intentionally designed for exploration, not correctness.
* When aligned toward predictability, AI drives conformity and sameness.
* The rise of “AI slop” is not an insult; it’s the logical outcome of misaligned incentives.
* New evidence shows that AI-assisted outputs become more similar as more people use the same tools, reducing collective creativity even when individual outputs look “better.”
* Homogenization is measurable at scale: marketing, design, and written content generated with AI converge toward the same tone and syntax, lowering engagement and cultural diversity.
* Repeated reliance on AI weakens human originality over time: users begin outsourcing ideation, losing confidence and capacity for divergent thought.

Resources:

* The Impact of AI on Creativity: https://www.researchgate.net/publication/395275000_The_Impact_of_AI_on_Creativity_Enhancing_Human_Potential_or_Challenging_Creative_Expression
* Generative AI and Creativity (Meta-Analysis): https://arxiv.org/pdf/2505.17241
* AI Slop Overview: https://en.wikipedia.org/wiki/AI_slop
* Generative AI Enhances Individual Creativity but Reduces Collective Novelty: https://pmc.ncbi.nlm.nih.gov/articles/PMC11244532/
* Generative AI Homogenizes Marketing Content: https://papers.ssrn.com/sol3/Delivery.cfm/5367123.pdf?abstractid=5367123
* Human Creativity in the Age of LLMs (decline in divergent thinking): https://arxiv.org/abs/2410.03703

BOTTOM LINE: If your product optimizes for correctness, brand safety, and throughput before originality, you are actively contributing to the global collapse of creative quality. AI must be designed to spark, not sanitize, human imagination.

Award-winning creative talent is disappearing at scale, and the trend is accelerating.

The global creative workforce is shrinking faster than at any time in modern history. Companies claim AI is “enhancing creativity,” yet most restructuring reveals the opposite: AI is being deployed primarily to cut labor costs. Overall, layoff announcements have topped 1.1 million this year, the most since the 2020 pandemic.

What’s happening now:

* Omnicom announced 4,000 job cuts and shut multiple agencies. Reuters reporting: https://www.reuters.com/business/media-telecom/omnicom-cut-4000-jobs-shut-several-agencies-after-ipg-takeover-ft-reports-2025-12-01/
* WPP, Publicis, and IPG executed multi-round layoffs across design, writing, strategy, and production.
* Digiday interviews confirm AI is used mainly to eliminate junior and mid-level creative roles: https://digiday.com/marketing/confessions-of-an-agency-founder-and-chief-creative-officer-on-ais-threat-to-junior-creatives/

The most important read on the future and destruction of agencies comes from Zoe Scaman. She always holds up a powerful and necessary mirror to the shitshow that is the modern corporate world.

Freelancers and independent creatives are being hit even harder:

* UK survey: 21% of creative freelancers already lost work because of AI; many report sharply lower pay. https://www.museumsassociation.org/museums-journal/news/2025/03/report-finds-creative-freelancers-hit-by-loss-of-work-late-pay-and-rise-of-ai/
* Illustrators, motion designers, and concept artists report declining commissions as clie

The Real Reason Tech Products Fail

Our latest episode features Jessica Randazza Pade, Head of Brand Activation & Commercialization at Neurable. Named to Campaign US’s 40 Over 40 and ELLE Magazine’s 40 Under 40, Jessica is an award-winning global digital marketer, business leader, and storyteller. She explains why AI is not a value proposition, how to turn vague use cases into measurable outcomes, and why making technology invisible is often the strongest competitive advantage.

“If the user can’t articulate what’s different in their life because of your product, you’re selling a vitamin, not a painkiller.”

Listen on Apple Podcasts | Spotify

Shape Our 2026 Research

We’re mapping where teams are struggling with AI adoption and what tools, frameworks, and support they need in 2026. Your input directly shapes our annual research and the topics we cover.

Take the survey → https://tally.so/r/Y5D2Q5

AI has lowered the cost of prototyping but raised the bar for adoption. Most AI products fail because they launch demos instead of durable workflows, rely on large models where small ones would work better, ignore trust, or sell “time savings” instead of business outcomes. Organizations resist tools that feel risky, inaccurate, unproven, or misaligned with real workflows. Complicated architecture, poor UX, weak personalization, and unclear ROI all compound the problem. Here’s a sample of it:

* #3: Your product doesn’t actually learn. Fake personalization destroys trust.
* #4: One hallucination can end adoption permanently.
* #8: “Saving time” is not a business case; outcomes are.
* #11: Organizational silos suffocate AI products.
* #17: Without a workflow and measurable ROI, you don’t have a product.

AI will not save your product. Only reliability, trust, workflow clarity, governance readiness, and measurable value delivery will.

Read the full article → https://ph1.ca/blog/why-your-AI-product-will-fails

The Year of AI Value

This video covers why 2026 marks a turning point where AI is judged not by novelty or intelligence but by measurable ROI, workflow impact, and operational reliability. It explains why businesses are shifting from “AI features” to fully redesigned AI-enabled systems.

We are past the point of buying AI based on promises

AI buyers no longer invest because the tech is impressive. They invest when it:

* delivers measurable ROI
* reduces operational and compliance risk
* integrates into existing workflows
* produces consistent results
* overcomes organizational resistance and silos

If you’d like us to create a full episode on why AI products fail, add a comment to this post.

The AI Adoption Curve Is About to Flip

This video explains how organizations are moving from experimentation to structural integration, redesigning roles, responsibilities, and workflows around AI. It also highlights early signals that distinguish “tool usage” from true operational adoption.

Featured Thinker: Stuart Winter-Tear

This week we’re spotlighting the insightful work of Stuart Winter-Tear, founder of Unhyped. His writing reframes LLM inconsistency as a reflection of the chaotic and contradictory data ecosystems they’re trained on, challenging assumptions about rationality, coherence, and system behavior.

LinkedIn | Substack

Featured Reads

1. The GenAI Divide: Why 95% of enterprise GenAI projects fail. MIT’s 2025 State of AI in Business report finds that 95% of GenAI pilots generate no measurable ROI, mainly due to lack of workflow integration and unclear value metrics. https://mlq.ai/media/quarterly_decks/v0.1_State_of_AI_in_Business_2025_Report.pdf

2. Apple Mini Apps and the new distribution frontier. Greg Isenberg outlines how Apple Mini Apps may redefine onboarding, distribution, and reach across the entire consumer ecosystem. https://x.com/gregisenberg/status/1989341460894711838

3. Calum Worthy’s “2wai” and the ethics of selling the unimaginable. The actor launched an app enabling people to generate AI avatars of deceased relatives, a revealing look at how AI now commercializes ideas once considered unthinkable. https://www.businessinsider.com/calum-worthey-2wai-ai-dead-relatives-app-launch-2025-1

4. The Complete Guide to Building with Google AI Studio. Marily Nika provides a comprehensive, practical guide to building production-ready applications with Google’s AI ecosystem.

5. SNL’s Glen Powell AI Sketch: When satire becomes a warning. The Atlantic unpacks how SNL’s AI sketch captures the cultural moment, where AI shifts from hype to comedic critique, signaling deeper public skepticism. https://www.theatlantic.com/culture/2025/11/snl-glen-powell-ai-sketch/684944/

Coming Up on the Podcast

Our upcoming guests include:

* Ovetta Sampson, Chief Human Experience Officer & AI Design leader: https://www.ovetta-sampson.com/
* Dr. Maya Ackerman, Generative AI researcher and creativity systems expert: https://maya-ackerman.com/
* Leonardo Giusti, Ph.D., Head of Design, Archetype AI: https://www.archetypeai.io/

If you haven’t participated yet, please take our 2026 survey and help shape where our research goes next: https://tally.s

Designing Agents That Work: The New Rules for AI Product Teams

Our latest episode explores the moment AI stops being a tool and starts becoming an organizational model. Agentic systems are already redefining how work, design, and decision‑making happen, forcing leaders to abandon deterministic logic for probabilistic, adaptive systems.
“Agentic systems force a mindshift—from scripts and taxonomies to semantics, intent, and action.”
🎧 Listen on Spotify | 🍎 Listen on Apple Podcasts
And if you want to go deeper, check out Kwame Nyanning’s book, Agentics: The Design of Agents and Their Impact on Innovation. It’s the definitive field guide to designing agentic systems that actually work.
Most striking for me was the discussion of how we need to move from pixel-perfect to outcome-obsessed. Designers and product teams have for so long been obsessed with delivering the output; now it is time to be most concerned with the impact on customers.
The hard truth: Most organizations are trying to graft AI onto brittle systems built for predictability. Agentic design demands something deeper: ontological redesign, defining entities, relationships, and intents around customer outcomes, not internal structures. If you can’t model intent, you can’t build an agent.
Key takeaway: Intent capture is the new UX. Products that succeed will anticipate user context, detect discontent, and adapt autonomously.
Featured Articles: Where Reality Collides with Ambition
AI Has Flipped Software Development — Luke Wroblewski
Wroblewski lays out how AI has upended the software stack. Interfaces now generate code. Designers define the logic while engineers review and govern it. The result? Faster cycles but a dangerous illusion of progress. Design intuition becomes the new compiler, and prompt literacy replaces syntax. The real risk is velocity without comprehension; teams ship faster but learn slower.
Takeaway: Speed isn’t the problem; blind acceleration is. 
Governance, evaluation, and feedback loops are now design disciplines.Agentic Workflows Explained — The Department of ProductThis piece exposes what it really takes to build functioning agents: memory, planning, orchestration, cost control, fallback logic. If your “agent” doesn’t break, it’s probably not learning. Resilient systems require distributed cognition, agents reasoning and retrying within boundaries. Evaluation‑first design becomes the only safeguard against chaos.Takeaway: If your agent never fails visibly, it’s not thinking deeply enough. Failure is how agents learn.Featured Videos: Cutting Through the NoiseThis viral video sells the dream—agents at the click of a button. The reality? Building bots has never been easier, but building agents remains brutally hard. Real agents need long‑term memory, adaptive interfaces, and feedback loops that learn from success and failure. Wiring APIs is not design; it’s plumbing. Until agents can reason, reflect, and recover, they’re glorified scripts.Reality check: The tools are improving, but the discipline is not.A rare honest take. This one focuses on the HCI, orchestration, and reliability problems that still plague agentic systems. We’re close to autonomous task completion, yet nowhere near persistent agency. The real challenge isn’t autonomy—it’s alignment over time.Takeaway: Advancement is fast, but coherence is slow. Designing for recovery and evaluation is the new frontier.Join Our Next WorkshopIf you want to turn these insights into action, join our upcoming Disruptive AI Product Strategy Workshop. You’ll learn how to pressure‑test AI ideas, model agentic systems, and build products that survive beyond the hype. There’s a special 2‑for‑1 offer at the link—bring a teammate and cut the noise together.Recommended Resource: AI & Human Behaviour — Behavioural Insights Team (2025)BIT’s report is a must‑read for anyone designing human‑in‑the‑loop systems. 
It dissects four behavioural shifts: automation complacency, choice compression, empathy erosion, and algorithmic dependency.Their experiments reveal that AI assistance can dull cognition—users who relied most on recommendations learned less and questioned less. They also found that friction builds trust; brief pauses and explanations improved comprehension and retention. The killer insight? Transparency alone doesn’t work. People often overestimate their understanding when systems explain themselves.Takeaway: Don’t make users “trust AI.” Make them verify it. Design friction that protects judgment.Recommended Reads: What to Study Next* Computational Foundations of Human‑AI Interaction — Redefines how intent and alignment are measured between humans and agents.* Understanding Ontology — “The O-word, “ontology” is here! Traditionally, you couldn’t say the word “ontology” in tech circles without getting a side-eye.”* The Anatomy of a Personal Health Agent (Google Research) — A prototype for truly personal, proactive AI systems that act before users ask.* What is AI Infrastructure Debt? — Why ignoring the invisible architecture behind agents is the next form of

Play, Prompts, and the Perils of Incrementalism

In our latest episode, Michelle Lee (IDEO Play Lab) makes the case that play unlocks the next billion-dollar AI market. She reminds us that kids don’t stop at answers—they ask what if and turn shoes into cars or planes. That divergent mindset is exactly what product teams have lost.“Play is one of the best ways to challenge the norms, to think wide, imagine new possibilities.”Michelle shares:* How IDEO discovered billion-dollar opportunities (like PillPack, later acquired by Amazon) by staying curious.* Why teams should sometimes use older, glitchier versions of AI tools, because the “mistakes” spark better ideas.* Why incrementalism burns teams out and how designing for attitudinal loyalty beats chasing short-term metrics.🎧 Listen here → Play unlocks the next billion-dollar AI marketUncomfortable Truth: Most “AI strategies” today are adult strategies — converging too quickly, chasing predictability, and mistaking incremental progress for innovation. That’s why the breakthroughs are happening elsewhere.Product Workshop: Find your Disruptive PathIf your roadmap looks like everyone else’s, you’re already behind. Our next AI Product Strategy Workshop (Oct 30) is built for teams who want to:* Go beyond features and efficiency to discover truly disruptive opportunities.* Use LLMs as intelligent sparring partners to pressure-test fragile ideas before they waste time and budget.Spots are limited → Register hereHard-Cutting Take: If your roadmap reads like your competitors’, it’s not strategy—it’s risk management dressed up as vision.Incrementalism is the Silent KillerWe’ve all felt it: the slow grind of incremental product decisions that look safe but quietly kill ambition. My new piece argues that incrementalism is the silent killer of AI products—a trap for teams rewarded for predictability instead of progress.Read it on LinkedIn → Incrementalism is the Silent Killer of AI ProductsUncomfortable Truth: Incrementalism feels safe because it rarely fails spectacularly. 
But it guarantees mediocrity—and in AI, mediocrity is indistinguishable from irrelevance.
AI Launches to Watch
A wave of new releases will reshape how we design and ship AI products:
* OpenAI: Stripe/Shopify integrations + new pre-designed prompts for professionals.
* Anthropic: Chrome plugin + Claude Sonnet 4.5, a faster, cheaper model that expands prototyping and evaluation capabilities.
* OpenAI Sora 2: Newly launched today, unlocking endless possibilities for video and creative storytelling, signaling a profound shift in how generative tools will shape the creative industries.
These aren’t just upgrades—they’re reshaping commerce and the browser itself. The integration of Stripe and Shopify signals AI’s deepening role in transactions, while Anthropic’s Chrome plugin points to a future where the browser becomes a true intelligent workspace. It’s likely why Atlassian just acquired The Browser Company (maker of Arc and Dia). These moves aren’t incremental improvements; they’re like a rushing river, pushing the entire industry forward whether teams are ready or not.
The next frontier isn’t who has the biggest model—it’s who controls the browser as the operating system for work. And when we look beyond that, it will be who controls our real-world experiences… (more on that soon with an upcoming guest)
When Projects Go Off the Rails
Even as the models improve, they’re only as good as the prompts and evaluations behind them. We’ve seen how easily “comprehensive business cases” collapse when fabricated ROI, vendor costs, and timelines are passed off as fact.
It’s the Wizard-of-Oz problem: behind the curtain, most AI projects are stitched together with fragile assumptions.
Uncomfortable Truth: Most AI decks aren’t strategy—they’re theater. 
And like any stage play, the curtain eventually falls.Hidden Pitfalls of AI Scientist SystemsA new paper, “The More You Automate, the Less You See: Hidden Pitfalls of AI Scientist Systems” (arXiv, Sep 10, 2025), warns about the risks of fully automated science pipelines. By chaining hypothesis generation, experimentation, and reporting end-to-end, teams risk producing results that look authoritative but mask invisible errors and systemic failures. (arxiv.org)Uncomfortable Truth: Automation without visibility doesn’t accelerate discovery—it accelerates blind spots.Articles & Ideas We’re Tracking* Prompts.chat → A growing open library of prompt patterns that shows why better prompt design, not just better models, is becoming the key differentiator for teams.* AI in the workplace: A report for 2025 (McKinsey) → McKinsey highlights that while adoption is accelerating, most organizations hit cultural and skills barriers long before technical ones.* The Architecture of AI Transformation (Wolfe, Choe, Kidd, arXiv) → This 2×2 framework shows why most companies get stuck in incremental “legacy loops” rather than unlocking transformational human-AI collaboration.* TechCrunch: Paid raises $21M seed to pioneer results-based billing with AI

AI Product Strategy FAQ, Minus the Bullsh*t

Our latest episode features Nicholas Holland (SVP of Product & AI at HubSpot) and explains how AI is actually changing go-to-market teams:* AI cuts rep research time and turns calls into structured insight* “AI Engine Optimization” (AEO) is becoming the new SEOThis conversation isn’t speculative—it’s a blueprint. Listen to Episode 42 on Apple Podcasts🚨 Upcoming Workshop: Sept 18 — AI Product Strategy for Realists Use promocode pod30 at checkout to get 30% off your registration!Join us for a live 90-minute workshop that goes beyond the hype. We’ll walk through real frameworks, raw mistakes, and how to make AI product strategy actually work—for small teams, scale-ups, and enterprise leaders.👉 Save your seat nowAI Product Strategy FAQ, Minus the Bullsh*tOver the past few months, we’ve been collecting the most common—and most misunderstood—questions about AI product strategy. What we found were recurring patterns of confusion, hype, and hope. This article breaks down those questions one by one with honest answers, uncomfortable truths, and hard-won lessons from teams actually building and shipping AI products.Each section includes:* A blunt reality check (“Uncomfortable Truth”)* A strategic lens for tackling it* A sticky insight to anchor your messaging* A practical takeawayThis is not a “how AI works” explainer. This is how to make it useful—inside a real product.Q1: How do we choose the right use case for AI in our product that actually delivers value?Uncomfortable Truth: The best use cases might be internal—not flashy or customer-facing. If you’re just “adding AI” for the optics, you’re already off-track.Strategic Frame: Don’t chase the cool feature—hunt down the messiest workflow and blow it up.Always Remember: Your AI should solve a problem your users complain about—not a problem your team finds interesting.Research This: Map the top 10 recurring tasks inside your product (or across your internal ops). 
Which of them have the highest time cost and lowest user satisfaction? That’s your AI opportunity space.Real Example: Altan (natural language app builder); internal fraud detection automation; AI for helpdesk triage.Takeaway: Pick the ugliest, least scalable problem your users hack around with spreadsheets. Then automate that.Q4: How do we handle data privacy and ethics when integrating AI features?Uncomfortable Truth: Most tools don’t offer true privacy—they use your data to train their models. That’s not a technical flaw—it’s a business choice.Strategic Frame: If trust is central to your brand, bake it into the infrastructure. Build sandboxes. Offer guarantees. Publish your governance.Always Remember: You don’t get to ask users for their data and their forgiveness.Research This: Ask your legal, compliance, or procurement partners what requirements would be non-negotiable for adopting a third-party AI tool. Then apply those to your own product.Example Guidance: Make “zero training from user data” a tiered feature—or your default.Takeaway: If you’re targeting enterprise buyers, your AI feature won’t get through procurement unless you have strict privacy toggles and a clear usage log.Q5: How do we measure the success of AI features in a product?Uncomfortable Truth: More engagement doesn’t always mean more value. In AI, time spent might mean confusion—or masked frustration. People may feel delight and friction in the same moment, and without qualitative research, you won’t know which signal you’re shipping.Strategic Frame: Define one high-value outcome. Build just enough UI to validate whether users reach it.Always Remember: Don’t just watch what users do—listen for what they expected to happen.Research This: Run a usability test where you ask users to explain what they expect the AI feature to do before using it—then again after. 
Once you've delivered an output that surprises them, ask them what outcomes it enables.Takeaway: In a contract automation tool, the success metric isn’t “time in app”—it’s “first draft accepted with zero edits.” That’s your true win signal.Q6: What’s the best way to communicate AI capabilities to non-technical stakeholders or users?Uncomfortable Truth: AI isn’t novel anymore—outcomes are.Strategic Frame: Sell transformation, not tech. Show how life is better with the tool than without.Always Remember: Once someone experiences the magic, it doesn’t matter what powers it.Research This: Ask 5 users to explain your AI feature to a friend, using their own words. Their phrasing will tell you how clearly the value lands—and what metaphors or language they trust.Examples:* GlucoCopilot: Turns data chaos into peace of mind.* Flo: Makes symptom tracking feel intuitive and empowering.* Lovart: Auto-generates brand kits from a single prompt.Takeaway: Everyone’s building outputs. You win by delivering outcomes. Spreadsheets are useful to power users—but most people just want the insight and what to do next. AI should skip the formula and deliver the finish line.Q7: How do we monetize AI in a wa

The End of Product Teams as We Know Them

🎙️ Listen on Spotify | Apple Podcasts | YouTubeI recently spoke with Maor Shlomo, founder of Base44—the platform that lets anyone build apps, tools, and games just by describing them to an AI. In six months, he built Base44 solo and sold it to Wix for $80M. It’s the clearest signal yet: the rules of building have changed, and most teams aren’t ready.We dug into:* Why vibe coding crushes the myth that innovation requires big teams and big funding.* How cross-domain generalists will thrive while narrow specialists get sidelined.* Why software that doesn’t become agent-driven will be left for dead.* The ruthless advantage of starting over quickly when the build cost is near zero.Maor’s blunt take: “If one person can go this far alone, do we need whole teams to achieve the same things?”🎧 Full episode: Listen on SpotifyThanks for reading Design of AI: Strategies for Product Teams & Agencies! This post is public so feel free to share it.The uncomfortable truth: Interfaces are vanishingVibe coding strips away menus, clicks, and UIs. You speak, and the machine builds. The UX profession must decide—adapt to this new layer of interaction, or watch relevance slip away.* Speak ideas, skip interfaces.* Abstraction layers are collapsing.* Creation is now a conversation.🔗 Read the full post on LinkedIn📅 AI Product Strategy Workshop — Register hereThis isn’t a “future of work” talk. It’s a hands-on reality check.* Spot where AI will gut existing workflows—and where the real opportunities lie.* Pressure test your product strategy against the agent-driven future.* Learn how to pivot faster than incumbents weighed down by legacy.If you think you can wait this out, you’ll already be too late.There’s a 2-for-1 deal right now using this link.* SSRN study: AI is already displacing workers across industries.* Challenger, Gray & Christmas: 10,000+ AI-driven layoffs in the first seven months of 2025.* World Economic Forum: up to 30% of U.S. 
jobs could be automated by 2030.* Anthropic CEO Dario Amodei: “Half of entry-level white-collar jobs may disappear, pushing unemployment to 10–20% within five years.” ([Axios](https://www.axios.com/2025/05/28/ai✍️ I recently published Navigating Contradictions: A Manifesto for Product Teams in an Era of Change.In it, I confront the contradictions head-on: speed vs. depth, AI optimism vs. ethical risk, innovation vs. trust. Teams that refuse to wrestle with these tensions won’t survive.Key line: “Product teams must learn to hold space for competing truths—where speed and discovery coexist with responsibility and depth.”🔗 Read the full article on MediumAgencies and consultancies have thrived on labor arbitrage. That arbitrage just died. As AI agents mature, they won’t just support consultants—they’ll cannibalize them. The uncomfortable truth: if your business model depends on armies of analysts or designers, you’re already obsolete.🔗 Read the full post on LinkedIn👉 What do you think?I’ll admit it: I once wrote vibe coding off as a gimmick. Now, I see it as the end of UI as we know it. Every interface has been an abstraction—an awkward compromise between human thought and digital execution. Those compromises are being stripped away at speed.The uncomfortable truth? The gap between an idea and a product is collapsing. That means fewer roles, fewer gatekeepers, and a brutal shift in how work gets done.Have you tried vibe coding? Does it excite you, scare you—or both? Reply and let’s talk. This is a public episode. If you would like to discuss this with other subscribers or get access to bonus episodes, visit productimpactpod.substack.com

From AI as Tool to AI as Teammate: Lessons from Atlassian & What’s Next for Product Leaders

🎙 Episode 40: Atlassian’s Secrets to Successful AgentsIn this episode, Jamil Valliani (VP & Head of Product AI at Atlassian) shares how they embed AI across Jira, Confluence, and Trello through intelligent agents that blend into workflows—far from mere “+AI” buttons. He emphasizes starting small with tangible prototypes to build momentum and leadership alignment, showing that AI gains stick when they're experienced, not explained.Highlights from the episode:* Hands-on AI adoption at Atlassian: transforming workflows, not just products* From friction to flow: how prototypes bridge skepticism and trust* AI as teammate, not feature—designing for collaboration, not automation* Adoption baked into experience—make AI habitual, not optional“The most successful teams will treat AI not as a button you press, but as a teammate you collaborate with.”Listen on Spotify | Listen on Apple | Watch on YouTube —and share one workflow where AI acting more like a teammate could unlock unexpected value.About the Guest:Jamil Valliani brings two decades of product leadership (including 15 years at Microsoft) to Atlassian, where he’s spearheading AI-powered design.* LinkedIn* Atlassian RovoUpcoming Workshop: AI Product StrategyProduct teams everywhere are facing the same challenge: leadership wants AI integration for competitive advantage, but without certainty about which AI products will actually be valuable to customers.When: Thursday, September 18, 2025 (online)What you’ll gain:* Diagnose the highest-leverage AI use cases* Prototype with precision—avoid costly detours* Craft a resilient strategy that scales beyond pilot phaseRegister on Eventbrite and get a 2 for 1 promo.Learn to Synthesize or ElseIn a world awash with data, the real advantage lies not in knowing more—but in drawing clarity from the noise. 
Product and design leaders must become the translators of complexity, turning abundant knowledge into purposeful, actionable insight.h/t Stuart Winter TearEmerging Shift: Role-Dissolving AIFigma, OpenAI, and others are signaling a paradigm shift: AI is merging design, engineering, and research into a unified discipline. The competitive edge now lies in craft, judgment, and cross-disciplinary fluency—not siloed specialization.AI Merging Tech Roles, Favoring Generalists: Figma CEO Dylan FieldFeatured Video: Why Designers & Engineers Must Rethink Workflows for AI to Deliver Real ValueThis video pressurizes teams to question legacy workflows. Without overhauling collaboration models, decision-making structures, and design intent, even advanced AI remains misunderstood or underleveraged.Research To Reframe Your Strategy1️⃣ Mixture of Reasoning (MoR)Why it matters: LLMs can be trained to switch between reasoning styles—stepwise logic, analogies, symbolic reasoning—without prompt engineering.Strategy shift: Build assistants that adapt reasoning to task: planning one moment, diagnosing the next.Quick test: A/B fixed vs. adaptive reasoning in support/search flows to spot gains in mixed-query handling.2️⃣ In-Context Learning as Implicit Weight UpdatesWhy it matters: Transformers tweak their own behavior on-the-fly based on prompt context—no retraining required.Strategy shift: Enable products to adapt within interaction sessions, not over multiple deploy cycles.Quick test: Prototype context-aware replies and monitor when users feel seen vs. served.3️⃣ Chain-of-Thought (CoT) MonitorabilityWhy it matters: Exposing AI’s reasoning steps helps catch misalignment before it reaches users—but this safety window is fragile.Strategy shift: Don’t equate explanation with trust. 
For high-stakes domains, embed traceability and risk alerts.
Quick test: Add CoT transparency to the UX and measure user trust shifts when the rationale is visible.
Follow my co-host, Brittany Hobbs, for essential research and product insights news.
Your Next Challenge
Most teams drop AI into their products like sprinkles on a cupcake. But strategy—true product strategy—demands AI baked into the experience, from the core outward.
Reply here or email me.
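The "Quick test" ideas in the research notes above (for example, A/B testing fixed versus adaptive reasoning in support flows) come down to comparing a success rate between two variants. Here is a minimal sketch of that comparison using a standard two-proportion z-test. The function name, the notion of a "resolved session", and the sample counts are all hypothetical and chosen only for illustration; none of them come from the episode or the cited research.

```python
import math


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Compare success rates of variant A (control) and variant B (treatment).

    Returns (rate_b - rate_a, z statistic, two-sided p-value).
    All inputs are plain counts, e.g. resolved sessions out of total sessions.
    """
    if total_a <= 0 or total_b <= 0:
        raise ValueError("each variant needs at least one session")

    rate_a = success_a / total_a
    rate_b = success_b / total_b

    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (success_a + success_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))

    # If every session succeeded (or failed) in both variants, there is no signal.
    if std_err == 0:
        return rate_b - rate_a, 0.0, 1.0

    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the normal distribution: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_b - rate_a, z, p_value


# Hypothetical counts: 400 sessions per variant, "resolved without escalation".
lift, z, p = two_proportion_z_test(120, 400, 150, 400)
print(f"lift={lift:.3f}, z={z:.2f}, p={p:.4f}")
```

A small p-value only says the difference is unlikely to be noise. It says nothing about whether users reached the outcome they actually wanted, so a test like this is best paired with the qualitative checks described above.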

The Risks & Research of Over Reliance on AI

After a frustrating week of trying to wrangle AI outputs, we decided to explore the risks of overreliance on AI. It’s good for us to question our tools. It enhances our processes and challenges us to find the right tools.
Listen on Spotify | Listen on Apple Podcasts | Watch on YouTube
In this episode, we say the quiet parts out loud. Not only are LLMs often feeding us incorrect information, but over-trusting these systems poses a serious risk.
We can look at this Rolling Stone article headline and immediately laugh it off. It is insane to believe this will happen to anyone we know. However, in Mark Zuckerberg’s vision of the AI future, your friends will be bots. The loneliness epidemic is real. One in three Americans feels lonely every week. Data from Harvard’s Making Caring Common Project suggests that loneliness is tied to rising anxiety and to a feeling of not being part of this country, and that it is about more than social isolation. 65% of respondents blame “our society,” pointing to a lack of confidence in our way of life and institutions.
So, it should be no surprise that Harvard Business Review found that the top three use cases of 2025 involved loneliness and navigating life's stresses.
AI could quickly become the next addiction for a world desperate for solutions. The fact that there’s demand for robo-companionship shouldn’t be treated as validation for building more tools to disassociate people from life. 
Let’s go back to exploring this topic from the perspective of business users.
Understanding GenAI’s Productivity Gains
As we barrel into the AI-powered era, we can take one of two perspectives:
* GenAI products are the next evolution of SaaS: Precise tools for specific workflows
* LLMs are the next evolution of social media, where instead of degrading our interpersonal relationships, AI will addict us to easy and often incorrect information
The majority of the research identifies productivity gains and time savings, which would support the view of GenAI as a professional advantage. But when you dig into the data, there are concerns.
Many of the studies are funded by Microsoft, OpenAI, and Google, like this one showing that GitHub Copilot users completed tasks 55.8% faster than the control group. While that result was impressive, participants were being assessed on their ability to complete a very basic task. The paper’s results were boosted by showing that people with less experience benefit more from coding assistants, something that should worry anyone concerned about being replaced by cheaper talent who are boosted by AI.
But these results were contradicted by a separate study where no DevOps productivity gains were found from using the same GitHub Copilot. That study found the code quality to be poor, leading to a 41% increase in bugs!
Remember that GitHub is owned by Microsoft and that Copilot is powered by OpenAI’s models.
This contradictory data highlights a paradox of GenAI: The technology is increasingly successful at the basic task level, but shouldn’t be over-relied on to do our work for us. This Danish study hammers that point home: Time saved by AI offset by new work created. If we shake ourselves out of the AI hype stupor, we can critically examine the current state of LLMs more like SaaS tools. 95% of SaaS tools available won’t help you and your business. 
Once you find the right tool for your hyper-specific use case, the AI product’s success will depend on its implementation and the first-party data entered into it.
More Research about Using AI
Behavioural research about AI should be considered a counterpoint to the benefits of AI. Yes, leveraging GenAI will yield productivity gains in specific circumstances. But the technology also brings risks and considerations that should be built into the design and business case during “should we build this” discussions.
* Increased AI use linked to eroding critical thinking skills
* When experiencing time pressures, we’re more susceptible to misinformation
* AI systems are already capable of deceiving humans
* People Facing Life-or-Death Choice Put Too Much Trust in AI
* AI’s Trust Problem: Twelve persistent risks of AI that are driving skepticism
Catch up on Recent Design of AI Episodes
31. AI is Disrupting Architecture and Lessons for Digital Product Teams
Guest Matthew Krissel (FAIA) explores how AI is reshaping architectural design and what digital product teams can learn about process, creativity, and scale from the built environment.
30. Take Control of AI’s Predictive Power – Tyler Hochman, Forethought
Tyler Hochman shares how businesses can operationalize AI for forecasting and insights by targeting high-value, repeatable problems and unlocking underutilized data.
29. Trust is a Double‑edged Sword: AI will Transform Services – Sarah Gold, Projects by IF
Sarah Gold explains how AI changes our relationship with services and why it’s urgent to rethink trust, transparency, and accountability in product design.
28. AI will Transform Pro

AI Promises us More Time. What Should we do With it?

When reports like Adecco’s Global Workforce of the Future survey find that the average saving for workers using AI is 1 hour a day, we should question this.
* What did those workers do with their time savings?
* Should those time savings benefit the employer or the employee?
* Can we trust such a hard-to-measure stat?
Our latest episode tackles this and other disruptions happening to the creative and production processes. Matthew Krissel is the Co-Founder of the Built Environment Futures Council and a Principal at Perkins&Will. For over two decades, he has led transformative architectural projects across North America and internationally. We discussed how AI is disrupting architecture and lessons for digital product teams. Throughout our conversation, he made powerful points about questioning the role of time and permanence in a world where we want more, faster.
Other points covered in the conversation:
* Commoditizing design makes production easier, enabling societies to tackle challenges like housing shortfalls
* Commoditizing design devalues other vital processes, like community engagement, respectful place-making, and longevity of projects
* Over-indexing AI’s potential as a workflow optimizer, while under-indexing the potential to reimagine how complex projects are planned and operationalized
Listen on Spotify | Listen on Apple Podcasts
In this newsletter, I’d like to tackle the concept of time saving and what it means from the perspective of crafting an AI strategy. Here was the most important quote from the episode: So just because something took half the time it did before, what happened is we just did more. So we just filled the time. Is there something higher and better use? I suspect that somewhere along the line the designs got better. Also I suspect that somewhere along there was diminishing returns. We were just doing more because we could not that it was actually yielding anything better. 
Are you gonna focus on fewer, but better increase your quality? Are you going to spend more time on business development or some entrepreneurial side hustle? Just go home early? What you decide to do as we start to gain productivity time is going to shape a lot of where this is all happening.Newsletter recommendation: Scott BelskyEssential insights and lessons from Scott Belsky that anyone building with AI must read. His newsletter is fantastic and a must-subscribe because of his unique cross-section of expertise across creativity, product, and innovation. His books have also always been pivotal reads to advance your craft. Hopefully, we can do some of the same with our Design of AI podcast and newsletter. Who should benefit most from your ability to learn AI: You or your employer?The challenge to creatives and builders is to decide who should benefit from these transformative technologies if you’re self-taught:* Should you gift your employer the benefits if you’ve taught yourself ways of getting 25% more work accomplished in a day?* Should you gift yourself the benefits of your increased productivity and work on side projects, or spend more time with your family?Historically speaking, employers were responsible for the means and training of production. They paid for novel technologies —desktops, SaaS, big data— and were responsible for training you on how to use them. AI is different because employers are often lagging behind employees in embracing and educating on how to use the technology effectively. It is very easy to argue that the 200 hours you’ve spent learning AI outside of work hours should exclusively benefit you.AI Time Savings: Benefits & RisksTechnologies have consistently saved us time, but the resulting effects have been questionable. The internet and mobile phones connected the world, while also leading to increased poor health outcomes due to more time sitting. 
We also spend more time alone than ever.

Further back, the Industrial Revolution raised the quality of life for everyone. Still, the commoditization of work led to industrialists exploiting child labour, imposing deplorable working conditions, and polluting communities. The time the workforce saved mostly benefited employers, with employees giving up their ways of life in favour of steady incomes. Most relocated to cities, got cut off from their families, and learned the pain of commuting for the first time.

When it comes to AI, the benefits we hope for centre on automation and augmentation. The hope is that we will benefit from less shitty work (automated away) and that our new capabilities (augmented by AI) will enable us all to become wealthy entrepreneurs. Sure, this may be true for the top 0.01% of AI users who learn how to run what is typically a 10-person business by themselves. For the rest of us, our work may in fact get a lot shittier. At least that’s what the authors of the upcoming book, The AI Con, believe. The authors (and upcoming Design of AI guests), Alex Hanna and Emily M. Bender, tell a tale of how AI’s r

AI's Predictive Powers will Change how we Live & Work

As much as image generation is fun, the power of GenAI is prediction. The technology operates very similarly to people you might meet:
* Some people have studied a single topic for a decade. They’re experts in that topic and can easily infer, correct, and complete tasks within it. They’re unreliable for everything else.
* Some people are generally knowledgeable and have a good understanding of many topics. They aren’t experts but can reliably assist you in many ways. But they’ll also be wrong sometimes.

Frontier models from OpenAI, Anthropic, etc. are highly knowledgeable in almost every topic. That’s the result of being trained on all accessible information online, data they’ve licensed, plus data they’ve allegedly stolen. AI products built on these frontier models are immediately powerful for completing any task. But if you build a point solution on proprietary data explicitly trained on a narrow topic, it can achieve an expert level.

That was the focus of our conversation with Tyler Hochman, the Founder and CEO of FORE Enterprise. We discussed unlocking AI’s predictive power by focusing on expensive and repeating problems, and how any business or founder can leverage proprietary and/or specialized data sets to train AI models that deliver powerful prediction capabilities.

Listen on Spotify | Listen on Apple Podcasts | Watch on YouTube

He’s built AI-powered software to predict when employees may leave their jobs, offer fashion advice, and help professional sports teams improve performance. This video explains how to train your model using Figma files.

This conversation highlights how important your first-party data will become. This data includes more than just your customer data; it should include documenting workflows, quantifying initiatives, and developing a matrix of your offerings/capabilities. Anything repeatable must be quantified as a learning tool.

Example of a data collection strategy for AI training
When OpenAI launched a new image generation feature in ChatGPT, everyone jumped on it.
AI-generated images in the Studio Ghibli style infested our feeds. These images sparked a lot of worthy debate about copyright infringement, which added to the ethical concerns about how OpenAI trains its models. A recent study highlighted evidence that ChatGPT is trained on copyrighted works.

Given that AI models are running out of data to consume, they need to find clever ways to access new data sets. Enter ChatGPT’s image generation tool and the Ghibli craze. Millions of people have been feeding their photos into the model, giving it access to an entire universe of new training data to improve the quality of its image generation capabilities.

Lesson: Collecting user-generated content can provide your custom model with access to training data that was never available before. This holds true whether your product is a document scanner, video generator, accounting software, run tracking app, or anything else. As we move into the next phase of AI model evolution, the data you have access to might become your best competitive moat. Thus, businesses with access to ethically sourced content from their communities and customers have an advantage.

Future of AI-powered workforces
Yesterday, LinkedIn exploded with screenshots of an internal memo sent by Shopify CEO Tobi Lutke to teams. It marks the most public evidence that AI is moving from a toy we experiment with to a critical skill that you’ll be scored on in your next performance review.

The data backs up that AI adoption is surging within workplaces. A study by the Wharton School at the University of Pennsylvania collected data on which use cases AI is most used for. The report highlighted use cases that businesses and employees rely on daily or weekly. Not so long ago, employees secretly used AI at work.
The year-over-year data indicate that AI products are now being adopted at an organizational level.

AI’s impact on our lives will be dramatic & potentially dystopian
Stanford’s 2025 AI Index Report offers metrics demonstrating the significant leaps forward AI has made in both performance and usage. The technology has already surpassed human baseline performance on many measures. And the technology’s predictive capabilities are showcased in how effectively LLMs perform in clinical diagnosis. It points to a future where every one of us (physicians, educators, factory workers, and beyond) will rely on AI to make more informed decisions.

MUST READ: Futures essay about future of superintelligence
The AI 2027 essay, written by researchers and journalists, examines the question of what happens on a global level as we approach AI superintelligence. A long and worthy read, it illustrates that we are much closer to superintelligence than the public may believe and that the snowball effects of achieving it are massive. They predict dystopian outcomes unless the world unifies around regulations and safety guidelines.

If their predictions are true,

Prepare Yourself for AI to Increasingly Change Our Jobs

“The future is already here, it's just not evenly distributed”

Science fiction is inspiring, frightening, and often the best lens into the future. Many ideas about the future are b******t (just like this quote being misattributed to the ever-amazing William Gibson), but even the wildest idea shares truths worth discussing.

This week’s newsletter is an exercise in imagining how AI will transform the way that we work. The future will impact us differently because some already live with a future-centred mindset, while others prefer to shift their thinking daily. One such future-centred thinker is John Whalen, the author of Design for How People Think and the Founder of Brilliant Experience. He shifted from being an AI skeptic to an advocate because he sees a tidal wave of change coming to how product teams operate.

Listen on Spotify | Listen on Apple Podcasts | Watch on YouTube

In the episode, we discuss how he’s implemented AI into his workflows and how he can now accomplish projects in one week that used to take seven weeks to complete. He makes a compelling case for why every team should use AI moderation and synthetic users to enhance product outcomes. But most importantly, he’s become an AI advocate because, over his three-decade career, new tools have always been met with doubts and resistance. Ultimately, businesses force the adoption of tools that deliver a clear ROI.

There’s still much to debate about AI. Reports like this one from Microsoft continue to show that AI isn’t ready to replace humans at key tasks. Another 2024 study found that ChatGPT delivered inconsistent results on a key qualitative research task, compared to humans. The most important thing about this study wasn’t that humans outperformed LLMs; it was the significant performance improvement from GPT-3.5 to GPT-4.0. AI is getting much better at tasks that seemed unimaginable to automate. We’re hearing the same shocking stories across design, development, research, marketing, and sales.
Undoubtedly, AI will be able to automate most of our work within a few years.

Will that mean we’ll be replaced? Yes and no. Just like the industrial age and globalization destroyed artisans, AI will significantly reduce the headcount of “artisanal” product people, and the rest of the work will be an assembly line of tool operators.

Automation will significantly change many people’s lives in ways that may be painful and enduring. But for the economy as a whole, more jobs will be created, and those jobs will look different from those today.

Should we be worried about our jobs?
These same conversations are happening across all fields:
* Will AI Replace Therapists?
* As Technology Progresses, Certain Accounting Jobs May Fade Away
* The Risk of Dependence on Artificial Intelligence in Surgery
* AI could terminate graphic designers before 2030

You’re probably reading this with a sense of confidence that you’re shielded from the impacts of AI because you’re working on the bleeding edge of technology. It’s true. You should be better equipped to navigate the changes as they happen and adapt to the future better than others. Conversely, your roles face additional pressure to change faster than in other industries. The business realities of being backed by venture capital and private equity mean you’re always chasing the future. Tech and agencies have to unlock benefits from AI or risk losing market share and funding.

The problem is that nobody can agree on AI's expected impact because it’s still just science fiction.

According to the OECD report, the level of impact will largely depend on the level of adoption. High adopters might expect a 3x gain compared to those who adopt AI minimally. A McKinsey report highlights the pressure being placed on employees. Their data shows that C-suite executives cite employee readiness as a barrier to gaining benefits from AI.
Only 1% of them believe their AI investments have reached maturity.

Combined with last week’s conversation with Jan Emmanuele, this points to AI investments in creative augmentation and automation surging in 2026 and beyond. This suggests that employees will be under a lot of pressure to become more productive or else be replaced. Listen to that episode for more details on how AI is being adopted:

Listen on Spotify | Listen on Apple Podcasts

How will jobs change as a result of AI?
There’s no doubt that our jobs will change. They’ve had to change every time a transformative new technology becomes widely adopted. The only difference now is the speed at which change is happening.

Let’s analyze how roles are changing from the perspective of product teams.
* Our jobs used to be distinct. Each of us had specialties and expertise in areas that protected us.
* Our jobs are increasingly commoditized, meaning people from other jobs can do many of our tasks.

For example, a designer can now do tasks that previously were out of their sphere:
* Use ChatGPT and Cove to explore a strat