Show Notes

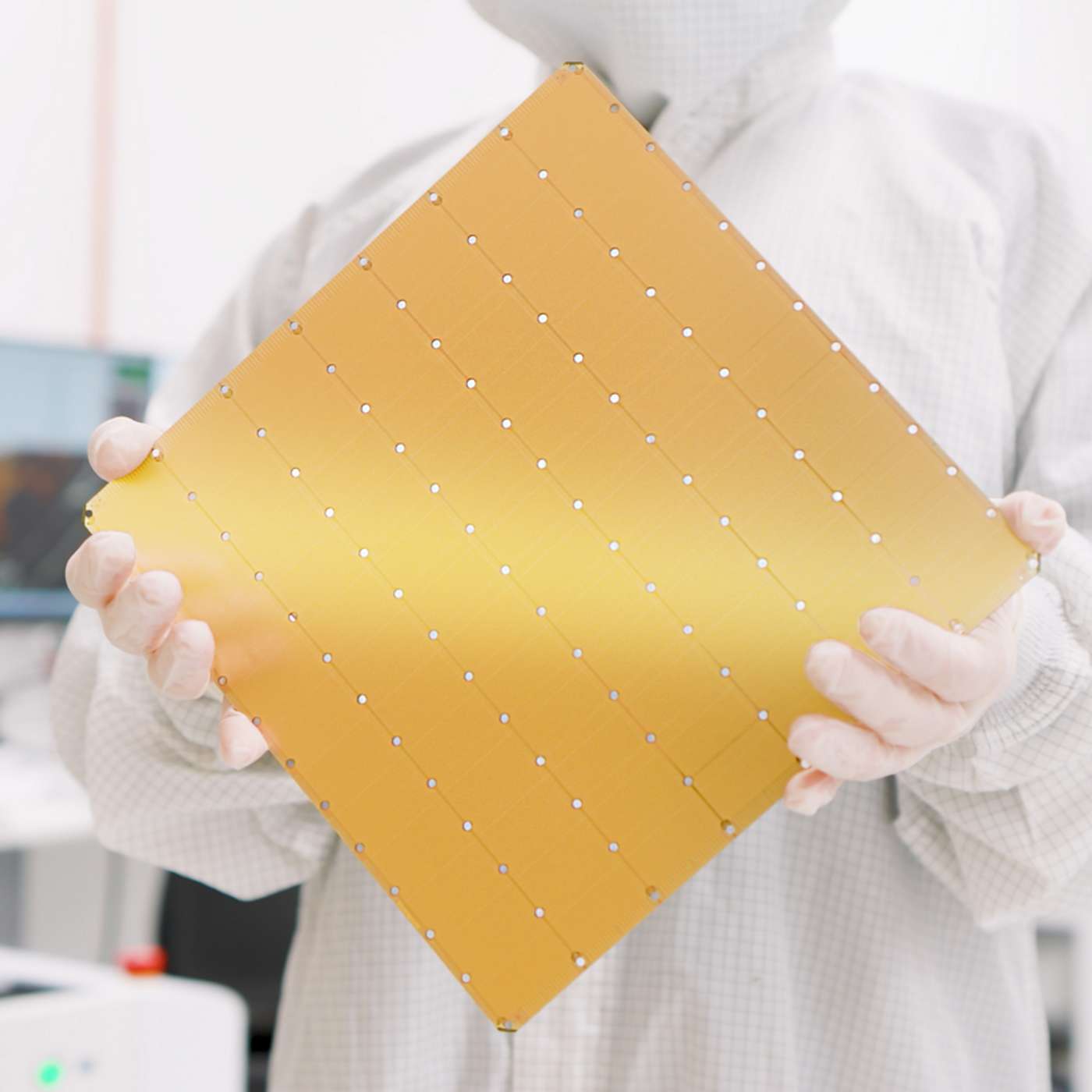

In this episode, we discuss Cerebras Systems and their Wafer-Scale Engine, currently the fastest LLM inference processor, with 7,000x more memory bandwidth than the NVIDIA H100. Together with G42, they’re also developing the Condor Galaxy, potentially the largest AI supercomputer. Is this all just hype? What are the real-world use cases, and why should average users care about it?