AI & The Future of Humanity: Artificial Intelligence, Technology, VR, Algorithm, Automation, ChatGPT, Robotics, Augmented Reality, Big Data, IoT, Social Media, CGI, Generative-AI, Innovation, Nanotechnology, Science, Quantum Computing: The Creative Process Interviews

145 episodes

AI & The Pathway to Flow with Neuroscientist, Fmr. Dancer DR. JULIA CHRISTENSEN

“So, syncopation is now the big thing. It will induce people to groove and to like your music more. So let's have a lot of syncopation inside your music and you'll sell a lot. By chasing superficial beauty, which is what AI gives us at the moment, it aims for perfect outcomes. Not that anything these models produce is perfect, because how do you evaluate perfection? But they are based on the data that most people want to see again. That's extremely important to bear in mind. When you say 'cluttered mind,' it's actually also a cluttered brain in terms of the neurotransmitters out and about. As we strive for that perfect coding and external beauty, our brain releases dopamine signals. Dopamine is good; it's a learning signal to the brain, but we need to know how to use it. Constantly swiping our phone and getting this beauty into our brain via our eyes or via the syncopations in the music teaches our mind to seek that all the time because that's a dopamine signal. It's a learning signal. So, striving after these shapes and sound cues repeatedly clutters your brain. That's why your mind is full.”

Dr. Julia F. Christensen is a Danish neuroscientist and former dancer currently working as a senior scientist at the Max Planck Institute for Empirical Aesthetics in Germany. She studied psychology, human evolution, and neuroscience in France, Spain, and the UK. For her postdoctoral training, she worked in international, interdisciplinary research labs at University College London, City, University of London, and the Warburg Institute, London, and was awarded a postdoctoral Newton International Fellowship by the British Academy. Her new book, The Pathway to Flow, is about the science of flow: why our brain needs it and how to create the right habits in our brain to get it.

https://www.linkedin.com/in/dr-julia-f-christensen-36539a144
https://www.instagram.com/dr.julia.f.christensen?igsh=cHZkODgxczJqZmxl
www.creativeprocess.info
www.oneplanetpodcast.org
IG www.instagram.com/creativeprocesspodcast

AI, Technological Progress & the Growth Dilemma w/ Economist DANIEL SUSSKIND - Highlights

“The running theme in all of my work has been technology. The first book that I co-authored with my dad was published in 2015. The second book I wrote was A World Without Work: Technology, Automation, and How We Should Respond, published in 2020, just before the pandemic began. My new book Growth: A Reckoning is about growth, but also technological progress, because what drives growth is technological progress—we have a choice to change the nature of growth, and the same is true of our technological progress. To reach a dynamic economy capable of generating ever more ideas about the world, we need to use the technologies we have to generate new ideas about the world. One of the technologies I've been particularly excited by was AlphaFold, developed by DeepMind to solve protein folding problems in biology. Essentially, understanding the 3D shape of proteins is important for understanding disease and designing effective treatment, but incredibly difficult to figure out, and AlphaFold has solved this problem by providing the 3D structures of millions of proteins. As the only economist at the Institute for Ethics in AI, I’ve always found the moral, ethical side of technology interesting. I often get asked, ‘What can machines do, and what can they not do?’ But I think one of the most troubling, but also one of the most fascinating things about technology is it is forcing us to ask the question ‘What does it really mean to be human? What is humanity?’ For a long time, many people thought the core of what it means to be a human being is to be a creative thing. But with the arrival of generative AI in the last few years, I think that that has been really called into question. These AI systems are particularly good at creative tasks—coming up with original, novel text, images, and video. In fact, I actually use these AI systems to generate bedtime stories with my children—getting the kids to craft a good prompt is quite a fun, intellectually demanding exercise, and these technologies now give my children a storytelling capability that would have been unimaginable only a few years ago. So, one of the interesting philosophical consequences of technologies is that it's challenging some of the complacency and deep-rooted assumptions about what it really means to be a human being.”

Daniel Susskind is a Research Professor in Economics at King's College London and a Senior Research Associate at the Institute for Ethics in AI at Oxford University. He is the author of A World Without Work and co-author of the bestselling The Future of the Professions. Previously, he worked in various roles in the British Government: in the Prime Minister’s Strategy Unit, in the Policy Unit in 10 Downing Street, and in the Cabinet Office. His latest book is Growth: A Reckoning.

www.danielsusskind.com
www.penguin.co.uk/books/446381/growth-by-susskind-daniel/9780241542309

Growth: A Reckoning with Economist DANIEL SUSSKIND

How can we look beyond GDP and develop new metrics that balance growth with human flourishing and environmental well-being? How can we be more engaged global citizens? In this age of AI, what does it really mean to be human? And how are our technologies transforming us?

Daniel Susskind is a Research Professor in Economics at King's College London and a Senior Research Associate at the Institute for Ethics in AI at Oxford University. He is the author of A World Without Work and co-author of the bestselling The Future of the Professions. Previously, he worked in various roles in the British Government: in the Prime Minister’s Strategy Unit, in the Policy Unit in 10 Downing Street, and in the Cabinet Office. His latest book is Growth: A Reckoning.

www.danielsusskind.com
www.penguin.co.uk/books/446381/growth-by-susskind-daniel/9780241542309

The Human Smart City: Balancing Ecology & Economy with CARLOS MORENO - Highlights

“This is the difference between a technological smart city and a real human smart city towards a 15-minute city as the expression of a human-centered urban approach. This is our challenge for the next decades and our target, to humanize our cities. The Olympic Games in Paris have shown the world that it is possible to recreate, to regenerate a really vibrant city with harmonious life between districts, different places, the role of the Seine River as nature in the presence of a lot of people for having more real livability and not an illusory computer life driven by social networks.”

Carlos Moreno was born in Colombia in 1959 and moved to France at the age of 20. He is known for his influential "15-Minute City" concept, embraced by Paris Mayor Anne Hidalgo and leading cities around the world. Scientific Director of the "Entrepreneurship - Territory - Innovation" Chair at the Paris Sorbonne Business School, he is an international expert on the Human Smart City and a Knight of the French Legion of Honour. He is a recipient of the Obel Award and the UN-Habitat Scroll of Honour. His latest book is The 15-Minute City: A Solution to Saving Our Time and Our Planet.

https://www.moreno-web.net/
https://www.wiley.com/en-us/The+15-Minute+City%3A+A+Solution+to+Saving+Our+Time+and+Our+Planet-p-9781394228140

The 15-Minute City: A Solution to Saving Our Time & Our Planet with CARLOS MORENO

How can the 15-minute city model revolutionize urban living, enhance wellbeing, and reduce our carbon footprint? Online shopping is turning cities into ghost towns. We can now buy anything, anywhere, anytime. How can we learn to stop scrolling and start strolling, and create more livable, sustainable communities we are happy to call home?

Carlos Moreno was born in Colombia in 1959 and moved to France at the age of 20. He is known for his influential "15-Minute City" concept, embraced by Paris Mayor Anne Hidalgo and leading cities around the world. Scientific Director of the "Entrepreneurship - Territory - Innovation" Chair at the Paris Sorbonne Business School, he is an international expert on the Human Smart City and a Knight of the French Legion of Honour. He is a recipient of the Obel Award and the UN-Habitat Scroll of Honour. His latest book is The 15-Minute City: A Solution to Saving Our Time and Our Planet.

https://www.moreno-web.net/
https://www.wiley.com/en-us/The+15-Minute+City%3A+A+Solution+to+Saving+Our+Time+and+Our+Planet-p-9781394228140

Neuroscience, AI & The Future of Humanity - DR. BEN SHOFTY - Highlights

“I'm one of the people who believe that anything that we as human beings can imagine will eventually happen. So, if somebody has raised the possibility of having brain implants that augment the brain and generate additional functions, I feel like it will eventually happen. There are a lot of private companies, like Elon Musk's Neuralink and others, that are busy designing these interfaces and planning these devices. Of course, nothing is available or even close to completion right now. The next step, of course, would be to modulate them. Just like any other thing in medicine, it will start or has already started with pathological states which we've talked about and people looking for potential interventions through TMS (transcranial magnetic stimulation). It doesn't necessarily have to be invasive, but of course the next step, especially when we're talking about the brain, is to intervene and generate additional functions or to improve the way the brain functions. Many people are working on trying to generate memory augmentation, navigation augmentations, and a lot of other functions. I assume eventually it will reach a point where we'll be able to pick and choose what we want to augment about our own brains. I assume that the technology will be there eventually. And this is something that will be a part of the natural evolution of the human race.”

Dr. Ben Shofty is a functional neurosurgeon affiliated with the University of Utah. He graduated from the Tel Aviv University Faculty of Medicine, received his PhD in neurosurgical training from the Israel Institute of Technology, and completed his training at the Tel Aviv Medical Center and Baylor University. He was also an Israeli national rugby player. His practice specializes in neuromodulation and exploring treatments for disorders such as OCD, depression, and epilepsy, among others, while also seeking to understand the science behind creativity, mind-wandering, and the many complexities of the brain.

https://healthcare.utah.edu/find-a-doctor/ben-shofty
https://academic.oup.com/brain/advance-article/doi/10.1093/brain/awae199/7695856

The Neuroscience of Creativity with DR. BEN SHOFTY

Where do creative thoughts come from? How can we harness our stream of consciousness and spontaneity to express ourselves? How are mind-wandering, meditation, and the arts good for our creativity and physical and mental well-being?

Dr. Ben Shofty is a functional neurosurgeon affiliated with the University of Utah. He graduated from the Tel Aviv University Faculty of Medicine, received his PhD in neurosurgical training from the Israel Institute of Technology, and completed his training at the Tel Aviv Medical Center and Baylor University. He was also an Israeli national rugby player. His practice specializes in neuromodulation and exploring treatments for disorders such as OCD, depression, and epilepsy, among others, while also seeking to understand the science behind creativity, mind-wandering, and the many complexities of the brain.

https://healthcare.utah.edu/find-a-doctor/ben-shofty
https://academic.oup.com/brain/advance-article/doi/10.1093/brain/awae199/7695856

What is good design? How Is AI Shaping Our World? - SCOTT DOORLEY & CARISSA CARTER - Co-authors of Assembling Tomorrow - Highlights

“The way we understand the world and how the world actually works is just not mapped perfectly. That kind of leads to problems because we don't know exactly what we're doing in the world. We can't see all the repercussions of the things we create until later on. One silver lining about the technologies we're creating is that technologies like AI could be used to help us with this issue, with the fact that our mental models aren't exactly in line with how the world works. AI is actually very good at predicting and modeling outcomes. It could be used to understand climate change better so that we're able to understand it in a way that allows us to act. It could also help us predict the impacts of the things that we're making. So there's a bit of a silver lining in here, even though it can feel scary to be in a situation where your mental model and how the world works are not in line.”

“I worry that AI is changing my thoughts and can control my thoughts, and that used to sound really far-fetched and now seems sort of middle of the road. I guarantee in a year's time that will sound like a very normal concern. Social listening is very sophisticated. All of the data in the websites that we visit, the data trails that we leave out in the world, are tracking us—our locations, our behaviors, and our habits such that there are many sites out there that can predict exactly what we're thinking and feeling and feed us advertising content or things that aren't even advertising content that can change what our next behaviors are. I think that's getting more and more sophisticated. We have already seen our political elections affected by mass attacks on our social media. When that comes down to our individual agency and behavior, I think that's something we do need to be concerned about. The way that we as individuals can combat it is to be aware that it's happening. Really start to notice the unnoticed, and I still feel optimistic amongst this concern.”

Scott Doorley is the Creative Director at Stanford's d.school and co-author of Make Space. He teaches design communication, and his work has been featured in museums and architecture and urbanism and The New York Times. Carissa Carter is the Academic Director at Stanford's d.school and author of The Secret Language of Maps. She teaches courses on emerging technologies and data visualization and received Fast Company and Core77 awards for her work on designing with machine learning and blockchain. Together, they co-authored Assembling Tomorrow: A Guide to Designing a Thriving Future.

www.scottdoorley.com
www.snowflyzone.com
https://dschool.stanford.edu/
www.penguinrandomhouse.com/books/623529/assembling-tomorrow-by-scott-doorley-carissa-carter-and-stanford-dschool-illustrations-by-armando-veve/

Can Design Save the World? - SCOTT DOORLEY & CARISSA CARTER - Co-authors of Assembling Tomorrow - Directors of Stanford’s d.school

How can we design and adapt for the uncertainties of the 21st century? How do emotions shape our decisions and the way we design the world around us?

Scott Doorley is the Creative Director at Stanford's d.school and co-author of Make Space. He teaches design communication, and his work has been featured in museums and architecture and urbanism and The New York Times. Carissa Carter is the Academic Director at Stanford's d.school and author of The Secret Language of Maps. She teaches courses on emerging technologies and data visualization and received Fast Company and Core77 awards for her work on designing with machine learning and blockchain. Together, they co-authored Assembling Tomorrow: A Guide to Designing a Thriving Future.

www.scottdoorley.com
www.snowflyzone.com
https://dschool.stanford.edu/
www.penguinrandomhouse.com/books/623529/assembling-tomorrow-by-scott-doorley-carissa-carter-and-stanford-dschool-illustrations-by-armando-veve/

Image credit: Patrick Beaudouin

AI, Tech & The Future of Museums - STEPHEN REILY, Founding Director of Remuseum on Transforming Cultural Spaces

“The opportunity is that we have never had a public that is more passionate and obsessed with visual imagery. If the owners of the best original imagery in the world can't figure out how to take advantage of the fact that the world has now become obsessed with these treasures that we have to offer as museums, then shame on us. This is the opportunity to say, if you're spending all day scrolling on Instagram looking for amazing imagery, come and see the original source. Come and see the real work. Let us figure out how to make that connection.”

Stephen Reily is the Founding Director of Remuseum, an independent research project housed at Crystal Bridges Museum of American Art in Bentonville, Arkansas. Funded by arts patron David Booth with additional support from the Ford Foundation, Remuseum focuses on advancing relevance and governance in museums across the U.S. He works with museums to create financially sustainable strategies that are human-focused, centering on inclusion, diversity, and important causes like climate change. During his time as director of the Speed Art Museum in Louisville, KY, Reily presented Promise, Witness, Remembrance, an exhibition in response to the killing of Breonna Taylor and a year of protests in Louisville. In 2022, he co-wrote a book documenting the exhibition. An active civic leader, Reily has been part of numerous community organizations and boards, including the Reily Reentry Project, which supports expungement programs for Kentucky citizens, and Creative Capital, which offers grants for the arts. He also founded Seed Capital Kentucky, a nonprofit that aims to improve the food economy in the area. A Yale and Stanford Law graduate, Reily clerked for U.S. Supreme Court Justice John Paul Stevens before launching a successful entrepreneurial career, experiences he draws upon for public engagement initiatives.

https://remuseum.org
https://crystalbridges.org
www.stephenreily.com
www.kentuckypress.com/9781734248517/promise-witness-remembrance

AI, Curiosity, Cognition & Creativity with Neuroscientist DR. JACQUELINE GOTTLIEB

“We have an onslaught of information the moment we open our eyes. We evolved to deal with an onslaught of information, and we are masters at focusing and ignoring vast amounts of information. Now, AI in this digital age is a relatively new stream of information, which is man-made, so we make it more salient. So, yes, it's harder to ignore it, but people can learn to ignore it, and indeed, it's a learning process. I think it will also require learning how to teach our children. I mean, we're raising generations of kids who will take AI and the digital world as a given. To them, it will be no different than a chair and a table were to us. So they will learn to not be so distracted by chairs and tables.”

Dr. Jacqueline Gottlieb is a Professor of Neuroscience and Principal Investigator at Columbia University’s Zuckerman Mind Brain Behavior Institute. Dr. Gottlieb studies the mechanisms that underlie the brain's higher cognitive functions, including decision making, memory, and attention. Her interest is in how the brain gathers the evidence it needs—and ignores what it doesn’t—during everyday tasks and during special states such as curiosity.

AI, Cognitive Bias & the Future of Journalism w/ Pulitzer Prize-winning Journalist NICHOLAS KRISTOF

“There have been some alarming experiments that show AI arguments are better at persuading people than humans are at persuading people. I think that's partly because humans tend to make the arguments that we ourselves find most persuasive. For example, a liberal will make the arguments that will appeal to liberals, but the person you're probably trying to persuade is somebody in the center. We're just not good at putting ourselves in other people's shoes. That's something I try very hard to do in the column, but I often fall short. And with AI, I think people are going to become more vulnerable to being manipulated. I think we're at risk of being manipulated by our own cognitive biases and the tendency to reach out for information sources that will confirm our prejudices. Years ago, the theorist Nicholas Negroponte wrote that the internet was going to bring a product he called the Daily Me—basically information perfectly targeted to our own brains—and that's kind of what we've gotten now. A conservative will get conservative sources that show how awful Democrats are and will have information that buttresses that point of view, while liberals will get the liberal version of that. So, I think we have to try to understand those cognitive biases and understand the degree to which we are all vulnerable to being fooled by selection bias. I'd like to see high schools, in particular, have more information training and media literacy programs so that younger people can learn that there are some news sources that are a little better than others and that just because you see something on Facebook doesn't make it true.”

Nicholas D. Kristof is a two-time Pulitzer Prize-winning journalist and op-ed columnist for The New York Times, where he was previously bureau chief in Hong Kong, Beijing, and Tokyo. Kristof is a regular CNN contributor and has covered, among many other events and crises, the Tiananmen Square protests, the Darfur genocide, the Yemeni civil war, and the U.S. opioid crisis. He is the author of the memoir Chasing Hope: A Reporter's Life and co-author, with his wife, Sheryl WuDunn, of five previous books: Tightrope, A Path Appears, Half the Sky, Thunder from the East, and China Wakes.

www.nytimes.com/column/nicholas-kristof
www.penguinrandomhouse.com/books/720814/chasing-hope-by-nicholas-d-kristof
Family vineyard & apple orchard in Yamhill, Oregon: www.kristoffarms.com

AI, Populism & Consumer Society with Historian FRANK TRENTMANN

“The bridge between Out of the Darkness and my previous work, which looked at the transformation of consumer culture in the world, is morality. One thing that became clear in writing Empire of Things was that there's virtually no time or place in history where consumption isn't heavily moralized. Our lifestyle is treated as a mirror of our virtue and sins. And in the course of modern history, there's been a remarkable moral shift in the way that consumption used to be seen as something that led you astray or undermined authority, status, gender roles, and wasted money, to a source of growth, a source of self-fashioning, the way we create our own identity. In the last few years, the environmental crisis has led to new questions about whether consumption is good or bad. And in 2015, during the refugee crisis when Germany took in almost a million refugees, morality became a very powerful way in which Germans talked about themselves as humanitarian world champions, as one politician called it. I realized that there's many other topics from family, work, to saving the environment, and of course, with regard to the German responsibility for the Holocaust and the war of extermination where German public discourse is heavily moralistic, so I became interested in charting that historical process.”

What can we learn from Germany's postwar transformation to help us address today's environmental and humanitarian crises? With the rise of populism, authoritarianism, and digital propaganda, how can history provide insights into the challenges of modern democracy?

Frank Trentmann is a Professor of History at Birkbeck, University of London, and at the University of Helsinki. He is a prize-winning historian, having received awards such as the Whitfield Prize, the Austrian Wissenschaftsbuch/Science Book Prize, the Humboldt Prize for Research, and the 2023 Bochum Historians' Award. He has also been named a Moore Scholar at Caltech. He is the author of Empire of Things and Free Trade Nation. His latest book is Out of the Darkness: The Germans 1942 to 2022, which explores Germany's transformation after the Second World War.

www.bbk.ac.uk/our-staff/profile/8009279/frank-trentmann
www.penguin.co.uk/authors/32274/frank-trentmann?tab=penguin-books

Is AI capable of creating a protest song that disrupts oppression & inspires social change? - JAKE FERGUSON, ANTHONY JOSEPH & JERMAIN JACKMAN

“There's something raw about The Architecture of Oppression, both part one and part two. There's a raw realness and authenticity in those songs that AI can't create. There's a lived experience that AI won't understand, and there's a feeling in those songs. And it's not just in the words from the spoken word artists, or in the instruments that are being played. It's in the voice that you hear. You hear the pain, you hear the struggle, you hear the joy, you hear all of those emotions in all of those songs. And that's something that AI can't make up or create.”

Jake Ferguson is an award-winning musician known for his work with The Heliocentrics and as a solo artist under the name The Brkn Record. Alongside legendary drummer Malcolm Catto, Ferguson has composed two film scores and over 10 albums, collaborating with icons like Archie Shepp, Mulatu Astatke, and Melvin Van Peebles. His latest album is The Architecture of Oppression Part 2. The album also features singer and political activist Jermain Jackman, a former winner of The Voice (2014), and the T.S. Eliot Prize-winning poet and musician Anthony Joseph.

“I think as humans, we forget. We are often limited by our own stereotypes, and we don't see that in everyone there's the potential for beauty and love and all these things. And I think The Architecture of Oppression, both parts one and two, are really a reflection of all the community and civil rights work that I've been doing for the same amount of time, really, 25 years. And I wanted to try and mix my day job and my music side, so bringing those two sides of my life together. I wanted to create a platform for black artists, black singers, and poets who I really admire. Jermain is somebody I've worked with for probably about six, seven years now. He's also in the trenches of the black civil rights struggle. We worked together on a number of projects, but it was very interesting to then work with Jermain in a purely artistic capacity. And it was a no-brainer to give Anthony a call for this second album because I know of his pedigree, and he's much more able to put ideas and thoughts on paper than I would be able to.”

The SDGs, AI & UN Summit of the Future - GUILLAUME LAFORTUNE - VP, UN SDSN, Paris

“The SDSN has been set up to mobilize research and science for the Sustainable Development Goals. Each year, we aim to provide a fair and accurate assessment of countries' progress on the 17 Sustainable Development Goals. The development goals were adopted back in 2015 by all UN member states, marking the first time in human history that we have a common goal for the entire world. Our goal each year with the SDG Index is to have sound methodologies and translate these into actionable insights that can generate impactful results at the end of the day. Out of all the targets that we track, only 16 percent are estimated to be on track. This agenda combines not only environmental development but also social development, economic development, and good governance. Currently, none of the SDGs are on track to be achieved at the global level.”
In today's podcast, we talk with Guillaume Lafortune, Vice President and Head of the Paris Office of the UN Sustainable Development Solutions Network (SDSN), the largest global network of scientists and practitioners dedicated to implementing the Sustainable Development Goals (SDGs). We discuss the intersections of sustainability, global progress, the UN Summit of the Future, and the daunting challenges we face. From the impact of war on climate initiatives to transforming data into narratives that drive change, we explore how global cooperation, education, and technology pave the way for a sustainable future and look at the lessons of history and the power of diplomacy in shaping our path forward.
Guillaume Lafortune joined SDSN in 2017 to lead work on SDG data, policies, and financing, including the preparation of the annual Sustainable Development Report (which includes the SDG Index and Dashboards). Between 2020 and 2022, Guillaume was a member of The Lancet Commission on COVID-19, where he coordinated the taskforces on “Fiscal Policy and Financial Markets” and “Green Recovery”, and co-authored the final report of the Commission. Guillaume is also a member of the Grenoble Center for Economic Research (CREG) at the Grenoble Alpes University. Previously, he served as an economist at the OECD in Paris and at the Ministry of Economic Development in the Government of Quebec (Canada). Guillaume is the author of more than 50 scientific publications, book chapters, policy briefs and international reports on sustainable development, economic policy and good governance.
SDSN's Summit of the Future Recommendations | SDG Transformation Center | SDSN Global Commission for Urban SDG Finance
www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

AI & How Utopian Visions Shape Our Reality & Future - Highlights - S. D. CHROSTOWSKA

“There’s the existing AI and the dream of artificial general intelligence that is aligned with our values and will make our lives better. Certainly, the techno-utopian dream is that it will lead us towards utopia. It is the means of organizing human collectivities, human societies, in a way that would reconcile all the variables, all the things that we can't reconcile because we don't have enough of a fine-grained understanding of how people interact, the different motivations of their psychologies and of societies, of groups, of people. Of course, that's another kind of psychology that we're talking about. So I think the dream of AI is a utopian dream that stands correcting, but it is itself being corrected by those who are the curators of that technology. Now you asked me about the changing role of artists in this landscape. I would say, first of all, that I'm for virtuosity. And this makes me think of AI and a higher level AI, it would be virtuous before it becomes super intelligence.”
S. D. Chrostowska is professor of humanities at York University, Canada. She is the author of several books, among them Permission, The Eyelid, A Cage for Every Child, and, most recently, Utopia in the Age of Survival: Between Myth and Politics. Her essays have appeared in such venues as Public Culture, Telos, Boundary 2, and The Hedgehog Review. She also coedits the French surrealist review Alcheringa and is curator of the 19th International Exhibition of Surrealism, Marvellous Utopia, which runs from July to September 2024 in Saint-Cirq-Lapopie, France.
https://profiles.laps.yorku.ca/profiles/sylwiac/ www.sup.org/books/title/?id=33445 https://chbooks.com/Books/T/The-Eyelid https://ciscm.fr/en/merveilleuse-utopie www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

Utopia in the Age of Survival with S. D. CHROSTOWSKA

As Surrealism turns 100, what can it teach us about the importance of dreaming and creating a better society? Will we wake up from the consumerist dream sold to us by capitalism and how would that change our ideas of utopia?
S. D. Chrostowska is professor of humanities at York University, Canada. She is the author of several books, among them Permission, The Eyelid, A Cage for Every Child, and, most recently, Utopia in the Age of Survival: Between Myth and Politics. Her essays have appeared in such venues as Public Culture, Telos, Boundary 2, and The Hedgehog Review. She also coedits the French surrealist review Alcheringa and is curator of the 19th International Exhibition of Surrealism, Marvellous Utopia, which runs from July to September 2024 in Saint-Cirq-Lapopie, France.
“There’s the existing AI and the dream of artificial general intelligence that is aligned with our values and will make our lives better. Certainly, the techno-utopian dream is that it will lead us towards utopia. It is the means of organizing human collectivities, human societies, in a way that would reconcile all the variables, all the things that we can't reconcile because we don't have enough of a fine-grained understanding of how people interact, the different motivations of their psychologies and of societies, of groups, of people. Of course, that's another kind of psychology that we're talking about. So I think the dream of AI is a utopian dream that stands correcting, but it is itself being corrected by those who are the curators of that technology. Now you asked me about the changing role of artists in this landscape. I would say, first of all, that I'm for virtuosity. And this makes me think of AI and a higher level AI, it would be virtuous before it becomes super intelligence.”
https://profiles.laps.yorku.ca/profiles/sylwiac/ www.sup.org/books/title/?id=33445 https://chbooks.com/Books/T/The-Eyelid https://ciscm.fr/en/merveilleuse-utopie www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

AI’s Role in Society, Culture & Climate with CHARLIE HERTZOG YOUNG

The planet’s well-being unites us all, from ecosystems to societies, global systems to individual health. How is planetary health linked to mental health?
Charlie Hertzog Young is a researcher, writer and award-winning activist. He identifies as a “proudly mad bipolar double amputee” and has worked for the New Economics Foundation, the Royal Society of Arts, the Good Law Project, the Four Day Week Campaign and the Centre for Progressive Change, as well as the UK Labour Party under three consecutive leaders. Charlie has spoken at the LSE, the UN and the World Economic Forum. He studied at Harvard, SOAS and Schumacher College and has written for The Ecologist, The Independent, Novara Media, Open Democracy and The Guardian. He is the author of Spinning Out: Climate Change, Mental Health and Fighting for a Better Future.
https://charliehertzogyoung.me https://footnotepress.com/books/spinning-out/ www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

The Future of Energy - RICHARD BLACK - Director, Policy & Strategy, Ember - Fmr. BBC Environment Correspondent

Richard Black spent 15 years as a science and environment correspondent for the BBC World Service and BBC News, before setting up the Energy & Climate Intelligence Unit. He now lives in Berlin and is the Director of Policy and Strategy at the global clean energy think tank Ember, which aims to accelerate the clean energy transition with data and policy. He is the author of The Future of Energy and Denied: The Rise and Fall of Climate Contrarianism, and is an Honorary Research Fellow at Imperial College London.
“I guess no one needs AI in the same way that we need oil or food. So, from that point of view, it's a lot easier. AI is fascinating, slightly scary. I find that the amount of discussion of setting it off in a carefully thought-through direction is way lower than the amount of fascination with the latest thing that it can do. Often fiction should be our guide to these things or can be a valuable guide to these things. And if we go back to Isaac Asimov and his three laws of robotics, and to all these three very fundamental points that he said should be embedded in all automata, there's no discussion of that around AI, like none. I personally find that quite a hole in the discourse that we're having.”
https://mhpbooks.com/books/the-future-of-energy https://ember-climate.org/about/people/richard-black https://ember-climate.org www.therealpress.co.uk/?s=Richard+black www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

DIANE VON FÜRSTENBERG: Woman in Charge & How AI Will Change Storytelling w/ Oscar-winning Director SHARMEEN OBAID-CHINOY

Sharmeen Obaid-Chinoy is an Oscar and Emmy award-winning Canadian-Pakistani filmmaker whose work highlights extraordinary women and their stories. She earned her first Academy Award in 2012 for her documentary Saving Face, about the Pakistani women targeted by brutal acid attacks. Today, Obaid-Chinoy is the first female film director to have won two Oscars by the age of 37. In 2023, it was announced that Obaid-Chinoy would direct the next Star Wars film starring Daisy Ridley. Her most recent project, co-directed alongside Trish Dalton, is the new documentary Diane von Fürstenberg: Woman in Charge, about the trailblazing Belgian fashion designer who invented the wrap dress 50 years ago. The film had its world premiere as the opening night selection at the 2024 Tribeca Festival on June 5th and premiered on June 25th on Hulu in the U.S. and Disney+ internationally. A product of Obaid-Chinoy's incredibly talented female filmmaking team, Woman in Charge provides an intimate look into Diane von Fürstenberg’s life and accomplishments and chronicles the trajectory of her signature dress from an innovative fashion statement to a powerful symbol of feminism.
“I think it's very early for us to see how AI is going to impact us all, especially documentary filmmakers. And so I embrace technology, and I encourage everyone as filmmakers to do so. We're looking at how AI is facilitating filmmakers to tell stories, create more visual worlds. I think that right now we're in the play phase of AI, where there's a lot of new tools and you're playing in a sandbox with them to see how they will develop. I don't think that AI has developed to the extent that it is in some way dramatically changing the film industry as we speak, but in the next two years, it will. We have yet to see how it will. As someone who creates films, I always experiment, and then I see what it is that I'd like to take from that technology as I move forward.”
www.hulu.com/movie/diane-von-furstenberg-woman-in-charge-95fb421e-b7b1-4bfc-9bbf-ea666dba0b02 https://www.disneyplus.com/movies/diane-von-furstenberg-woman-in-charge/1jrpX9AhsaJ6 https://socfilms.com www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

Does AI-generated Perfection Detach Us from Reality, Life & Human Connection? - Highlights - HENRY AJDER

“Having worked in this space for seven years, really since the inception of DeepFakes in late 2017, for some time, it was possible with just a few hours a day to really be on top of the key kind of technical developments. It's now truly global. AI-generated media have really exploded, particularly the last 18 months, but they've been bubbling under the surface for some time in various different use cases. The disinformation and deepfakes in the political sphere really matches some of the fears held five, six years ago, but at the time were more speculative. The fears around how deepfakes could be used in propaganda efforts, in attempts to destabilize democratic processes, to try and influence elections have really kind of reached a fever pitch. Up until this year, I've always really said, ‘Well, look, we've got some fairly narrow examples of deepfakes and AI-generated content being deployed, but it's nowhere near on the scale or the effectiveness required to actually have that kind of massive impact.’ This year, it's no longer a question of are deepfakes going to be used, it's now how effective are they actually going to be? I'm worried. I think a lot of the discourse around gen AI and so on is very much you're either an AI zoomer or an AI doomer, right? But for me, I don't think we need to have this kind of mutually exclusive attitude. I think we can kind of look at different use cases. There are really powerful and quite amazing use cases, but those very same baseline technologies can be weaponized if they're not developed responsibly with the appropriate safety measures, guardrails, and understanding from people using and developing them. So it is really about that balancing act for me. And a lot of my research over the years has been focused on mapping the evolution of AI generated content as a malicious tool.”
Henry Ajder is an advisor, speaker, and broadcaster working at the frontier of the generative AI and the synthetic media revolution. He advises organizations on the opportunities and challenges these technologies present, including Adobe, Meta, The European Commission, BBC, The Partnership on AI, and The House of Lords. Previously, Henry led Synthetic Futures, the first initiative dedicated to ethical generative AI and metaverse technologies, bringing together over 50 industry-leading organizations. Henry presented the BBC documentary series, The Future Will be Synthesised.
www.henryajder.com www.bbc.co.uk/programmes/m0017cgr www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

How is AI Changing Our Perception of Reality, Creativity & Human Connection? w/ HENRY AJDER - AI Advisor

How is artificial intelligence redefining our perception of reality and truth? Can AI be creative? And how is it changing art and innovation? Does AI-generated perfection detach us from reality and genuine human connection?
Henry Ajder is an advisor, speaker, and broadcaster working at the frontier of the generative AI and the synthetic media revolution. He advises organizations on the opportunities and challenges these technologies present, including Adobe, Meta, The European Commission, BBC, The Partnership on AI, and The House of Lords. Previously, Henry led Synthetic Futures, the first initiative dedicated to ethical generative AI and metaverse technologies, bringing together over 50 industry-leading organizations. Henry presented the BBC documentary series, The Future Will be Synthesised.
“Having worked in this space for seven years, really since the inception of DeepFakes in late 2017, for some time, it was possible with just a few hours a day to really be on top of the key kind of technical developments. It's now truly global. AI-generated media have really exploded, particularly the last 18 months, but they've been bubbling under the surface for some time in various different use cases. The disinformation and deepfakes in the political sphere really matches some of the fears held five, six years ago, but at the time were more speculative. The fears around how deepfakes could be used in propaganda efforts, in attempts to destabilize democratic processes, to try and influence elections have really kind of reached a fever pitch. Up until this year, I've always really said, ‘Well, look, we've got some fairly narrow examples of deepfakes and AI-generated content being deployed, but it's nowhere near on the scale or the effectiveness required to actually have that kind of massive impact.’ This year, it's no longer a question of are deepfakes going to be used, it's now how effective are they actually going to be? I'm worried. I think a lot of the discourse around gen AI and so on is very much you're either an AI zoomer or an AI doomer, right? But for me, I don't think we need to have this kind of mutually exclusive attitude. I think we can kind of look at different use cases. There are really powerful and quite amazing use cases, but those very same baseline technologies can be weaponized if they're not developed responsibly with the appropriate safety measures, guardrails, and understanding from people using and developing them. So it is really about that balancing act for me. And a lot of my research over the years has been focused on mapping the evolution of AI generated content as a malicious tool.”
www.henryajder.com www.bbc.co.uk/programmes/m0017cgr www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

How to Fight for Truth & Protect Democracy in A Post-Truth World? - Highlights - LEE McINTYRE

“When AI takes over with our information sources and pollutes it to a certain point, we'll stop believing that there is any such thing as truth anymore. ‘We now live in an era in which the truth is behind a paywall and the lies are free.’ One thing people don't realize is that the goal of disinformation is not simply to get you to believe a falsehood. It's to demoralize you into giving up on the idea of truth, to polarize us around factual issues, to get us to distrust people who don't believe the same lie. And even if somebody doesn't believe the lie, it can still make them cynical. I mean, we've all had friends who don't even watch the news anymore. There's a chilling quotation from Holocaust historian Hannah Arendt about how when you always lie to someone, the consequence is not necessarily that they believe the lie, but that they begin to lose their critical faculties, that they begin to give up on the idea of truth, and so they can't judge for themselves what's true and what's false anymore. That's the scary part, the nexus between post-truth and autocracy. That's what the authoritarian wants. Not necessarily to get you to believe the lie. But to give up on truth, because when you give up on truth, then there's no blame, no accountability, and they can just assert their power. There's a connection between disinformation and denial.”
Lee McIntyre is a Research Fellow at the Center for Philosophy and History of Science at Boston University and a Senior Advisor for Public Trust in Science at the Aspen Institute. He holds a B.A. from Wesleyan University and a Ph.D. in Philosophy from the University of Michigan. He has taught philosophy at Colgate University, Boston University, Tufts Experimental College, Simmons College, and Harvard Extension School (where he received the Dean’s Letter of Commendation for Distinguished Teaching). Formerly Executive Director of the Institute for Quantitative Social Science at Harvard University, he has also served as a policy advisor to the Executive Dean of the Faculty of Arts and Sciences at Harvard and as Associate Editor in the Research Department of the Federal Reserve Bank of Boston. His books include On Disinformation and How to Talk to a Science Denier and the novels The Art of Good and Evil and The Sin Eater.
https://leemcintyrebooks.com www.penguinrandomhouse.com/books/730833/on-disinformation-by-lee-mcintyre https://mitpress.mit.edu/9780262545051/ https://leemcintyrebooks.com/books/the-art-of-good-and-evil/ https://leemcintyrebooks.com/books/the-sin-eater/ www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

On Disinformation: How to Fight for Truth & Protect Democracy in the Age of AI - LEE McINTYRE

How do we fight for truth and protect democracy in a post-truth world? How does bias affect our understanding of facts?
Lee McIntyre is a Research Fellow at the Center for Philosophy and History of Science at Boston University and a Senior Advisor for Public Trust in Science at the Aspen Institute. He holds a B.A. from Wesleyan University and a Ph.D. in Philosophy from the University of Michigan. He has taught philosophy at Colgate University, Boston University, Tufts Experimental College, Simmons College, and Harvard Extension School (where he received the Dean’s Letter of Commendation for Distinguished Teaching). Formerly Executive Director of the Institute for Quantitative Social Science at Harvard University, he has also served as a policy advisor to the Executive Dean of the Faculty of Arts and Sciences at Harvard and as Associate Editor in the Research Department of the Federal Reserve Bank of Boston. His books include On Disinformation and How to Talk to a Science Denier and the novels The Art of Good and Evil and The Sin Eater.
“When AI takes over with our information sources and pollutes it to a certain point, we'll stop believing that there is any such thing as truth anymore. ‘We now live in an era in which the truth is behind a paywall and the lies are free.’ One thing people don't realize is that the goal of disinformation is not simply to get you to believe a falsehood. It's to demoralize you into giving up on the idea of truth, to polarize us around factual issues, to get us to distrust people who don't believe the same lie. And even if somebody doesn't believe the lie, it can still make them cynical. I mean, we've all had friends who don't even watch the news anymore. There's a chilling quotation from Holocaust historian Hannah Arendt about how when you always lie to someone, the consequence is not necessarily that they believe the lie, but that they begin to lose their critical faculties, that they begin to give up on the idea of truth, and so they can't judge for themselves what's true and what's false anymore. That's the scary part, the nexus between post-truth and autocracy. That's what the authoritarian wants. Not necessarily to get you to believe the lie. But to give up on truth, because when you give up on truth, then there's no blame, no accountability, and they can just assert their power. There's a connection between disinformation and denial.”
https://leemcintyrebooks.com www.penguinrandomhouse.com/books/730833/on-disinformation-by-lee-mcintyre https://mitpress.mit.edu/9780262545051/ https://leemcintyrebooks.com/books/the-art-of-good-and-evil/ https://leemcintyrebooks.com/books/the-sin-eater/ www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

How will AI Affect Education, the Arts & Society? - Highlights - STEPHEN WOLFRAM

“Nobody, including people who worked on ChatGPT, really sort of expected this to work. It's something that we just didn't know scientifically what it would take to make something that was a fluent producer of human language. I think the big discovery is that this thing that has been sort of a proud achievement of our species, human language, is perhaps not as complicated as we thought it was. It's something that is more accessible to sort of simpler automation than we expected. And so, people have been asking me, when ChatGPT had come out, we were doing a bunch of things technologically around ChatGPT because kind of what, when ChatGPT is kind of stringing words together to make sentences, what does it do when it has to actually solve a computational problem? That's not what it does itself. It's a thing for stringing words together to make text. And so, how does it solve a computational problem? Well, like humans, the best way for it to do it is to use tools, and the best tool for many kinds of computational problems is tools that we've built. And so very early in kind of the story of ChatGPT and so on, we were figuring out how to have it be able to use the tools that we built, just like humans can use the tools that we built, to solve computational problems, to actually get sort of accurate knowledge about the world and so on. There's all these different possibilities out there. But our kind of challenge is to decide in which direction we want to go and then to let our automated systems pursue those particular directions.”
Stephen Wolfram is a computer scientist, mathematician, and theoretical physicist. He is the founder and CEO of Wolfram Research, the creator of Mathematica, Wolfram|Alpha, and the Wolfram Language. He received his PhD in theoretical physics at Caltech at the age of 20 and, in 1981, became the youngest recipient of a MacArthur Fellowship. Wolfram authored A New Kind of Science and launched the Wolfram Physics Project. He has pioneered computational thinking and has been responsible for many discoveries, inventions and innovations in science, technology and business.
www.stephenwolfram.com www.wolfram.com www.wolframalpha.com www.wolframscience.com/nks/ www.amazon.com/dp/1579550088/ref=nosim?tag=turingmachi08-20 www.wolframphysics.org www.wolfram-media.com/products/what-is-chatgpt-doing-and-why-does-it-work/ www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

What Role Do AI & Computational Language Play in Solving Real-World Problems?

How can computational language help decode the mysteries of nature and the universe? What is ChatGPT doing and why does it work? How will AI affect education, the arts and society?
Stephen Wolfram is a computer scientist, mathematician, and theoretical physicist. He is the founder and CEO of Wolfram Research, the creator of Mathematica, Wolfram|Alpha, and the Wolfram Language. He received his PhD in theoretical physics at Caltech at the age of 20 and, in 1981, became the youngest recipient of a MacArthur Fellowship. Wolfram authored A New Kind of Science and launched the Wolfram Physics Project. He has pioneered computational thinking and has been responsible for many discoveries, inventions and innovations in science, technology and business.
“Nobody, including people who worked on ChatGPT, really sort of expected this to work. It's something that we just didn't know scientifically what it would take to make something that was a fluent producer of human language. I think the big discovery is that this thing that has been sort of a proud achievement of our species, human language, is perhaps not as complicated as we thought it was. It's something that is more accessible to sort of simpler automation than we expected. And so, people have been asking me, when ChatGPT had come out, we were doing a bunch of things technologically around ChatGPT because kind of what, when ChatGPT is kind of stringing words together to make sentences, what does it do when it has to actually solve a computational problem? That's not what it does itself. It's a thing for stringing words together to make text. And so, how does it solve a computational problem? Well, like humans, the best way for it to do it is to use tools, and the best tool for many kinds of computational problems is tools that we've built. And so very early in kind of the story of ChatGPT and so on, we were figuring out how to have it be able to use the tools that we built, just like humans can use the tools that we built, to solve computational problems, to actually get sort of accurate knowledge about the world and so on. There's all these different possibilities out there. But our kind of challenge is to decide in which direction we want to go and then to let our automated systems pursue those particular directions.”
www.stephenwolfram.com www.wolfram.com www.wolframalpha.com www.wolframscience.com/nks/ www.amazon.com/dp/1579550088/ref=nosim?tag=turingmachi08-20 www.wolframphysics.org www.wolfram-media.com/products/what-is-chatgpt-doing-and-why-does-it-work/ www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

Can we have real conversations with AI? How do illusions help us make sense of the world? - Highlights - KEITH FRANKISH

“Generative AI, particularly Large Language Models, they seem to be engaging in conversation with us. We ask questions, and they reply. It seems like they're talking to us. I don't think they are. I think they're playing a game very much like a game of chess. You make a move and your chess computer makes an appropriate response to that move. It doesn't have any other interest in the game whatsoever. That's what I think Large Language Models are doing. They're just making communicative moves in this game of language that they've learned through training on vast quantities of human-produced text.”
Keith Frankish is an Honorary Professor of Philosophy at the University of Sheffield, a Visiting Research Fellow with The Open University, and an Adjunct Professor with the Brain and Mind Programme in Neurosciences at the University of Crete. Frankish mainly works in the philosophy of mind and has published widely about topics such as human consciousness and cognition. Profoundly inspired by Daniel Dennett, Frankish is best known for defending an “illusionist” view of consciousness. He is also editor of Illusionism as a Theory of Consciousness and co-edits several other volumes, including The Cambridge Handbook of Cognitive Science.
www.keithfrankish.com www.cambridge.org/core/books/cambridge-handbook-of-cognitive-science/F9996E61AF5E8C0B096EBFED57596B42 www.imprint.co.uk/product/illusionism www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

Is Consciousness an Illusion? with Philosopher KEITH FRANKISH

Is consciousness an illusion? Is it just a complex set of cognitive processes without a central, subjective experience? How can we better integrate philosophy with everyday life and the arts?
Keith Frankish is an Honorary Professor of Philosophy at the University of Sheffield, a Visiting Research Fellow with The Open University, and an Adjunct Professor with the Brain and Mind Programme in Neurosciences at the University of Crete. Frankish mainly works in the philosophy of mind and has published widely about topics such as human consciousness and cognition. Profoundly inspired by Daniel Dennett, Frankish is best known for defending an “illusionist” view of consciousness. He is also editor of Illusionism as a Theory of Consciousness and co-edits several other volumes, including The Cambridge Handbook of Cognitive Science.
“Generative AI, particularly Large Language Models, they seem to be engaging in conversation with us. We ask questions, and they reply. It seems like they're talking to us. I don't think they are. I think they're playing a game very much like a game of chess. You make a move and your chess computer makes an appropriate response to that move. It doesn't have any other interest in the game whatsoever. That's what I think Large Language Models are doing. They're just making communicative moves in this game of language that they've learned through training on vast quantities of human-produced text.”
www.keithfrankish.com www.cambridge.org/core/books/cambridge-handbook-of-cognitive-science/F9996E61AF5E8C0B096EBFED57596B42 www.imprint.co.uk/product/illusionism www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

What can AI teach us about human cognition & creativity? - Highlights - RAPHAËL MILLIÈRE

“I'd like to focus more on the immediate harms that the kinds of AI technologies we have today might pose. With language models, the kind of technology that powers ChatGPT and other chatbots, there are harms that might result from regular use of these systems, and then there are harms that might result from malicious use. Regular use would be how you and I might use ChatGPT and other chatbots to do ordinary things. There is a concern that these systems might reproduce and amplify, for example, racist or sexist biases, or spread misinformation. These systems are known to, as researchers put it, ‘hallucinate’ in some cases, making up facts or false citations. And then there are the harms from malicious use, which might result from some bad actors using the systems for nefarious purposes. That would include disinformation on a mass scale. You could imagine a bad actor using language models to automate the creation of fake news and propaganda to try to manipulate voters, for example. And this takes us into the medium-term future, because we're not quite there, but another concern would be language models providing dangerous, potentially illegal information that is not readily available on the internet for anyone to access. As they get better over time, there is a concern that in the wrong hands, these systems might become quite powerful weapons, at least indirectly, and so people have been trying to mitigate these potential harms.”
Dr. Raphaël Millière is Assistant Professor in Philosophy of AI at Macquarie University in Sydney, Australia. His research primarily explores the theoretical foundations and inner workings of AI systems based on deep learning, such as large language models. He investigates whether these systems can exhibit human-like cognitive capacities, drawing on theories and methods from cognitive science. He is also interested in how insights from studying AI might shed new light on human cognition. Ultimately, his work aims to advance our understanding of both artificial and natural intelligence.
https://raphaelmilliere.com https://researchers.mq.edu.au/en/persons/raphael-milliere www.creativeprocess.info www.oneplanetpodcast.org IG www.instagram.com/creativeprocesspodcast

How can we ensure that AI is aligned with human values? - RAPHAËL MILLIÈRE

How can we ensure that AI is aligned with human values? What can AI teach us about human cognition and creativity?
Dr. Raphaël Millière is Assistant Professor in Philosophy of AI at Macquarie University in Sydney, Australia. His research primarily explores the theoretical foundations and inner workings of AI systems based on deep learning, such as large language models. He investigates whether these systems can exhibit human-like cognitive capacities, drawing on theories and methods from cognitive science. He is also interested in how insights from studying AI might shed new light on human cognition. Ultimately, his work aims to advance our understanding of both artificial and natural intelligence.
“I'd like to focus more on the immediate harms that the kinds of AI technologies we have today might pose. With language models, the kind of technology that powers ChatGPT and other chatbots, there are harms that might result from regular use of these systems, and then there are harms that might result from malicious use. Regular use would be how you and I might use ChatGPT and other chatbots to do ordinary things. There is a concern that these systems might reproduce and amplify, for example, racist or sexist biases, or spread misinformation. These systems are known to, as researchers put it, ‘hallucinate’ in some cases, making up facts or false citations. And then there are the harms from malicious use, which might result from some bad actors using the systems for nefarious purposes. That would include disinformation on a mass scale. You could imagine a bad actor using language models to automate the creation of fake news and propaganda to try to manipulate voters, for example. And this takes us into the medium-term future, because we're not quite there, but another concern would be language models providing dangerous, potentially illegal information that is not readily available on the internet for anyone to access. As they get better over time, there is a concern that in the wrong hands, these systems might become quite powerful weapons, at least indirectly, and so people have been trying to mitigate these potential harms.”
https://raphaelmilliere.com
https://researchers.mq.edu.au/en/persons/raphael-milliere
www.creativeprocess.info
www.oneplanetpodcast.org
IG www.instagram.com/creativeprocesspodcast

Is understanding AI a bigger question than understanding the origin of the universe? - Highlights, NEIL JOHNSON

“It gets back to this core question. I just wish I was a young scientist going into this because that's the question to answer: Why AI comes out with what it does. That's the burning question. It's like it's bigger than the origin of the universe to me as a scientist, and here's the reason why. The origin of the universe, it happened. That's why we're here. It's almost like a historical question asking why it happened. The AI future is not a historical question. It's a now and future question. I'm a huge optimist for AI, actually. I see it as part of that process of climbing its own mountain. It could do wonders for so many areas of science, medicine. When the car came out, the car initially is a disaster. But you fast forward, and it was the key to so many advances in society. I think it's exactly the same as AI. The big challenge is to understand why it works. AI existed for years, but it was useless. Nothing useful, nothing useful, nothing useful. And then maybe last year or something, now it's really useful. There seemed to be some kind of jump in its ability, almost like a shockwave. We're trying to develop an understanding of how AI operates in terms of these shockwave jumps. Revealing how AI works will help society understand what it can and can't do and therefore remove some of this dark fear of being taken over. If you don't understand how AI works, how can you govern it? To get effective governance, you need to understand how AI works because otherwise you don't know what you're going to regulate.”
How can physics help solve messy, real-world problems? How can we embrace the possibilities of AI while limiting existential risk and abuse by bad actors?
Neil Johnson is a physics professor at George Washington University. His new initiative in Complexity and Data Science at the Dynamic Online Networks Lab combines cross-disciplinary fundamental research with data science to attack complex real-world problems. His research interests lie in the broad area of Complex Systems and ‘many-body’ out-of-equilibrium systems of collections of objects, ranging from crowds of particles to crowds of people and from environments as distinct as quantum information processing in nanostructures to the online world of collective behavior on social media.
https://physics.columbian.gwu.edu/neil-johnson
https://donlab.columbian.gwu.edu
www.creativeprocess.info
www.oneplanetpodcast.org
IG www.instagram.com/creativeprocesspodcast

How can physics help solve real world problems? - NEIL JOHNSON, Head of Dynamic Online Networks Lab

How can physics help solve messy, real-world problems? How can we embrace the possibilities of AI while limiting existential risk and abuse by bad actors?
Neil Johnson is a physics professor at George Washington University. His new initiative in Complexity and Data Science at the Dynamic Online Networks Lab combines cross-disciplinary fundamental research with data science to attack complex real-world problems. His research interests lie in the broad area of Complex Systems and ‘many-body’ out-of-equilibrium systems of collections of objects, ranging from crowds of particles to crowds of people and from environments as distinct as quantum information processing in nanostructures to the online world of collective behavior on social media.
“It gets back to this core question. I just wish I was a young scientist going into this because that's the question to answer: Why AI comes out with what it does. That's the burning question. It's like it's bigger than the origin of the universe to me as a scientist, and here's the reason why. The origin of the universe, it happened. That's why we're here. It's almost like a historical question asking why it happened. The AI future is not a historical question. It's a now and future question. I'm a huge optimist for AI, actually. I see it as part of that process of climbing its own mountain. It could do wonders for so many areas of science, medicine. When the car came out, the car initially is a disaster. But you fast forward, and it was the key to so many advances in society. I think it's exactly the same as AI. The big challenge is to understand why it works. AI existed for years, but it was useless. Nothing useful, nothing useful, nothing useful. And then maybe last year or something, now it's really useful. There seemed to be some kind of jump in its ability, almost like a shockwave. We're trying to develop an understanding of how AI operates in terms of these shockwave jumps. Revealing how AI works will help society understand what it can and can't do and therefore remove some of this dark fear of being taken over. If you don't understand how AI works, how can you govern it? To get effective governance, you need to understand how AI works because otherwise you don't know what you're going to regulate.”
https://physics.columbian.gwu.edu/neil-johnson
https://donlab.columbian.gwu.edu
www.creativeprocess.info
www.oneplanetpodcast.org
IG www.instagram.com/creativeprocesspodcast

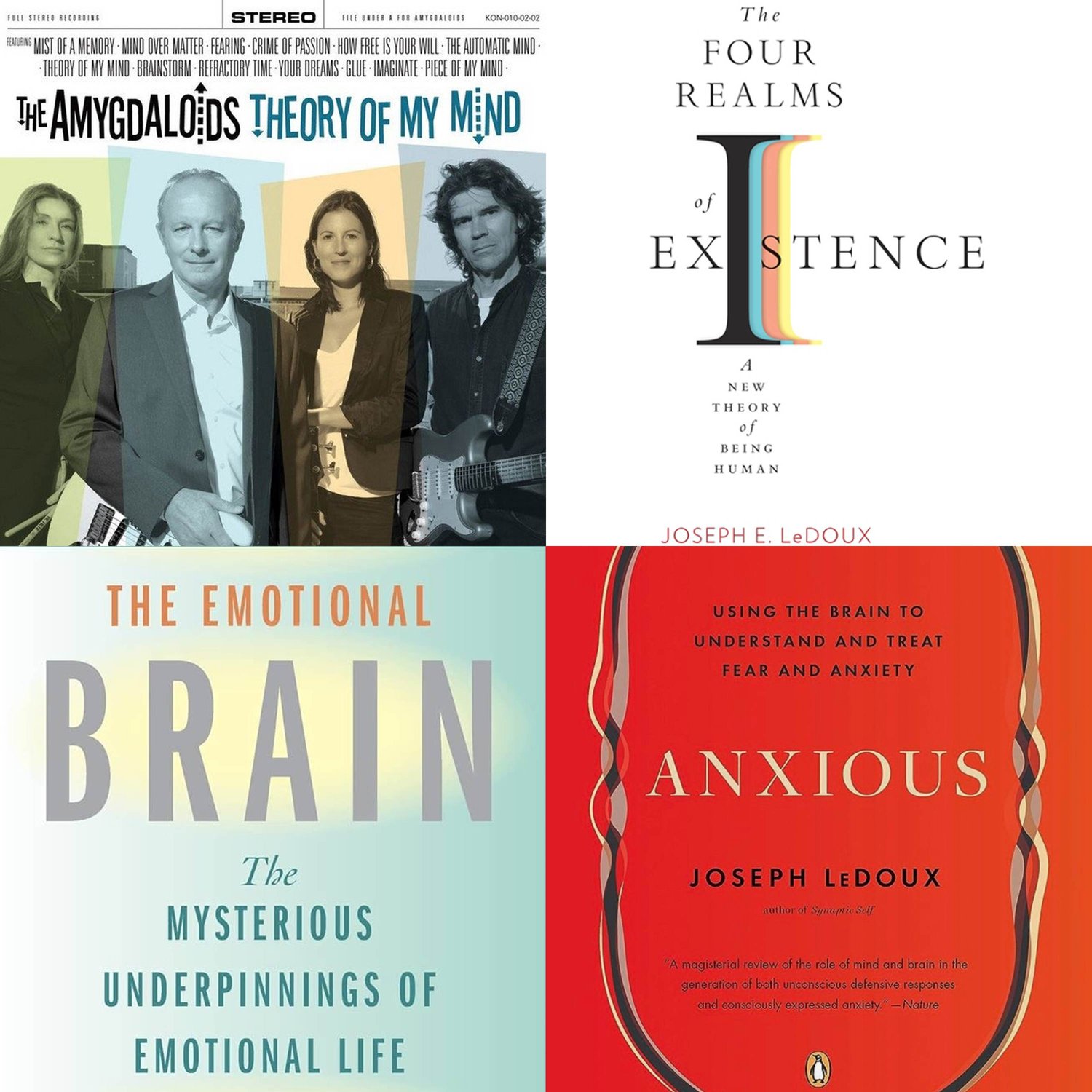

Exploring Consciousness, AI & Creativity with JOSEPH LEDOUX - Highlights

“We've got four billion years of biological accidents that created all of the intricate aspects of everything about life, including consciousness. And it's about what's going on in each of those cells at the time that allows it to be connected to everything else and for the information to be understood as it's being exchanged between those things with their multifaceted, deep, complex processing.”
Joseph LeDoux is a Professor of Neural Science at New York University (NYU) and was Director of the Emotional Brain Institute. His research primarily focuses on survival circuits, including their impacts on emotions, such as fear and anxiety. He has written a number of books in this field, including The Four Realms of Existence: A New Theory of Being Human, The Emotional Brain, Synaptic Self, Anxious, and The Deep History of Ourselves. LeDoux is also the lead singer and songwriter of the band The Amygdaloids.
www.joseph-ledoux.com
www.cns.nyu.edu/ebi
https://amygdaloids.net
www.hup.harvard.edu/books/9780674261259
www.creativeprocess.info
www.oneplanetpodcast.org
IG www.instagram.com/creativeprocesspodcast

How does the brain process emotions and music? JOSEPH LEDOUX - Neuroscientist, Author, Musician

How does the brain process emotions? How are emotional memories formed and stored in the brain, and how do they influence behavior, perception, and decision-making? How does music help us understand our emotions, memories, and the nature of consciousness?
Joseph LeDoux is a Professor of Neural Science at New York University (NYU) and was Director of the Emotional Brain Institute. His research primarily focuses on survival circuits, including their impacts on emotions, such as fear and anxiety. He has written a number of books in this field, including The Four Realms of Existence: A New Theory of Being Human, The Emotional Brain, Synaptic Self, Anxious, and The Deep History of Ourselves. LeDoux is also the lead singer and songwriter of the band The Amygdaloids.
“We've got four billion years of biological accidents that created all of the intricate aspects of everything about life, including consciousness. And it's about what's going on in each of those cells at the time that allows it to be connected to everything else and for the information to be understood as it's being exchanged between those things with their multifaceted, deep, complex processing.”
www.joseph-ledoux.com
www.cns.nyu.edu/ebi
https://amygdaloids.net
www.hup.harvard.edu/books/9780674261259
www.creativeprocess.info
www.oneplanetpodcast.org
IG www.instagram.com/creativeprocesspodcast
Music courtesy of Joseph LeDoux

Emotional Intelligence in the Age of AI - Highlights - DANIEL GOLEMAN