Show Notes

172: Transformers and Large Language Models

Intro topic: Is WFH actually WFC?

News/Links:

- Falsehoods Junior Developers Believe about Becoming Senior

- Pure Pursuit

  - Tutorial with Python code: https://wiki.purduesigbots.com/software/control-algorithms/basic-pure-pursuit

  - Video example: https://www.youtube.com/watch?v=qYR7mmcwT2w

- PID Without a PhD

- Google releases Gemma

Book of the Show

- Patrick: The Eye of the World by Robert Jordan (Wheel of Time)

- Jason: How to Make a Video Game All By Yourself

Patreon Plug https://www.patreon.com/programmingthrowdown?ty=h

Tool of the Show

- Patrick: Stadia Controller Wifi to Bluetooth Unlock

- Jason: FUSE and SSHFS

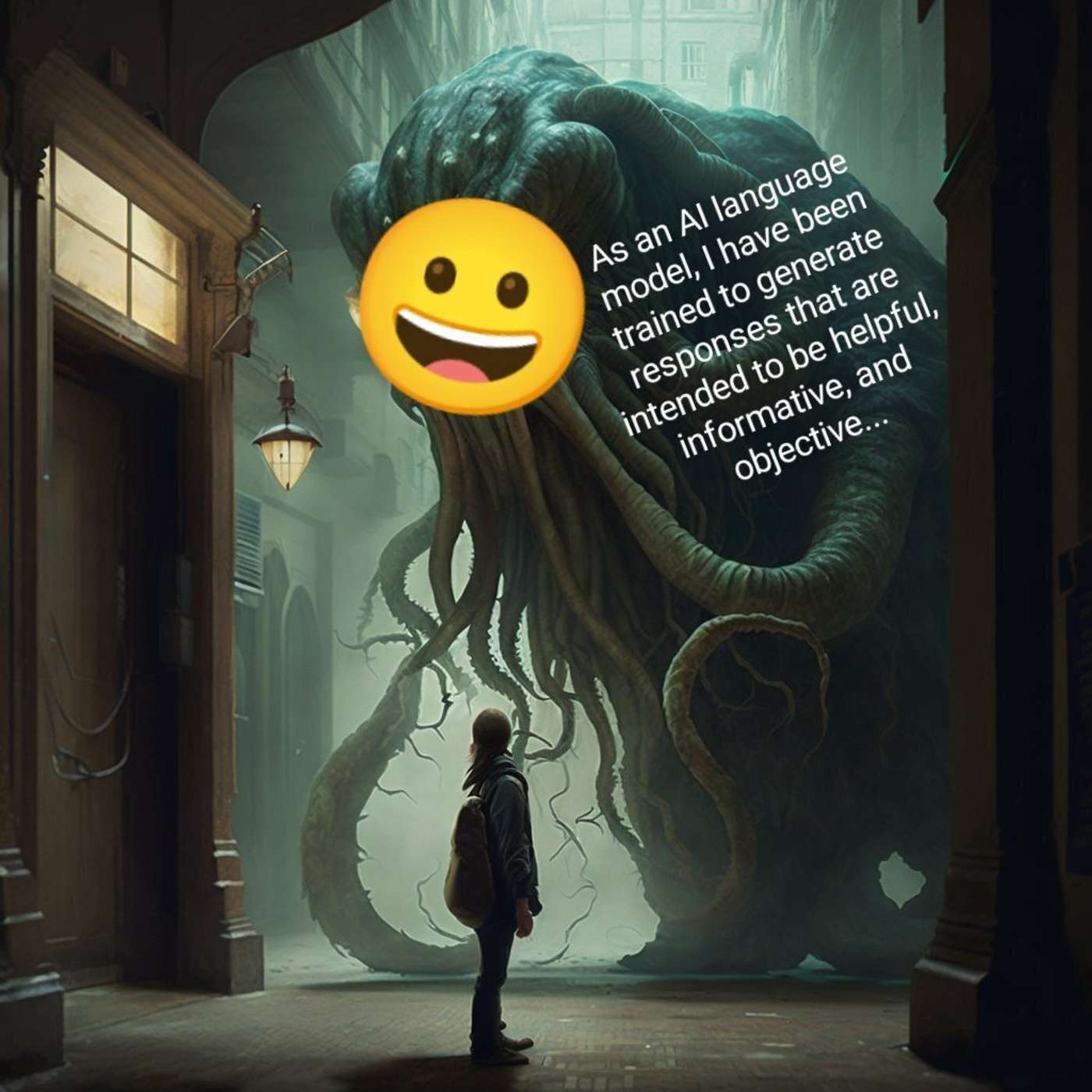

Topic: Transformers and Large Language Models

- How neural networks store information

- Latent variables

- Transformers

- Encoders & Decoders

- Attention Layers

- History

- RNN

- Vanishing Gradient Problem

- LSTM

- Short term (gradient explodes), Long term (gradient vanishes)

- Differentiable algebra

- Key-Query-Value

- Self Attention

- Self-Supervised Learning & Forward Models

- Human Feedback

- Reinforcement Learning from Human Feedback

- Direct Preference Optimization (Pairwise Ranking)
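
The Key-Query-Value and Self Attention items in the outline above refer to what is usually called scaled dot-product attention. Here is a minimal sketch in plain Python, assuming a single attention head, no masking, and made-up projection matrices. The names `self_attention`, `w_q`, `w_k`, and `w_v` are illustrative and do not come from the episode.

```python
import math


def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    # Plain nested-list matrix product: (n x m) times (m x p) -> (n x p).
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def self_attention(x, w_q, w_k, w_v):
    # Project every token embedding into query, key, and value vectors.
    q = matmul(x, w_q)
    k = matmul(x, w_k)
    v = matmul(x, w_v)
    d_k = len(k[0])
    # Score each query against every key, scaled by sqrt(d_k).
    scores = [
        [sum(qi * ki for qi, ki in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        for q_row in q
    ]
    # Each row of weights is a probability distribution over the tokens.
    weights = [softmax(row) for row in scores]
    # The output for each token is a weighted average of the value vectors.
    return matmul(weights, v), weights


# Hypothetical toy input: three tokens with two-dimensional embeddings,
# and identity projections so the numbers stay easy to follow.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
output, weights = self_attention(tokens, identity, identity, identity)
for row in weights:
    print([round(w, 3) for w in row])
```

Because every step is an ordinary sum, product, or exponential, the whole lookup is differentiable, which is one way to read the "Differentiable algebra" item. Real transformer layers learn the projection matrices and typically run several heads in parallel.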
