Learning Machines 101

85 episodes — Page 2 of 2

S1 Ep 36 LM101-036: How to Predict the Future from the Distant Past using Recurrent Neural Networks

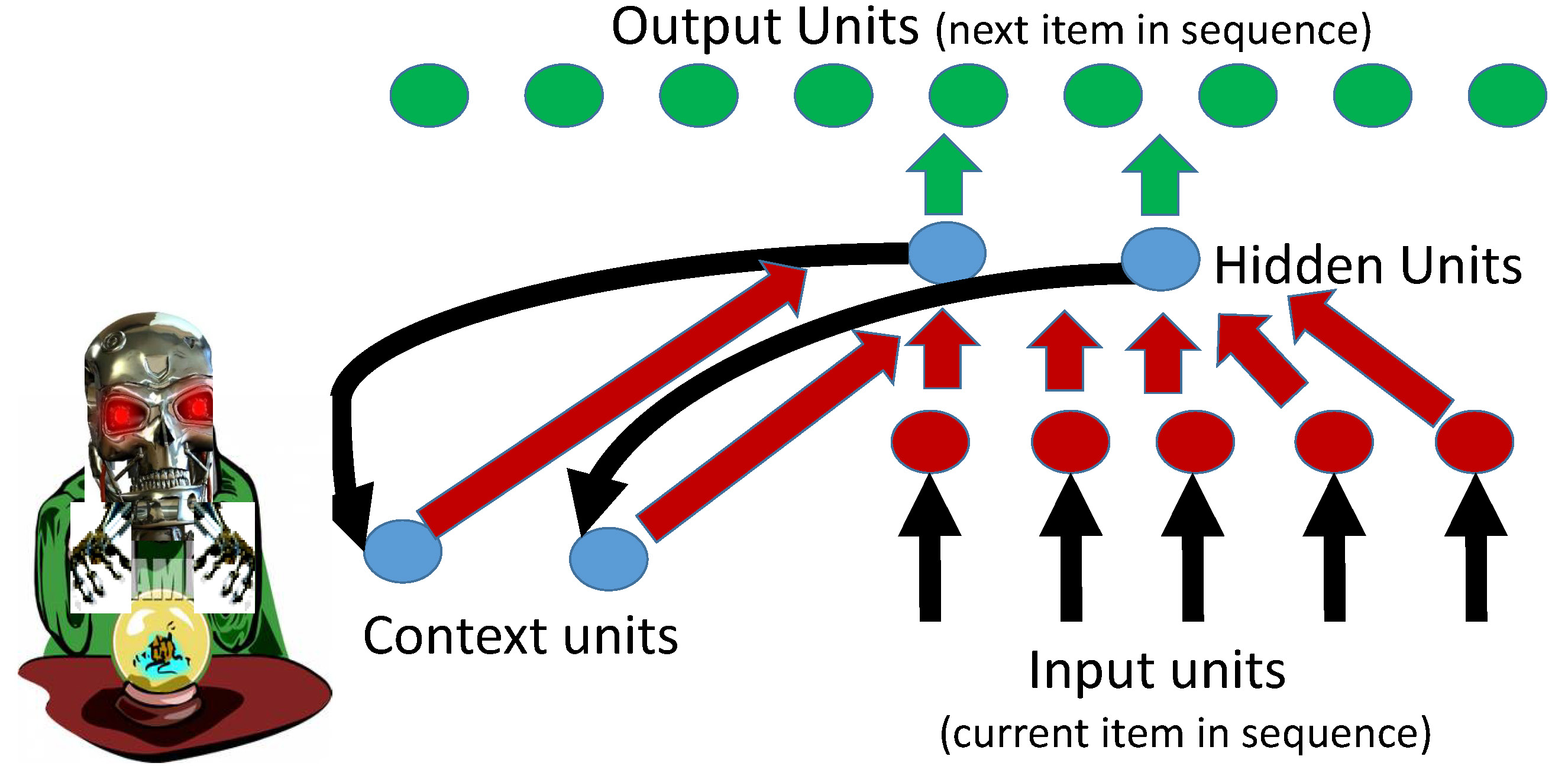

In this episode, we discuss the problem of predicting the future from not only recent events but also from the distant past using Recurrent Neural Networks (RNNs). An example RNN is described which learns to label images with simple sentences. A learning machine capable of generating even simple descriptions of images such as these could be used to help the blind interpret images, provide assistance to children and adults in language acquisition, support internet search of content in images, and enhance search engine optimization for websites containing unlabeled images. Both tutorial notes and advanced implementational notes for RNNs can be found in the show notes at: www.learningmachines101.com.

S1 Ep 35 LM101-035: What is a Neural Network and What is a Hot Dog?

In this episode, we address the important questions of “What is a neural network?” and “What is a hot dog?” by discussing human brains, neural networks that learn to play Atari video games, and rat brain neural networks. Check out: www.learningmachines101.com for videos of a neural network that learns to play Atari video games and transcripts of this podcast! Also follow us on Twitter at: @lm101talk. See you soon!

S1 Ep 34 LM101-034: How to Use Nonlinear Machine Learning Software to Make Predictions (Feedforward Perceptrons with Radial Basis Functions) [Rerun]

Welcome to the 34th podcast in the podcast series Learning Machines 101 titled "How to Use Nonlinear Machine Learning Software to Make Predictions". This particular podcast is a rerun of Episode 20 and describes step by step how to download free software which can be used to make predictions using a feedforward artificial neural network whose hidden units are radial basis functions. This is essentially a nonlinear regression modeling problem. Check out: www.learningmachines101.com and follow us on Twitter: @lm101talk

S1 Ep 33 LM101-033: How to Use Linear Machine Learning Software to Make Predictions (Linear Regression Software) [Rerun]

In this episode we will explain how to download and use free machine learning software which can be downloaded from the website: www.learningmachines101.com. The software can be used to make predictions using your own data sets. Although we will continue to focus on critical theoretical concepts in machine learning in future episodes, it is always useful to actually experience how these concepts work in practice. This is a rerun of Episode 13.

S1 Ep 32 LM101-032: How To Build a Support Vector Machine to Classify Patterns

In this 32nd episode of Learning Machines 101, we introduce the concept of a Support Vector Machine. We explain how to estimate the parameters of such machines to classify a pattern vector as a member of one of two categories, as well as how to identify special pattern vectors called “support vectors” which are important for characterizing the Support Vector Machine decision boundary. The relationship between Support Vector Machine parameter estimation and logistic regression parameter estimation is also discussed. Check out this and other episodes as well as supplemental references to these episodes at the website: www.learningmachines101.com. Also follow us on Twitter using the handle: @lm101talk.

S1 Ep 31 LM101-031: How to Analyze and Design Learning Rules using Gradient Descent Methods (RERUN)

In this rerun of Episode 16, we introduce the important concept of gradient descent which is the fundamental principle underlying learning mechanisms in a wide range of machine learning algorithms. Check out the transcripts of this episode and related references and software at: www.learningmachines101.com !!!

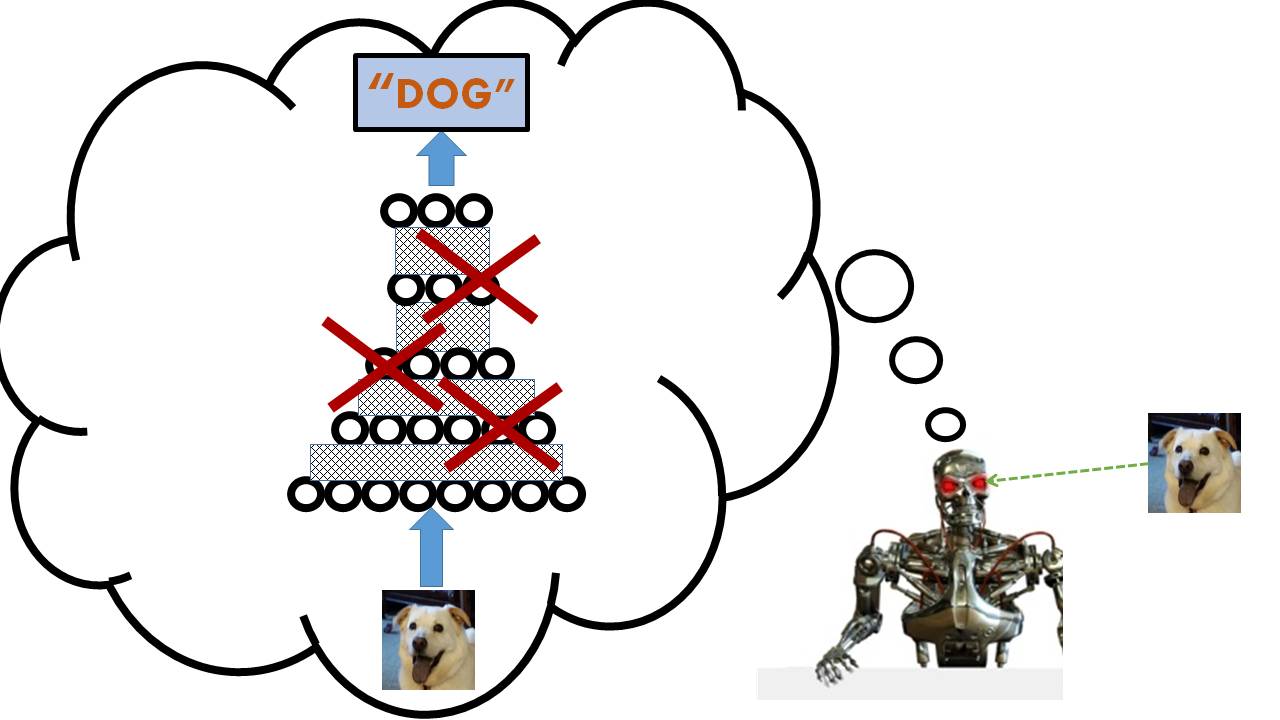

S1 Ep 30 LM101-030: How to Improve Deep Learning Performance with Artificial Brain Damage (Dropout and Model Averaging)

Deep learning machine technology has rapidly developed over the past five years due in part to a variety of factors such as: better technology, convolutional net algorithms, rectified linear units, and a relatively new learning strategy called "dropout" in which hidden unit feature detectors are temporarily deleted during the learning process. This episode introduces and discusses the concept of "dropout" to support deep learning performance and makes connections of the "dropout" concept to concepts of regularization and model averaging. For more details and background references, check out: www.learningmachines101.com !
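To make the idea of temporarily deleting hidden units a little more concrete, here is a minimal sketch in plain Python. It is not taken from the episode: the function name `apply_dropout`, the example activations, and the choice to rescale the surviving units by 1 / (1 - drop_prob) (often called "inverted dropout") are all assumptions made for illustration.

```python
import random


def apply_dropout(activations, drop_prob, rng):
    """Zero each hidden-unit activation with probability drop_prob.

    Surviving activations are rescaled by 1 / (1 - drop_prob) so that the
    expected value of each unit is unchanged (an assumption of this sketch).
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    keep_scale = 1.0 / (1.0 - drop_prob)
    return [0.0 if rng.random() < drop_prob else a * keep_scale
            for a in activations]


# Hypothetical hidden-unit activations for one training example.
hidden = [0.5, 1.2, 0.0, 2.0, 0.7]
rng = random.Random(0)  # fixed seed so the sketch is repeatable
dropped = apply_dropout(hidden, drop_prob=0.5, rng=rng)
```

A fresh random mask would be drawn for every training example, while at prediction time no units are dropped. Under these assumptions, the trained network behaves roughly like an average over the many "thinned" networks seen during learning.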

S1 Ep 29 LM101-029: How to Modernize Deep Learning with Rectilinear units, Convolutional Nets, and Max-Pooling

This podcast discusses talks, papers, and ideas presented at the recent International Conference on Learning Representations 2015, which was followed by the Artificial Intelligence and Statistics 2015 Conference in San Diego. Specifically, techniques shared by many successful deep learning algorithms, such as rectified linear units (the "rectilinear units" of the episode title), convolutional filters, and max-pooling, are discussed. For more details please visit our website at: www.learningmachines101.com!

S1 Ep 28 LM101-028: How to Evaluate the Ability to Generalize from Experience (Cross-Validation Methods) [RERUN]

This rerun of an earlier episode of Learning Machines 101 discusses the problem of how to evaluate the ability of a learning machine to make generalizations and construct abstractions given that the learning machine is provided with only a finite collection of experiences. Check out: www.learningmachines101.com to obtain transcripts of this podcast and download free machine learning software!

S1 Ep 27 LM101-027: How to Learn About Rare and Unseen Events (Smoothing Probabilistic Laws) [RERUN]

In this episode of Learning Machines 101 we discuss the design of statistical learning machines which can make inferences about rare and unseen events using prior knowledge. Check out: www.learningmachines101.com to obtain transcripts of this podcast and download free machine learning software!

S1 Ep 26 LM101-026: How to Learn Statistical Regularities (Rerun)

In this rerun of Episode 10, we discuss fundamental principles of learning in statistical environments including the design of learning machines that can use prior knowledge to facilitate and guide the learning of statistical regularities. The topics of ML (Maximum Likelihood) and MAP (Maximum A Posteriori) estimation are discussed in the context of the nature versus nurture problem. Check out: www.learningmachines101.com to obtain transcripts of this podcast and access to free machine learning software!
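As a small illustration of the difference between ML and MAP estimation, the sketch below estimates the probability that a coin lands heads. The coin-flipping setup, the Beta prior, and the function names `ml_estimate` and `map_estimate` are assumptions chosen for this example and are not taken from the episode.

```python
def ml_estimate(heads, flips):
    """Maximum likelihood estimate: the observed relative frequency."""
    return heads / flips


def map_estimate(heads, flips, prior_a, prior_b):
    """MAP estimate under an assumed Beta(prior_a, prior_b) prior.

    This is the mode of the Beta posterior; it is only valid when
    heads + prior_a > 1 and (flips - heads) + prior_b > 1.
    """
    return (heads + prior_a - 1) / (flips + prior_a + prior_b - 2)


# Three flips, all heads: "nurture" (the data) says the coin always lands heads.
print(ml_estimate(3, 3))
# A Beta(2, 2) prior ("nature") pulls the estimate back toward 0.5.
print(map_estimate(3, 3, prior_a=2, prior_b=2))
```

With a flat Beta(1, 1) prior the two estimates coincide, which is one way to see ML estimation as MAP estimation without prior knowledge.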

S1 Ep 25 LM101-025: How to Build a Lunar Lander Autopilot Learning Machine

In this episode we consider the problem of learning when the actions of the learning machine can alter the characteristics of the learning machine’s statistical environment. We illustrate the solution to this problem by designing an autopilot for a lunar lander module that learns from its experiences! Check out: www.learningmachines101.com to obtain transcripts of this podcast and download free machine learning software!

S1 Ep 24 LM101-024: How to Use Genetic Algorithms to Breed Learning Machines

In this episode we introduce the concept of learning machines that can self-evolve using simulated natural evolution into more intelligent machines using Monte Carlo Markov Chain Genetic Algorithms. Check out: www.learningmachines101.com to obtain transcripts of this podcast and download free machine learning software!

S1 Ep 23 LM101-023: How to Build a Deep Learning Machine

Recently, there has been a lot of discussion and controversy over the currently hot topic of “deep learning”!! Deep Learning technology has made real and important fundamental contributions to the development of machine learning algorithms. Learn more about the essential ideas of "Deep Learning" in Episode 23 of "Learning Machines 101". Check us out at our official website: www.learningmachines101.com !

S1 Ep 22 LM101-022: How to Learn to Solve Large Constraint Satisfaction Problems

In this episode we discuss how to learn to solve constraint satisfaction inference problems. The goal of the inference process is to infer the most probable values for unobservable variables. These constraints, however, can be learned from experience. At the end of the episode, we discuss one (unproven) theory from the field of neuroscience that our "dreams" are actually neural simulations of variations of events we have experienced during the day and "unlearning" of these dreams helps us to organize our memory! Visit us at: www.learningmachines101.com to obtain additional references, make suggestions regarding topics for future podcast episodes by joining the learning machines 101 community, and download free machine learning software!

S1 Ep 21 LM101-021: How to Solve Large Complex Constraint Satisfaction Problems (Monte Carlo Markov Chain)

We discuss how to solve constraint satisfaction inference problems where knowledge is represented as a large unordered collection of complicated probabilistic constraints among a collection of variables. The goal of the inference process is to infer the most probable values of the unobservable variables given the observable variables. Please visit: www.learningmachines101.com to obtain transcripts of this podcast and download free machine learning software!

S1 Ep 20 LM101-020: How to Use Nonlinear Machine Learning Software to Make Predictions

In this episode we introduce some advanced nonlinear machine learning software which is more complex and powerful than the linear machine learning software introduced in Episode 13. Specifically, the software implements a multilayer nonlinear learning machine whose inputs feed into hidden units, which in turn feed into output units. Such a machine has the potential to learn a much larger class of statistical environments. Download the free software from: www.learningmachines101.com now!

S1 Ep 19 LM101-019 (Rerun): How to Enhance Intelligence with a Robotic Body (Embodied Cognition)

Embodied cognition emphasizes that the design of complex artificially intelligent systems may be both vastly simplified and vastly enhanced if we view the robotic bodies of artificially intelligent systems as important contributors to intelligent behavior. Check out: www.learningmachines101.com to obtain transcripts of this podcast and download free machine learning software!

S1 Ep 18 LM101-018: Can Computers Think? A Mathematician's Response (Rerun)

In this episode, we explore the question of what computers can do as well as what computers can’t do using the Turing Machine argument. Specifically, we discuss the computational limits of computers and raise the question of whether such limits pertain to biological brains and other non-standard computing machines. This is a rerun of Episode 4. We continue new podcasts in January 2015! For a transcript of this episode, please visit our website: www.learningmachines101.com!

S1 Ep 17 LM101-017: How to Decide if a Machine is Artificially Intelligent (Rerun)

In this episode we discuss the Turing Test for Artificial Intelligence, which is designed to determine if the behavior of a computer is indistinguishable from the behavior of a thinking human being. The chatbot A.L.I.C.E. (Artificial Linguistic Internet Computer Entity) is interviewed and basic concepts of AIML (Artificial Intelligence Markup Language) are introduced.

S1 Ep 16 LM101-016: How to Analyze and Design Learning Rules using Gradient Descent Methods

In this episode we introduce the concept of gradient descent which is the fundamental principle underlying learning in the majority of machine learning algorithms. For more podcast episodes on the topic of machine learning and free machine learning software, please visit us at: www.learningmachines101.com !!
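For listeners who like to see the idea in code, here is a minimal gradient descent sketch. The objective function f(x) = (x - 3)^2, the learning rate, the step count, and the function name `gradient_descent` are all assumptions picked for illustration rather than anything specified in the episode.

```python
def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to reduce the objective."""
    x = start
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x


# Assumed example objective: f(x) = (x - 3)^2, whose gradient is 2 * (x - 3).
minimum = gradient_descent(lambda x: 2.0 * (x - 3.0), start=0.0)
print(minimum)  # very close to 3, the minimizer of f
```

A learning machine would apply the same kind of update to every parameter at once, with the objective measuring prediction error on the training data.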

S1 Ep 15 LM101-015: How to Build a Machine that Can Learn Anything (The Perceptron)

In this 15th episode of Learning Machines 101, we discuss the problem of how to build a machine that can learn any given pattern of inputs and generate any desired pattern of outputs when it is possible to do so! It is assumed that the input patterns consist of zeros and ones indicating possibly the presence or absence of a feature. Check out: www.learningmachines101.com to obtain transcripts of this podcast!
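The following sketch shows one classical way such a machine can be trained on patterns of zeros and ones: a single threshold unit updated with the perceptron learning rule. The logical AND task, the learning rate, and the names `predict` and `train_perceptron` are assumptions made for this example. The perceptron discussed in the episode may be organized differently.

```python
def predict(weights, bias, inputs):
    """Threshold unit: output 1 if the weighted sum is positive, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train_perceptron(examples, epochs=100, learning_rate=1.0):
    """Perceptron rule: nudge the weights only when a prediction is wrong."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, target in examples:
            error = target - predict(weights, bias, inputs)
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if mistakes == 0:  # every pattern is already classified correctly
            break
    return weights, bias


# Assumed toy task: learn logical AND from binary feature patterns.
and_examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_examples)
```

The phrase "when it is possible to do so" matters here: a single threshold unit like this one can only learn patterns that are linearly separable.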

S1 Ep 14 LM101-014: How to Build a Machine that Can Do Anything (Function Approximation)

In this episode, we discuss the problem of how to build a machine that can do anything! Or more specifically, given a set of input patterns to the machine and a set of desired output patterns for those input patterns, we would like to build a machine that can generate the specified output pattern for a given input pattern. This problem may be interpreted as an example of solving a supervised learning problem. Check out the show notes at: www.learningmachines101.com for a transcript of this show and free machine learning software!

S1 Ep 13 LM101-013: How to Use Linear Machine Learning Software to Make Predictions (Linear Regression Software)

Hello everyone! Welcome to the thirteenth podcast in the podcast series Learning Machines 101. In this series of podcasts my goal is to discuss important concepts of artificial intelligence and machine learning in hopefully an entertaining and educational manner. In this episode we will explain how to download and use free machine learning software which can be downloaded from the website: www.learningmachines101.com. Although we will continue to focus on critical theoretical concepts in machine learning in future episodes, it is always useful to actually experience how these concepts work in practice. For these reasons, from time to time I will include special podcasts like this one which focus on very practical issues associated with downloading and installing machine learning software on your computer. If you follow these instructions, by the end of this episode you will have installed one of the simplest (yet most widely used) machine learning algorithms on your computer. You can then use the software to make virtually any kind of prediction you like. However, some of these predictions will be good predictions, while other predictions will be poor predictions. For this reason, following the discussion in Episode 12 which was concerned with the problem of evaluating generalization performance, we will also discuss how to evaluate what your learning machine has “memorized” and additionally evaluate the ability of your learning machine to “generalize” and make predictions about things that it has never seen before.

S1 Ep 12 LM101-012: How to Evaluate the Ability to Generalize from Experience (Cross-Validation Methods)

In this episode we discuss the problem of how to evaluate the ability of a learning machine to make generalizations and construct abstractions given that the learning machine is provided with only a finite collection of experiences.
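One common cross-validation scheme is k-fold cross-validation, sketched below. The function name `k_fold_splits` and the simple interleaved way of assigning examples to folds are assumptions for illustration. The episode may describe other variants.

```python
def k_fold_splits(n_examples, k):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation.

    Example i is assigned to fold i % k, so every example is held out for
    testing exactly once.
    """
    indices = list(range(n_examples))
    folds = [indices[i::k] for i in range(k)]
    for held_out in range(k):
        test_idx = folds[held_out]
        train_idx = [j for f, fold in enumerate(folds) if f != held_out
                     for j in fold]
        yield train_idx, test_idx


# Split 10 experiences into 3 folds: train on two folds, test on the third.
for train_idx, test_idx in k_fold_splits(10, 3):
    print("train:", train_idx, "test:", test_idx)
```

Performance on the training indices indicates what the machine has memorized, while performance on the held-out indices estimates how well it generalizes to experiences it has never seen.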

S1 Ep 8 LM101-008: How to Represent Beliefs Using Probability Theory

Episode Summary: This episode focusses upon how an intelligent system can represent beliefs about its environment using fuzzy measure theory. Probability theory is introduced as a special case of fuzzy measure theory which is consistent with classical laws of logical inference.

S1 Ep 11 LM101-011: How to Learn About Rare and Unseen Events (Smoothing Probabilistic Laws)

Learning Machines 101 - A Gentle Introduction to Artificial Intelligence and Machine Learning. Episode Summary: Today we address a strange yet fundamentally important question. How do you predict the probability of something you have never seen? Or, in other words, how can we accurately estimate the probability of rare events? Show Notes: Hello everyone! Welcome to the eleventh podcast in the podcast series Learning Machines 101. In this series of podcasts…
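One simple way to give unseen events a nonzero probability is additive (add-one, or Laplace) smoothing, sketched below. This is offered only as an example of the general idea. The weather data, the `pseudo_count` parameter, and the function name `smoothed_probabilities` are assumptions and are not taken from the episode.

```python
from collections import Counter


def smoothed_probabilities(observations, vocabulary, pseudo_count=1.0):
    """Estimate event probabilities with additive smoothing.

    Every event in the vocabulary receives pseudo_count imaginary extra
    observations, so events that were never seen still get some probability.
    Assumes every observation belongs to the vocabulary.
    """
    counts = Counter(observations)
    total = len(observations) + pseudo_count * len(vocabulary)
    return {event: (counts[event] + pseudo_count) / total
            for event in vocabulary}


# Hypothetical data: "snow" is possible but has never been observed.
probs = smoothed_probabilities(["sun", "sun", "rain"], ["sun", "rain", "snow"])
print(probs)
```

The pseudo-counts act as a crude form of prior knowledge: they encode the belief that every listed event can happen, even before any data has been seen.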

S1 Ep 10 LM101-010: How to Learn Statistical Regularities (MAP and maximum likelihood estimation)

Episode Summary: In this podcast episode, we discuss fundamental principles of learning in statistical environments including the design of learning machines that can use prior knowledge to facilitate and guide the learning of statistical regularities. Show Notes: Hello everyone! Welcome to the tenth podcast in the podcast series Learning Machines 101. In this series of podcasts my goal…

S1 Ep 9 LM101-009: How to Enhance Intelligence with a Robotic Body (Embodied Cognition)

Episode Summary: Embodied cognition emphasizes that the design of complex artificially intelligent systems may be both vastly simplified and vastly enhanced if we view the robotic bodies of artificially intelligent systems as important contributors to intelligent behavior. Show Notes: Hello everyone! Welcome to the ninth podcast in the podcast series Learning Machines 101. In this series of podcasts my…

S1 Ep 7 LM101-007: How to Reason About Uncertain Events using Fuzzy Set Theory and Fuzzy Measure Theory

Episode Summary: In real life, there is no certainty. There are always exceptions. In this episode, two methods are discussed for making inferences in uncertain environments. In fuzzy set theory, a smart machine has certain beliefs about imprecisely defined concepts. In fuzzy measure theory, a smart machine has beliefs about precisely defined concepts but some beliefs are stronger…

S1 Ep 6 LM101-006: How to Interpret Turing Test Results

Episode Summary: In this episode, we briefly review the concept of the Turing Test for Artificial Intelligence (AI) which states that if a computer's behavior is indistinguishable from that of the behavior of a thinking human being, then the computer should be called “artificially intelligent”. Some objections to this definition of artificial intelligence are introduced and discussed. At…

S1 Ep 5 LM101-005: How to Decide if a Machine is Artificially Intelligent (The Turing Test)

Episode Summary: In this episode we discuss the Turing Test for Artificial Intelligence, which is designed to determine if the behavior of a computer is indistinguishable from the behavior of a thinking human being. The chatbot A.L.I.C.E. (Artificial Linguistic Internet Computer Entity) is interviewed and basic concepts of AIML (Artificial Intelligence Markup Language) are introduced. Show Notes: Hello everyone! …

S1 Ep 4 LM101-004: Can computers think? A mathematician's response

Episode Summary: In this episode, we explore the question of what computers can do as well as what computers can't do using the Turing Machine argument. Specifically, we discuss the computational limits of computers and raise the question of whether such limits pertain to biological brains and other non-standard computing machines. Show Notes: Hello everyone! Welcome to the…

S1 Ep 3 LM101-003: How to Represent Knowledge using Logical Rules

Episode Summary: In this episode we will learn how to use “rules” to represent knowledge. We discuss how this works in practice and we explain how these ideas are implemented in a special architecture called the production system. The challenges of representing knowledge using rules are also discussed. Specifically, these challenges include: issues of feature representation, having an…

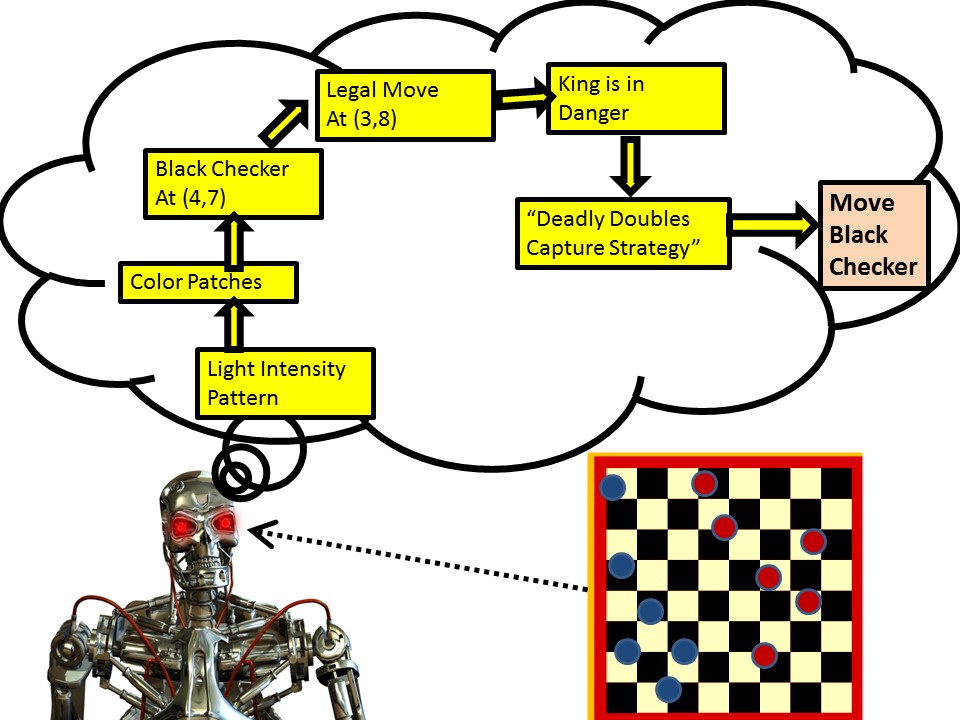

S1 Ep 2 LM101-002: How to Build a Machine that Learns to Play Checkers

Episode Summary: In this episode, we explain how to build a machine that learns to play checkers. The solution to this problem involves several key ideas which are fundamental to building systems which are artificially intelligent. Show Notes: Hello everyone! Welcome to the second podcast in the podcast series Learning Machines 101. In this series of podcasts my…