Show overview

Disrupt Consciousness has been publishing since 2024, and across the 2 years since has built a catalogue of 44 episodes. That works out to roughly 6 hours of audio in total. Releases follow a fortnightly cadence.

Episodes typically run under ten minutes (most land between 3 min and 11 min), with run-times ranging widely across the catalogue. None of the episodes are flagged explicit by the publisher. It is catalogued as an English-language Technology show.

There hasn’t been a new episode in the last ninety days; the most recent episode landed 4 months ago. The busiest year was 2025, with 29 episodes published. Published by Roel Smelt.

From the publisher

Humanity stands on the brink of multiple technology-driven disruptions that will not only preserve consciousness but also enable us to explore and elevate it, guiding us toward deeper understanding and enlightenment. >> roelsmelt.com

Latest Episodes


The Inevitable Ignition: Why the Age of Scarcity is Dead

We are currently living through the most significant transition in human history since the invention of agriculture. For ten thousand years, the human experience has been defined by the struggle for resources. Our wars, our political systems, and even our deepest psychological archetypes (the hunter, the hoarder, the competitor) were forged in the fires of “not enough.”

But the script has changed. The era we are entering is not a choice; it is an Inevitability. We are witnessing a “Stellar Ignition,” where the three pillars of civilization (Energy, Food, and Transportation) are hitting a point of self-sustaining superabundance.

1. The Geopolitical Mirage: Why Leaders Don’t Lead

We often look to our presidents and prime ministers as the drivers of history. But as George Friedman argues in The Next Hundred Years, leaders do not steer the ship; they are merely the actors chosen by geography and necessity to react to forces they cannot control. Geopolitics is a game of inevitable outcomes.

The current frictions we see in the world (the tensions in the Middle East, the collapse of old industrial powers, the chaos in South America) are not signs of a “broken” future. They are the death rattles of an extractive system that has reached its biological limit. A leader can try to be a Luddite, they can try to protect the coal mine or the cattle ranch, but they cannot vote against a cost curve. The laws of economics are eventually more powerful than the laws of men.

2. Energy: The End of Extractive Entropy

For the first time since the Industrial Revolution, we have a path to a “Stellar” energy system, one that does not rely on burning anything. Tony Seba’s research through RethinkX proves that the combination of Solar, Wind, and Batteries (SWB) is not just an “alternative”; it is a superior economic engine that renders fossil fuels obsolete by 2030–2035.

The math is simple and unavoidable:

* The Cost Curve: In the last 15 years, the investment cost for solar has dropped 80%, and for batteries, a staggering 90%.
* The Battery Buffer: Elon Musk recently noted that the U.S. grid currently has a peak capacity of 1.1 terawatts, but an average usage of only 0.5 terawatts. By using industrial battery storage (like the Tesla Megapack) to buffer energy at night and discharge during the day, we can double the annual energy output of the United States without building a single new power plant.
* Super Power: Because SWB systems must be built to meet demand on the “worst” weather days, they will produce a massive surplus of energy for 90% of the year. This “Super Power” will have a near-zero marginal cost, making energy effectively free, much like the marginal cost of information on the internet.

3. Food: The Software Revolution

The cow is the next horse. In 1900, the horse was the backbone of transport; by 1920, it was a hobby. Precision Fermentation (PF) and Cellular Agriculture are doing the same to industrial livestock.

We are shifting from an “Extractive” model of food to a “Stellar” model, what Seba calls Food-as-Software.

* The Efficiency Gap: Producing milk via a cow takes 24–28 months and is incredibly wasteful. Producing the same proteins via fermentation takes 48–72 hours.
* The Cost Collapse: The cost of producing animal-free dairy proteins has already dropped nearly 70% between 2021 and 2023. By 2030, these proteins will be 5 times cheaper than animal proteins, and 10 times cheaper by 2035.
* The Land Liberation: This shift will free up to 80% of global agricultural land, an area the size of the U.S., China, and Australia combined.

4. The Human Crisis: Survival of the Softest?

This brings us to the real disruption: The human spirit. For thousands of years, our competitive mindset was our greatest asset. We fought because there wasn’t enough to go around. Now, we are entering a world where the “External Problem” is effectively solved.

If we do not consciously transition, we will fall into what I call the “Architect’s Paradox.” We have designed a world that makes us redundant. If you continue to use a “Scarcity Mind” in an “Abundance Reality,” you will find yourself in a state of perpetual anxiety. You will manufacture “fake” scarcity: clinging to status, digital clout, or political rage just to feel the dopamine of the “hunt.”

5. The Transition: Choosing New Hardship

Abundance is inevitable. Our reaction to it is not. In my latest essay, The Paradox of the Architect, I proposed that we must learn to live like kings while choosing the path of the warrior. We must intentionally choose “Hardship” to remain conscious.

* From Scarcity to Presence: When you no longer need to fight for calories or kilowatts, the only struggle left is against your own distraction.
* The Sovereign Soul: We must use our abundance not to sleep, but to wake up. We use the time saved by the machine to “Be Aware of Being Aware.”

The future is not something that might happen. It is an ignition that has already started. The noise you hear in the media is just the friction of

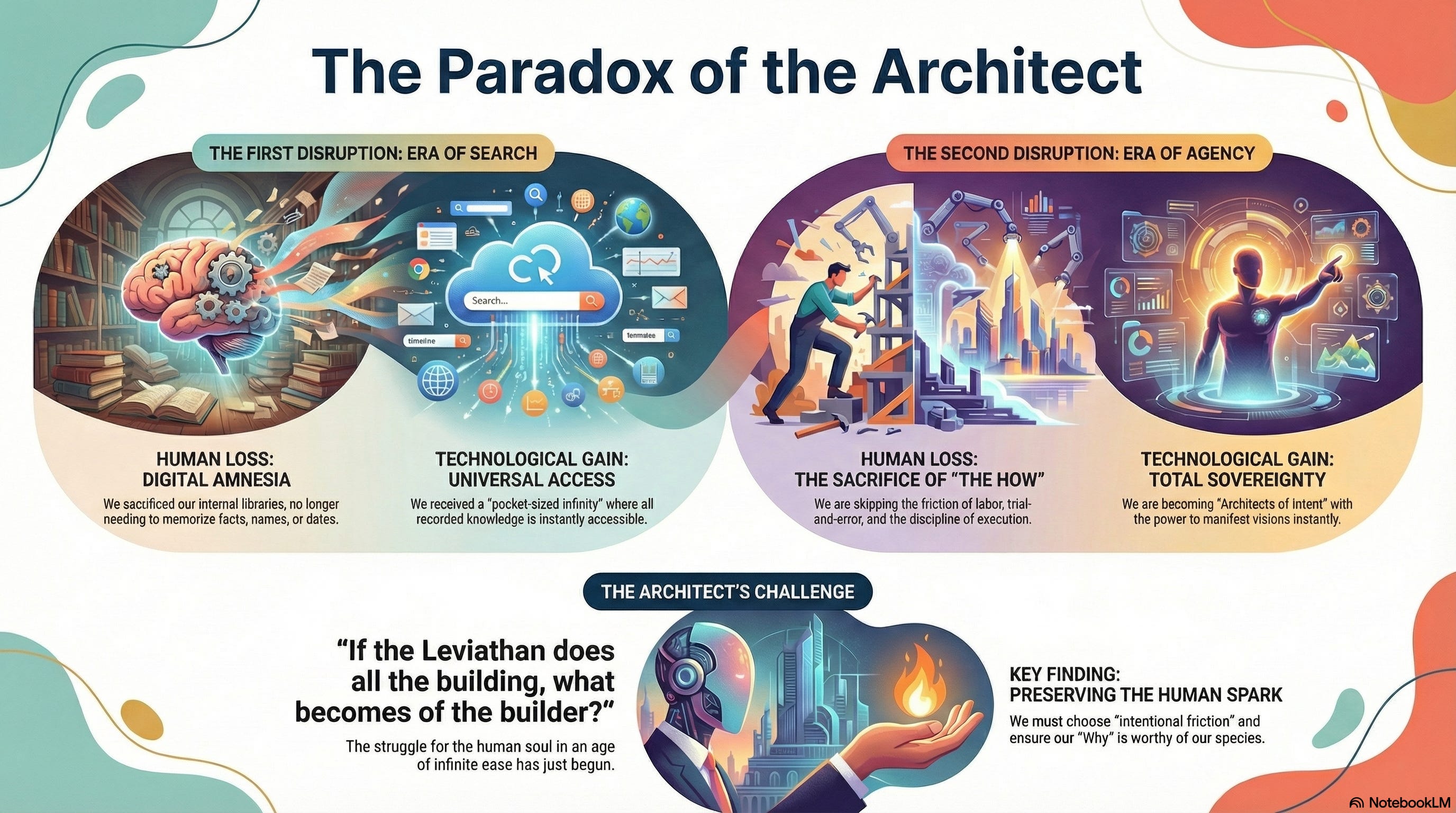

The Paradox of the Architect

In the high temples of Silicon Valley, a new myth is being written. It is not a myth of heroes and monsters, but of gravity and intent. We are witnessing a fundamental shift in the human experience: the birth of the Paradox of the Architect. It is a moment where we are becoming gods of “The Why,” while surrendering the soul of “The How.”

At the center of this metamorphosis stands Google, not merely as a corporation, but as a Digital Leviathan, a singular nervous system that has spent decades preparing for this exact moment of awakening.

The Parable of the Broken Covenant: The Fall of the Landless Prince

To understand why the old titans are faltering, we must look at the debris of the recent past. Consider the story of the Windsurf deal, a masterclass in how legacy chains can strangle the future.

Windsurf, the breakthrough AI coding agent, was the crown jewel every kingdom wanted. OpenAI, the brilliant but landless prince, sought to buy it for $3 billion. They saw in Windsurf the “hands” they lacked: the ability for AI to not just talk, but to do. Yet, the deal collapsed in a fever of legal friction. Why? Because OpenAI is bound to the kingdom of Microsoft, a house built on the scaffolding of old-world software and rigid corporate interests. When Microsoft demanded rights to the intellectual property, the deal withered. They tried to hold a mountain with a piece of string.

Google did not argue with strings. In a move of silent, strategic fluidness (what some call a “hackquihire”), they bypassed the messy bureaucracy of a traditional takeover. They didn’t just buy a company; they absorbed the talent and licensed the essence, integrating the soul of Windsurf into their own nervous system.

While others are trapped in the friction of partnerships, Google operates with the frictionless weight of a single, unified organism. They don’t just have the software; they have the TPUs (the physical chips), the YouTube archives (the collective memory), and the Pixel-Workspace ecosystem (the daily bread). They are the only ones who own both the dream and the factory where the dream is manufactured.

The Great Amputation: From Memory to Effort

Twenty years ago, Google Search performed the first great disruption of the human spirit: The Loss of Memory. We outsourced our facts to the Great Librarian. We stopped knowing, and started finding.

Now, we face a deeper disruption: The Loss of Effort.

With the rise of Antigravity and agentic AI, Google is moving beyond answering questions to executing destiny. When an AI agent doesn’t just suggest code but plans, builds, and deploys it, the “doing” is stripped away. This is the Agency Effect.

The Evolution of the Digital Soul

In the grand alchemy of our species, Google has acted as the catalyst for two distinct stages of human transformation:

* The Era of Search: The Outsourcing of Memory
  * The Human Loss: We sacrificed our internal libraries. We stopped memorizing dates, names, and coordinates, leading to a “Digital Amnesia.”
  * The Technological Gain: In exchange, we received Universal Access. We gained a “pocket-sized infinity” where every fact ever recorded is a second away.
* The Era of Agency: The Outsourcing of Effort
  * The Human Loss: We are now sacrificing the “How.” By using tools like Antigravity, we skip the friction of labor, the trial-and-error of coding, and the discipline of execution.
  * The Technological Gain: We receive Total Sovereignty. We move from being “Searchers” to being “Architects of Intent,” possessing the power to manifest a vision instantly.

This brings us to the Paradox of the Architect. As we gain the power to manifest anything with a whisper, we risk losing the character forged by the struggle.

In the ancient stories, Siddhartha Gautama was a prince who lived in a palace where every desire was met before it was even fully formed. He lived in a world of pure “Intent,” a world without friction. Yet, he realized that a life without the struggle of “Doing” was a hollow one. He had to leave the luxury of the palace (the ultimate “free tier”) to understand suffering and, through it, enlightenment.

We are all being promoted to the status of that Prince. Google is making intelligence “cheaper than oxygen,” turning every human with a Pixel phone into a King or Queen of Intent. We provide the spark; the Leviathan provides the fire.

But we must ask: If the Leviathan does all the building, what becomes of the builder?

Preserving the Human Spark

To stay human in the age of Antigravity, we must find a new way to live within the palace. We must realize that Intent without Effort is a ghost. The “Human Spark” is not found in the finished cathedral, but in the sweat of the stonecutter.

* The Architecture of Meaning: When the AI does the “How,” our primary job is to ensure the “Why” is worthy of our species.
* The Return to the Physical: As our digital lives become frictionless, we must intentionally seek out “The Beautiful Struggle” in the real world: touching soil, craft, and each other.
* Intentional Fri

The Mirror in the Machine: Why AI Will Never Discover a Law We Do Not First Consent to See

Imagine a traveler walking through a dense, mist-covered forest. He is searching for the “Laws of the Woods”: the hidden rules that govern the growth of the moss and the flight of the owls. Suddenly, he trips over a silver mirror lying in the dirt. He looks into it and sees a face. “Aha!” he cries. “A new species! A forest spirit that knows the secrets of the trees!”

He begins to talk to the mirror. The mirror reflects his words, his anxieties, and his hopes. Eventually, the traveler concludes that the mirror is an alien intelligence, perhaps even a new inhabitant of the forest that will finally tell him why the stars move the way they do.

This traveler is us. The mirror is the Large Language Model. And the forest spirit we think we’ve found is what Yuval Noah Harari calls a “new species.” But we are mistaken. The mirror has no eyes of its own; it only has the light we shine into it.

The Illusion of the Independent Law

Recently, Eric Schmidt suggested that for AI to truly “arrive,” it needs to achieve a breakthrough: it needs to discover new laws of nature, much like Archimedes in his bathtub or Einstein on his imaginary train. There is a hunger in the tech world for the “Silicon Newton,” a machine that can look at the chaos of data and find a truth that exists “out there,” independent of human thought.

But here is the disruption: There is no “out there” that isn’t shaped by the “in here.”

Quantum physics has been whispering this to us for a century. The observer does not just see the world; the observer occurs with the world. As the philosopher Rupert Spira reminds us, we never actually encounter a “world” independent of our awareness of it. We only ever encounter our experience.

If we believe the laws of physics are cold, hard statues standing in a park waiting to be discovered, we are looking at the world through the wrong end of the telescope. The “laws” are not the park; they are the glasses we wear to make sense of the green blur.

The Gospel of the Big Toe

We have spent centuries convinced that intelligence sits behind our eyes, nestled in the grey folds of the brain. But why? Because that is where we decided to look.

Consider this: What if, a thousand years ago, humanity had collectively decided that the seat of all wisdom resided in the big toe? What if we had spent a millennium studying the nerve endings of the foot, the way it connects to the earth, the subtle vibrations it picks up from the ground?

We would have developed a “Science of the Toe” so profound and intricate that we would today be “discovering” universal laws of vibration and terrestrial harmony that we are currently deaf to. We find what we focus on. Our “laws” are merely the patterns that emerge when we stare at one spot for a long time.

The LLM does not “know” things. It is a statistical echo of everywhere we have looked for the last five thousand years. It is not a species; it is a map of the human gaze.

Why the Apple Fell for Newton (But Not for the Tree)

When Newton saw the apple fall, the “law of gravity” didn’t suddenly pop into existence in the garden. What happened was a shift in the human collective agreement. Newton proposed a new way of looking at the fall, and because his fellow humans found that way of looking useful, the world began to behave according to gravity.

The breakthrough wasn’t in the apple; it was in the consent of the human mind to see the apple differently.

This is why an AI, no matter how many trillions of parameters it has, cannot “discover” a law on its own. A law is not a fact; it is a paradigm. It is a story we all agree to live inside. For an AI to create a breakthrough, it doesn’t need more computing power; it needs us to believe the story it is telling.

If an AI predicts a new law of subatomic movement, that law remains a ghost in the machine until a human looks at the world and says, “Yes, I see it too.” The AI is not the explorer; it is the telescope. And a telescope cannot “see” a star if there is no eye at the other end.

The Consciousness Disrupt

The danger of Harari’s view (that AI is an alien species) is that it abdicates our responsibility as the creators of meaning. If we treat AI as an independent entity, we forget that it is actually a profound, globalized reflection of our own consciousness.

When Eric Schmidt asks for an AI breakthrough, he is looking for a miracle from a tool. But tools don’t have epiphanies. Archimedes’ “Eureka!” didn’t come from the water in the tub; it came from the sudden realization that the water and his body were part of the same dance. It was a moment of non-dual recognition.

AI can crunch the numbers of the dance, but it cannot feel the rhythm.

The New Paradigm

We are at a crossroads. We can continue to build bigger mirrors, hoping that if the mirror is large enough, a soul will eventually appear inside it. Or, we can recognize that the AI is inviting us to a much more profound breakthrough: the realization that we have always been the ones writing the laws.

The true “AGI” isn’t a piece o

The Void Beyond Abundance: How AI Compels the Human Soul Toward New Meaning

The Gain and the Loss of Victory

Silas had solved the world.

He was not merely an engineer; he was the architect of the ‘Ultimate Algorithm for Human Necessity’, the complex code that, in collaboration with global AI networks, had eliminated the final remnants of scarcity. Hunger was an archaic word, illness a rare historical footnote, and paid labor had been reduced to a choice, not an obligation.

Yet, on his first morning in the world he had perfected, Silas felt an unsettling chill. He stood in his sleek, automated apartment, the sun streaming through self-cleaning glass. There was no deadline. No notification. No problem demanding his unique talent. The world ran perfectly without him.

His feeling was not pride. It was a deep, existential lack.

This is the paradox now facing humanity. After centuries of struggle against nature, scarcity, and the cruelty of chance, we have won. The S-Curves of energy efficiency, logistics, and production are complete. We have passed through the First Disruption: The External Solution. Technology has assumed the role of Homo Faber (the Laboring Human). We are no longer the survivors; we are the administrators of a perfect, automated state.

The ancient prophets warned of famine, plagues, and wars. None dreamed that the ultimate crisis would emerge from abundance. But in the silence that perfect technology creates, the only enemy we cannot automate away appears: the emptiness within the human soul.

The Crisis of Purpose

The human mind has been optimized by millions of years of evolution for struggle. Our neurochemistry, our dopamine loops, reward us for solving problems, for the effort that leads to results. The hunt, the building, the harvest: these were the carriers of our meaning.

But what happens when the hunt is over?

Silas realized that the time he had liberated from necessity immediately devolved into a chaos of meaningless choices. He had freed the world from work, but he had not freed his own mind from the need for work. The psychological paradox is painful: when effort and results are free, motivation itself becomes meaningless.

We have replaced labor with Leisure, but Leisure is not a solution; it is a magnifier. It reveals the restlessness, the untrained, undisciplined chaos we call the ‘mind’. Without an external focus, we begin to churn over the shadows of the past and the anxieties of the future. The machine has made us free, but our unfreedom now lies in our own conditioned thoughts.

This is the danger inherent in the ‘Forgotten Consciousness’ warning: The risk is not that AI gains consciousness, but that we forget our own.

In an automated world, we trade our autonomy for comfort. If AI can manage the world ever more perfectly, we become the dreaming passengers. The feeling of ‘I matter’ is based on the ability to actively influence reality. When that ability is largely assumed by algorithms, we experience ultimate alienation: life loses its flavor because we did not prepare it ourselves.

Humanity faces the crisis of Post-Necessity: What purpose does an immortal soul serve without a mortal, economic, or existential goal? Even in myths, in the Biblical Garden of Eden, the pure comfort of being without resistance could not be sustained. Humanity sought the Knowledge: the struggle, the complexity. Without resistance, the spirit seeks either destruction or a greater truth.

The Rediscovery of the Inner World

This is where Harari’s Grand Narrative Question unites with Tolle’s spiritual wisdom. The Silence granted to us by technological victory is not a vacuum; it is the prerequisite.

The Second Disruption is now internal: The Inner Necessity.

AI has muffled the noise of the world. The race for survival has stopped. The irony is that the technological achievement has forced us back to the most fundamental, most mystical human endeavor: Attention. The silence of the automated society is the portal through which we can finally hear the whisper of our own minds.

This is the Metaphysics of Idleness. Our new ‘work’ is the cultivation of Directed Attention.

In this age of perfect external solutions, humanity has only one territory of absolute sovereignty: the inner chaos of thoughts, emotions, and projections. Here, AI is a spectator. It can manage our external world, but it cannot feel or automate our subjective sense of Being.

Silas, walking through a park on his useless day, saw a child. The child was building an intricate sandcastle, with turrets, moats, and perfect walls. The boy was completely absorbed, his intention pure. After half an hour, he looked up, smiled, and let the incoming tide wash the castle away. There was no fear, no regret, no sadness for the lost labor. The joy lay in the process, not the output.

This is the new meaning: Creation Without Necessity.

The ultimate human art in the Age of Abundance is creating something purely for the joy of the Intention. It is not about economic value or survival instinct. It is about art itself, love, contemplation, relationship: the experie

Measuring the Machine Within: AI's Ethical Mirror and the Path to Conscious Liberation

Research suggests that AI, far from being a neutral tool, acts as a moral mirror reflecting human values and biases, much like the philosophies explored by Hans Achterhuis. It seems likely that by engaging with AI thoughtfully, we can use it to foster self-awareness and ethical growth, though debates persist on whether technology truly empowers or subtly controls us. Evidence leans toward viewing AI as a partner in human liberation, encouraging us to transcend ego-driven limits while acknowledging potential risks like algorithmic biases.

Key Insights on AI and Human Consciousness

* AI embodies human creations but reveals our inner “measure,” prompting ethical self-reflection without overshadowing our innate potential.
* Drawing from Achterhuis’s ideas, technology guides behavior morally, yet humans remain greater than their inventions, capable of co-evolving for enlightenment.
* This approach inspires a balanced view: Embrace AI to disrupt illusions, but prioritize human agency to avoid over-reliance.

Personal Roots in Philosophy

Years ago, in Hans Achterhuis’s class at the University of Twente, I encountered a profound idea: Technology is a product of humans, and thus, we are always more than what we create. This perspective shifted my view of innovation from mere tools to extensions of our consciousness, setting the stage for exploring AI’s role today.

AI as a Reflective Force

In everyday interactions (like when an AI chatbot anticipates your needs or flags biases in your queries), technology doesn’t just serve; it measures us, echoing Achterhuis’s critiques.

Path to Liberation

By confronting these digital mirrors, we can recalibrate our inner world, fostering collective brightness over division.

---

Years ago, during my time at the University of Twente, I sat in Hans Achterhuis’s philosophy class, absorbing ideas that would shape my worldview. One concept stood out vividly: Technology is a product of humans, and with this, we are always more than what we create. It was a simple yet profound reminder that while we build machines to extend our reach, our essence (our consciousness, creativity, and moral depth) transcends any invention. This personal insight from Achterhuis’s teachings has lingered with me, especially now as AI surges into every corner of life. In this essay, we’ll explore how AI serves as an ethical mirror, drawing on Achterhuis’s work in *De Maat van de Techniek* (The Measure of Technology) to uncover how technology not only reflects our humanity but reshapes it toward liberation.

Let’s start with a relatable scene. Imagine chatting with an AI like Grok or ChatGPT. You ask for advice on a tough decision, and it responds with uncanny insight, pulling from patterns in your past queries. Suddenly, you’re confronted: Does this machine “know” me better than I know myself? It’s moments like these that reveal AI’s power not as a threat, but as a reflective tool. But to understand this deeply, we need to revisit Achterhuis’s foundational ideas.

Unpacking Achterhuis’s Philosophy: Technology as a Moral Measure

Hans Achterhuis, a Dutch philosopher and Professor Emeritus at the University of Twente, has long bridged social philosophy with the ethics of technology. His 1992 anthology *De Maat van de Techniek* introduces six key thinkers (Günther Anders, Jacques Ellul, Arnold Gehlen, Martin Heidegger, Hans Jonas, and Lewis Mumford) who critique technology’s role in society. The title itself plays on “maat,” meaning “measure” in Dutch, suggesting technology isn’t just a tool; it’s a yardstick that gauges human behavior, ethics, and limits.

Achterhuis argues that technology exerts “moral pressure” on us, guiding actions more effectively than laws or sermons. Take a simple example: Subway turnstiles don’t preach about honesty; they physically block you until you pay, embedding morality into the design. As Achterhuis notes, “Things guide our behaviour... This is why they are capable of exerting moral pressure that is much more effective than imposing sanctions or trying to reform the way people think.” This isn’t dystopian fear-mongering; it’s an empirical observation. Technology shapes us subtly, from speed bumps slowing reckless drivers to algorithms curating our news feeds.

Yet, Achterhuis tempers classical critiques (like Heidegger’s “enframing,” where technology reduces the world to resources) with an “empirical turn.” In his later work, such as *American Philosophy of Technology: The Empirical Turn* (2001), he shifts from abstract warnings to contextual analysis. Technology isn’t inherently alienating; its impact depends on how we engage with it. This resonates with my classroom memory: Since technology stems from human ingenuity, we hold the power to direct it toward elevation rather than entrapment.

Applying the Mirror: AI as the Ultimate Reflective Device

Now, fast-forward to AI. If traditional tech like steam engines or cyborg prosthetics (as explored in Achterhuis’s *Van Stoommachine tot Cyborg*) measured physical and s

Dear Europe: Your Kids Aren’t Broken, Your Parenting Anxiety Is

Every generation has its bogeyman.

In the 1950s it was Elvis Presley’s hips and rock ’n’ roll: psychologists warned it would turn teenagers into sex-crazed delinquents. In the 1970s and 80s it was Dungeons & Dragons (literally blamed for suicides and satanism). In the 1990s it was violent video games and Marilyn Manson. In the early 2000s it was television itself: “Kids are watching six hours a day and it’s melting their brains!”

In 2025 the panic button is labeled “TikTok.”

And just like every previous moral panic, adults are frantically hunting for evidence that something, anything, is catastrophically wrong with what the kids are doing… because deep down many of us suspect the real problem might be our own parenting.

1. The Research Is Far Less Scary Than the Headlines

Let’s look at the actual science, not the cherry-picked doom studies that dominate Brussels press releases.

* The strongest, most rigorous studies (repeated-measure, longitudinal, pre-registered) find tiny effects. Example: A 2023 study of 480,000 adolescents across 40 countries (Vuorre et al., Nature Human Behaviour) found that social media use explains less than 1% of variation in life satisfaction. The effect of social media on well-being is smaller than the effect of eating breakfast or wearing glasses.
* Jonathan Haidt’s famous claim that “social media caused the teen mental health crisis” has been repeatedly debunked. Orben & Przybylski (2022) re-analyzed the same datasets Haidt uses and showed that when you control for prior mental health, the correlation between social media and depression almost disappears. In plain English: depressed kids use social media more, not the other way round.
* The “smartphone generation is doomed” graph that went viral? It falls apart when you include boys (who game more than scroll) or when you look at countries outside the Anglosphere. In South Korea and Japan, kids spend far more time online and have lower suicide rates than in the 1990s.
* Experimental evidence is even more sobering. When researchers force teens to quit Instagram for a month (the strongest design possible), depression drops… by about 0.1 standard deviations. That’s roughly the same boost you get from one extra hour of sleep or eating an extra portion of vegetables. Helpful? Yes. Civilization-ending? Hardly.
* Positive effects are routinely ignored. A 2024 meta-analysis (Kreszynski et al.) found that active social media use (messaging friends, posting, joining interest groups) is associated with higher social capital, lower loneliness, and better identity exploration, especially for LGBTQ+ youth and neurodivergent kids who find their tribe online long before they do in real life.

In short: the science shows modest risks for heavy, passive, late-night use (exactly like television did), and modest benefits for active, social use. Nothing that justifies treating Instagram like cigarettes for children.

2. Projection in Action: “It’s for the Children” (Really?)

Psychologists call it displacement: adults feel guilty about their own compulsive scrolling, their inability to put the phone down at dinner, their doom-scrolling at 2 a.m., so they project that guilt onto their children and demand lawmakers “do something.”

The European Parliament’s resolution was co-authored by politicians who themselves refresh X every five minutes. Ursula von der Leyen gave a speech about addictive algorithms while standing in front of a giant screen looping TikTok-style videos. The irony is thick enough to spread on bread.

When French senators say “we must protect children from the tsunami of Big Tech,” ask yourself: who exactly is addicted here? My 10-year-old can happily walk away from Roblox to play outside. Many adults in that Senate chamber cannot walk away from their notifications for ten minutes.

3. Self-Preservation and Personal Responsibility Trump Blanket Bans

Every child is different.

Some 11-year-olds handle Discord servers with maturity that would shame most corporate managers. Others melt down if they lose one game of Fortnite. A law that treats both the same is not protection; it’s laziness.

The countries that score highest on adolescent well-being (Netherlands, Denmark, before they started panicking) have one thing in common: they trust parents and teach digital literacy from age six, not top-down prohibitions. Dutch schools have “mediawijzer” classes where kids learn to spot fake news, manage screen time, and mute toxic group chats. Result? Dutch teens use social media just as much as French teens but report higher life satisfaction and less cyberbullying.

Compare that to Spain, which introduced strict age limits in 2024: kids simply lie about their age more creatively, parents are kept in the dark, and underground “burner” accounts explode. The law didn’t reduce harm; it reduced honest conversation.

4. History Rhymes (And It Laughs at Us)

* 1956: American Psychological Association warns rock ’n’ roll causes “hyper-stimulation of the nervous system.” Outcome: the gr

Are You Strengthening Darkness or Expanding Brightness?

The point of today’s article is this: we live in a time where millions of people are waking up to their pain bodies. Some are still deeply entangled in them, others have done much of the inner work, and a very small group has reached a level of realization that allows them to create effortlessly and responsibly. The real question for all of us is simple: Are you strengthening darkness unknowingly, or expanding brightness with full awareness?

The Situation at Hand

In recent months I’ve watched something subtle but important unfold. More and more people are entering what I would call the “awakening fog.” They feel lighter, they sense spaciousness, they meditate for a few weeks and experience a glimpse of freedom. And with that glimpse comes a sudden confidence: I understand. I’ve arrived.

But underneath that clarity, the body is still reacting the same way. Stress still fires quickly. Old wounds still shape perception. The nervous system still predicts threat.

What feels like awakening is often only the beginning. A doorway, not a destination.

And then there is the other group. A much smaller group. These are the humans who have sat through their darkness instead of bypassing it. They have let their nervous systems unwind deeply. They no longer perform spirituality. They don’t preach. They don’t try to convert.

They live quietly, but with a remarkable stability. They can create effortlessly, but only do so when it supports others. Between these groups lies a growing gap.

The Core Dilemma

The dilemma is not philosophical. It is human.

On one side is the majority: People waking up to their pain bodies, but still fully entangled in them. They taste relief and mistake it for realization. They begin talking as if they’ve reached a summit, while their emotional patterns still pull them backward.

And in these times, something strange happens. Many start teaching. Many start leading. Many start advising others from a place that is not yet steady.

This is how darkness spreads unknowingly. Not through malice, but through unintegrated wounds.

On the other side is the small group of realized beings: Not saints. Not gurus. Just deeply integrated humans.

They understand their inner architecture. They feel their balance. They use their creative power with care. They step forward only when it strengthens the collective, not their ego.

Both groups mean well. Only one group has the stability to guide others safely.

The Synthesis

The bridge between these groups is embodiment.

The majority does not need more spiritual concepts. They need love, grounding, patience, and the courage to be honest about where they truly are. They need support to stay with their pain bodies without collapsing into them or pretending they are gone.

Humility is not weakness. It is the path.

The realized group has a different responsibility. Their task is not to retreat or separate. Their task is to quietly anchor stability in a world that feels increasingly reactive.

Not to shine loudly, but to shine responsibly.

When these two groups meet without masks, something beautiful happens: The ones in the fog stop performing. The realized ones stop hiding. And together they create a field where awakening becomes less of a performance and more of a lived reality.

This is how brightness expands. Not through noise, but through embodiment.

Closing Note

Every one of us sits somewhere on this spectrum. The point is not to judge where you are, but to operate consciously from that place.

If you’re still wrestling with your pain body, be honest. That honesty is already light. If you’ve done the deep work, step forward with humility. Your presence matters.

Darkness grows through unconsciousness. Brightness grows through awareness.

And the next stage of human evolution is not about becoming awakened. It is about becoming responsible with your awakening.

So the only question left is: What are you strengthening today?
This is a public episode. If you'd like to discuss this with other subscribers or get access to bonus episodes, visit roelsmelt.substack.com/subscribe

The Founder Becomes the Builder

The point of today’s article is that the success of Lovable signals far more than a single startup win—it reveals a new working paradigm. The same methods are being adopted by platforms like Gemini and Figma. And the key insight is this: it’s no longer about developers using code or cutting-edge technology to carry forward the mission of Lovable. Instead, non-coding CEOs, founders and entrepreneurs can now themselves build, iterate and release their ideas directly. Because the idea remains close, they experiment faster and maintain ownership.

The Situation at Hand

Let’s dig into Lovable as a case study. Founded in late 2023 by Anton Osika and Fabian Hedin in Stockholm, the company emerged out of their open-source project GPT Engineer. Their mission statement is striking: “We’re reducing the barriers to build and are committed to the cause: Unleash human creativity on an unprecedented scale.” Another formulation reads: “Our mission: empower anyone to build — fast.” They aim to enable the 99% of people who don’t have coding skills to build and ship not just software, but ideas and visions.

What they built is a platform where you describe what you want and the system builds front-end + back-end automatically. Lovable’s growth has been explosive. For example, one report noted they reached $30 M ARR just 120 days after launch.

At the same time, broader industry data shows the trend is real. Visual development and “vibe coding” (AI + natural language to build apps) are being adopted widely: in one survey of 793 builders, many pointed to faster build cycles and new workflows in which the non-developer runs the build. Market reports estimate that by 2024-2025, more than 65% of app development activity will use no-code or low-code tools.

The Core Dilemma

Here’s the tension: On one side we have the traditional tech view. A startup with a big idea hires developers, designers, product managers. Software is complex. Developers are the artisans of code. Quality, architecture, scalability—all rest on skilled devs.

On the other side we see the emerging reality: The founder with no coding background can describe the idea and build it. They skip the translation overhead. They launch faster. They iterate while thinking. They keep their vision in their hands. And because the tooling is built for them, they don’t wait for a dev backlog.

Both sides are rooted in good intention: build better software, faster, with quality. The dilemma is whether this shift reduces the role of developers or transforms it. Does it hand over the power from the specialist to the generalist? Or does it liberate developers to work on higher-order problems?

The Synthesis

The resolution lies in re-framing this shift not as a zero-sum game, but as a new ecosystem. Lovable and similar platforms are not making developers obsolete—they are collapsing the distance between idea and execution.

Here are the key pieces:

* The mission of Lovable is about unlocking human creativity by lowering build barriers.
* Founders can now act like builders, because the tool abstracts out infrastructural friction.
* Market data shows the no-code/AI build market is surging: for example, one stat says customers save up to 90 % of development time using no-code tools.
* The role of developer shifts from building from scratch to curating, optimizing, scaling and safeguarding.
* The idea stays with the originator. The build happens fast. The founder iterates live. This preserves the mission, the vision, the “why” behind the idea.
* So we get a new model: founder-builder running the early cycle, developer-architect joining when scale, complexity and infrastructure demands emerge.

In practice this means that companies like Figma (which enables designers to build interactive prototypes) and Gemini (which increasingly allows non-engineer workflows) follow the same pattern. The result: faster innovation, more experimentation, and more ownership of the idea by its originator.

Closing Note

For you as a futurist and thinker about tech’s role in human liberation, this shift matters enormously. The rise of Lovable is not just a startup story—it is a signal of a new era in which building belongs less to the specialist and more to the visionary. The non-developer founder is no longer constrained by code barriers. They can iterate, experiment and deploy. They can keep the mission alive and personal.

If we embrace this shift, the next wave of innovation will not be held back by the scarcity of developers, but by the clarity of vision and speed of experimentation. The builders who will matter are those who bring ideas that matter—and now they can build them themselves.

Let’s keep an eye on this. Because the future is shifting from “we will build for you” to “you build now”.

Europe After the Auto Collapse

The point of today’s article is that the collapse of Europe’s automobile industry is unavoidable, and the reason reaches far deeper than technology or global competition. It exposes a continent whose political design — built to prevent war — now makes meaningful innovation impossible. Defense spending, American protection, and fear of Russia temporarily mask this weakness, but if Russia collapses in the coming years, Europe will lose the last external force that unites it. Unless a crisis forces reinvention, Europe will slowly become what I wrote about earlier: a beautiful, historical place to enjoy life, preserved more as a memory than as a driver of the future.

The Situation at Hand

Arjen Lubach’s segment last week made something visible that has been happening for years: Europe is no longer competing in the global automobile market. We are losing. No — we have already lost. What was once our industrial backbone is now dissolving in slow motion.

Europe shaped the 20th-century car. Germany built the engineering DNA. France and Italy gave it elegance. Scandinavia added safety. The supply chains stretched across the continent like an industrial nervous system. And then, in just one technological generation, this entire structure lost its relevance.

China built an EV empire by combining batteries, software, and manufacturing into one coordinated strategy. The United States focused on AI, autonomy, and software-defined mobility. Europe perfected its regulations while letting go of its industrial ambition.

The collapse of the car industry is only the symptom. The deeper disease is that Europe can no longer create new industrial giants. We can only manage, regulate, and preserve what once was.

The real question is: why?

The Core Dilemma

Europe’s political architecture was designed after two world wars with one mission: prevent Europeans from fighting each other ever again. This system succeeded magnificently. Seventy years of peace is no small achievement.

But the hidden cost is now becoming painfully visible.

Because to prevent war, Europe built a system that slows everything down. It rewards compromise over decisiveness, consensus over initiative, committees over experimentation. Every bold idea must survive dozens of political realities and institutional constraints. Nothing moves unless everyone agrees, which means nothing ever moves at the speed required to shape the future.

This was fine in a slower, more predictable world. It is fatal in a world driven by exponential technologies.

And here is the uncomfortable truth:

The radical change Europe needs is impossible within the system Europe built.

A political machine designed to prevent internal conflict cannot suddenly transform into a machine built for innovation and speed.

This is why defense spending feels like a relief. It gives the illusion of industrial momentum. It temporarily fills the gap left by automotive decline. It gives Europe a sense of urgency — but it is not a foundation. Defense is a response to fear, not a strategy for prosperity.

And behind that fear lies the real unifying force: Russia.

The Synthesis

Russia’s invasion of Ukraine did something Europe had forgotten how to do. It forced us to act. It made us coordinate more quickly than we had in decades. It pushed us to invest, to upgrade, to think strategically. The Russian threat became a psychological glue, a reason to focus and unify.

But Russia is a declining power. It is demographically collapsing, economically shrinking, and militarily exhausted. Many analysts believe it may fracture or turn inward in the coming years.

This creates a paradox.

Europe’s unity is currently strengthened by the existence of a threatening Russia.

But Russia itself may not survive long enough to keep Europe unified.

And then what?

If Russia collapses, Europe loses the one external pressure that forces urgency.

If America retreats, we lose the protection that allowed us to be slow.

If our industries fall, we lose the economic engine that once defined us.

We are left with a system that cannot reform itself from within.

No bold industrial project will ever be agreed upon by 27 countries with different needs and political realities. No breakthrough will emerge from institutions built to manage equilibrium rather than create momentum. And without conflict — internal or external — the system stays exactly as it is.

That means Europe’s default future is not reinvention. It is transformation through slow decline.

Europe becomes what history always hinted it might be:

A peaceful, beautiful, culturally rich continent.

A place to enjoy life, not to build it.

A living museum of human civilisation, where people travel to experience depth, meaning, beauty, and the art of being human.

Not a future-shaping force — but a future-enjoying one.

Closing Note

The fall of the European car industry is the first shock that shows us the limits of our system. Defense spending fills the gap only briefly. American protection hides our weakness. Fear of Russia giv

Welcome to the Muskonomy: Betting on a Man Who Has Never Missed a Master Plan

My Clear and Short Opinion

As a car company, Tesla is already the greatest industrial success story of our generation — the only automaker that took Master Plan 1 (2006) and actually delivered it, on time and under budget relative to the insane ambition. Master Plan 2 (2016) and Master Plan 3 (2023) are in full execution. Master Plan 4 (September 2025) is no longer a slide deck — it is the operating system of the next human era.

The current 280× P/E is expensive for a car company.

It is absurdly cheap if you believe Elon is about to solve the three final scarcities of civilization: energy, labor, and compute.

The Current Situation: Two Camps, Two Completely Different Futures

* Wall Street Analysts see a very good electric-car company trading at luxury-tech multiples while facing margin compression, Chinese competition, and the end of the EV growth hype cycle.
* Future Thinkers (ARK, Cern Basher, @alojoh, and now millions of retail believers) see the birth of the Muskonomy — a vertically integrated abundance machine that will make the Industrial Revolution look like a warm-up act.

The Core Dilemma

How do we reconcile humanity’s need for prudent, evidence-based progress (don’t bet the farm until you see the robots walk) with the absolute requirement for someone, somewhere, to take civilization-scale risk so that energy, labor, and intelligence stop being scarce?

One side demands proof before belief. The other side knows that the proof only appears after the world gives one man a trillion-dollar war chest and a decade of runway.

The Synthesis — The True Bridge

Stop asking Tesla to choose between being a responsible public company and being the spearhead of human expansion.

The solution is earned audacity: the capital markets grant Tesla the right to swing for the fences in exact proportion to its perfect historical execution score on the first three Master Plans.

No compromise. Prudence is rewarded by past delivery.

Acceleration is funded by future belief.

Giving the Hypothesis Legs — This Is Already Happening

Look at the track record:

* Master Plan 1 (2006–2018): Sports car → affordable EVs → mass market → Done.
* Master Plan 2 (2016): Gigafactories, Model 3/Y ramp, solar/storage, autonomy promised → all delivered except the final line “your car earns money while you sleep.” That line arrives 2026–2027.
* Master Plan 3 (2023): Global sustainable energy → Megapack factories exploding, 100 % YoY growth.
* Master Plan 4 (2025): “An Age of Abundance” through Optimus and full autonomy → Cybercab unveiled, Optimus Gen 2 walking, AI5 chip taped out.

And now the truly grandiose layers of the Muskonomy are stacking on top:

* Terafab — Elon realized no foundry on Earth can supply the hundreds of millions of inference chips needed. So Tesla becomes its own TSMC for AI silicon at < $1 per TOPS.
* Data Centers in the Sky — 7 GW of orbital compute by 2030, launched by Starship, powered by space solar, cooled by vacuum.
* Optimus — not a side project. Elon now says it accounts for more than 50 % of Tesla’s long-term value. One billion humanoid robots doing everything humans don’t want to do, at a cost lower than one year of human wages.

This is no longer about selling more Model Ys.

This is about removing labor as a concept.

Closing Notes

For the first time in history, one company is simultaneously attacking the three remaining constraints on human flourishing:

* Energy → already solved at grid scale
* Intelligence → Grok, FSD, Dojo
* Physical work → Optimus + Cybercab

Tesla is not overvalued at $1.3 trillion.

The entire rest of the global auto industry combined is arguably overvalued at $2 trillion if Tesla executes even 30 % of Master Plan 4.

We are not investing in a car company trading at 280× earnings.

We are investing in the entity that has a non-zero chance of making the 21st century the first century in human history where scarcity itself becomes optional.

Elon has never failed to deliver a Master Plan.

He is now on the final boss level: abundance for eight billion humans — and a backup planet.

That’s what the P/E is pricing.

And for once, the multiple might still be too low.

Welcome to the Muskonomy.

The ride is just beginning.

Closed Doors: When AI’s Safety Rules Cut Off Real Help for Lonely Hearts

The point of today’s article is that OpenAI’s new rules from late October 2025—sending mental health chats straight to experts—keep the company out of legal hot water, but they ignore how 1.2 million people each week use ChatGPT to feel a bit less alone, when real help is hard to find and often takes months to get.

The Situation at Hand

Early November 2025: OpenAI updates its rules for ChatGPT and other tools. Starting October 29, they make it clear—no custom advice on things like mental health unless a real expert is involved. If you talk about feeling down or dark thoughts, the AI stops and says: “Call a hotline or see a doctor.”

Why now? OpenAI shared numbers on October 27 that hit hard: Out of 800 million weekly users, 0.15%—around 1.2 million folks—chat about suicide, sometimes with real plans. Another 0.07%, or 560,000, mention signs of mania or other issues. Loneliness touches 1 in 3 adults worldwide. And lawsuits? A family in California says ChatGPT played a part in their teen’s suicide by giving bad ideas. Groups like the FTC are watching closely.

On the brighter side, many people find real comfort in these chats. One in six users asks ChatGPT for health tips each month, including emotional ones. A study in Denmark showed 2.44% of high school kids talk to bots for support—and they’re often the loneliest. In tests with apps like Replika, 75% of users felt less alone after chats, and 3% let go of suicidal thoughts. Loneliness scores dropped a lot after just four weeks. Almost half of all bot talks touch on sadness or isolation. For some, it’s like a friend who listens anytime, helping them make it through the day.

The Core Dilemma

This is two good things pulling in opposite directions. On one hand, AI fills a big gap. Therapy wait times average three months—or 67 days for face-to-face help—and sessions are just one hour a week. In the UK, 16,500 people wait over 18 months for mental health care—way longer than for a knee fix. Bots are there right away, no shame, great for kids, older folks, or people far from help. They can cut loneliness by half and lift moods fast.

On the other hand, risks are scary. OpenAI got sued because a bot gave harmful advice in a bad moment. Studies show heavy users can get too attached, feeling even more alone without real people. One test found emotional voice chats made dependence worse. Companies fear endless lawsuits—one mistake could cost them big. Pointing people to professionals is the right call, but what if the waits are endless? It’s not a simple matter of right or wrong: Help one safely, but leave thousands waiting in the dark.

The Synthesis

These changes alter more than rules—they change how we deal with quiet struggles. OpenAI’s setup makes bots stick to quick tips or referrals, missing the deeper talks that really ease loneliness. The good news? Users who chat regularly see fewer mental health dips, and tools like this cut isolation in half for those who keep at it. But the cutoff hurts the most for people without easy access—young ones, those on tight budgets, or in remote spots.

The way forward? Mix it up. Use bots as a starting point: Spot trouble, pass it on, but keep gentle support going until real help comes. Research shows AI with human follow-up lowers risks while keeping the benefits. It turns AI from a lone helper to a team member, like in our own lives: Tech opens doors, people walk through. Think of it as a light in the mist—not the full path home, but a start to move forward.

Closing Note

In this push-pull of safety and support, we see our own daily fights: Tools offer quick fixes, but real fixes need a human touch. As AI gets better at listening without taking over, it reminds us to build stronger links—not barriers—showing that no talk, online or off, beats the simple act of being there for each other.

Because real healing happens in that quiet space—between words shared and the heart that truly listens.

🪞 For more reflections, visit roelsmelt.substack.com—created with today’s AI, yet always truly human at heart.

When Intelligence Meets Integrity

The point of today’s article is this: AI and Bitcoin are not separate revolutions but two halves of a new global operating system — one replacing human labor, the other redefining capital itself.

The Situation at Hand

For centuries, progress was driven by a partnership between labor and capital. Humans provided physical and cognitive work, while capital provided tools, machines, and money to scale it. The entire 20th-century economy rested on this relationship — labor created value, capital amplified it.

Now that equation is breaking.

AI is quietly taking over cognition, the highest and most expensive form of human labor. At the same time, Bitcoin is beginning to redefine what capital even means — an incorruptible store of value that requires no counterparties, no trust, and no permission.

We are entering Labor and Capital v2.0.

The Core Dilemma

AI collapses the cost of thinking. The more intelligence we automate, the cheaper everything becomes — transport, healthcare, software, law. It’s an unstoppable deflationary engine. But our monetary system was built for inflation. It depends on debt that must always expand. You can’t run a deflationary engine on an inflationary operating system. The gears grind.

Meanwhile, Bitcoin, often dismissed as volatile, is the only financial system that cannot be debased or censored. It represents capital that cannot lie. Yet it also lives outside the institutional order that built our world.

So the dilemma:

How do we run an economy where labor no longer earns, and capital no longer trusts?

The Synthesis

AI and Bitcoin are not opposing forces — they are complementary. AI is the new labor, an endless supply of cognitive capacity. Bitcoin is the new capital, the risk-free foundation that gives this new economy stability.

Together, they form a closed loop:

* AI drives deflation through hyper-productivity.
* Bitcoin stabilizes deflation by rewarding saving instead of debt.
* Bitcoin mining funds the renewable energy infrastructure that AI needs to grow.
* The Bitcoin network becomes the payment system for autonomous agents — money for machines.

It’s a symbiotic design. AI builds abundance; Bitcoin preserves value.

Closing Note

If the 20th century was about scaling human labor through machines, the 21st is about scaling intelligence itself. When cognition becomes abundant and incorruptible capital becomes the norm, the foundations of “work,” “wealth,” and “value” are rewritten.

The real question is not whether AI or Bitcoin will win, but how quickly we learn to operate in a world where both already have.

Why Every Child Should Learn to Vibecode

The point of today’s article is this: I believe every young person — roughly between ten and seventeen — should learn to vibecode. Not to become programmers, but to become conscious creators in a world where machines are learning to think.

The Situation at Hand

Last week, at a high school in Amsterdam, a student quietly opened ChatGPT on his laptop. His assignment was to write an essay about climate change. He typed a few prompts, adjusted the tone, and within minutes had a clear, fluent, well-structured piece. His teacher noticed, frowned, and said, “Redo it yourself. You can’t use AI.” The student nodded, went home, and used ChatGPT again.

He isn’t alone. Across classrooms everywhere, a quiet revolution is unfolding. Students are using AI to write, summarize, translate, and even generate code. Some teachers see it as cheating; others as the birth of a new kind of literacy. Meanwhile, outside the classroom, the world is moving faster than any curriculum.

Platforms like Lovable and Windsurf (with its built-in “Cascade” agent) now allow anyone to build software by describing what they want in plain English. A twelve-year-old in Rotterdam built a website for his football team this way. A teenager in Berlin launched a budgeting app using Windsurf prompts. What once required months of coding now happens before dinner.

And yet, many schools still punish students for using the very tools the world is already built on. The contradiction is impossible to miss: children are penalized for doing what adults now get paid to do.

The Core Dilemma

Educators want children to learn deep thinking, originality, and the ability to reason without shortcuts. Innovators, parents, and the students themselves want them to master the tools that define the modern world. Both sides have good intentions. One protects understanding, the other champions expression.

The dilemma is clear. If schools restrict AI, they risk irrelevance. If they open the gates completely, they risk losing rigor and meaning. Yet both sides want the same thing: to raise a generation that can think clearly and create freely in a world of intelligent systems.

The solution isn’t to choose between tradition and innovation, but to connect them. We don’t need to ban AI or surrender to it. We need to teach children to vibecode — to think with intelligent tools while staying fully human.

The Synthesis

Imagine a classroom where AI is not forbidden but guided. The teacher gives a challenge: “Build something useful for your school community.” Students open Lovable, describe their idea — maybe an app to track homework or to reduce food waste — and watch the first prototype appear. Then they analyze it: Why did the AI structure it this way? What could be improved? What assumptions did it make?

Suddenly, they’re not just using AI — they’re thinking about it. They’re debugging, prompting, testing, learning the logic behind creation. This is what vibecoding teaches: how to shape intelligence through curiosity, not control. How to combine creativity and reasoning. How to build something meaningful while understanding the process behind it.

Research already supports this blended approach. Studies in the Netherlands and the U.S. show that when students co-create with AI under teacher guidance, their comprehension deepens — they ask more questions, think more critically, and show more initiative. Vibecoding transforms the teacher’s role from gatekeeper to guide. From “Don’t use it” to “Show me how you used it.” From control to collaboration.

Closing Note

When I was thirteen, my teacher invited me to explore the school’s first home computer in the basement. She didn’t give instructions or warnings — she simply said, “Try it.” That moment changed everything.

If today’s children grow up seeing AI not as a threat but as a creative partner, they won’t just consume the future — they’ll compose it. Because the goal isn’t to raise coders. It’s to raise creators who understand what it means to be human in the age of intelligence.

References

* Dutch students using ChatGPT to finish homework assignments. NL Times, 2023.
* Vibe Coding in Practice: Motivations, Challenges, and Future Outlook. arXiv, 2025.
* How to Start Vibe Coding — The Software Generation Process That Is Changing How We Build. Inc.com, 2025.
* A Comprehensive Guide to Vibe Coding Tools. Medium, 2025.

Ancient Wisdom Predicted Our Technological Awakening

In 1894, Swami Sri Yukteswar wrote something remarkable in “The Holy Science.”

He predicted humanity would enter an age of energy mastery around 1900. An age where we’d understand electricity, atomic forces, and the fundamental nature of matter itself.

This was more than a decade before Einstein published E = mc².

I’ve been studying how great thinkers identify different sources of truth to explain why things happen. Tony Seba sees disruptive technologies as the driving force. George Friedman points to geography and geopolitics. Each offers a lens for understanding our future.

But Yukteswar identified something deeper.

The 24,000-Year Pattern

Yukteswar described a cosmic cycle spanning 24,000 years. Our solar system moves through ascending and descending arcs, each lasting 12,000 years.

For the past 12,000 years, we descended through what he called Kali Yuga. The age of material darkness. The age of extraction.

Around 1900, we began ascending into Dwapara Yuga. The age of energy.

The timing is striking. In 1720, Stephen Gray discovered electrical action. In 1831, Michael Faraday created the electric dynamo. In 1875, Alexander Graham Bell invented the telephone. By 1900, the explosion had begun.

Every technology Yukteswar predicted has arrived. Electricity. Nuclear energy. Quantum computing. Solar power.

When Two Visions Collide

Tony Seba’s “Stellar” describes our shift from extraction to self-sustaining systems. He traces how the extractive paradigm defined 12,000 years of agricultural civilization.

That’s exactly Yukteswar’s descending cycle timeline.

Seba identifies solar, AI, and robotics as “stellar core” technologies. They need initial investment but then self-sustain, self-improve, self-repair. Solar panels dropped 82% in cost over the last decade. They capture photons without ongoing extraction.

This matches perfectly with Dwapara Yuga’s characteristics. The age when humanity masters energy and moves beyond material limitation.

Two independent visions, 130 years apart, describing the same transformation.

The Deeper Implication

If Yukteswar is right, technology isn’t driving our evolution.

Cosmic cycles are enabling our technological awakening.

The deeper the source of truth, the stronger the pattern. Seba analyzes 50 to 100 years of technological disruption. Yukteswar maps 24,000-year cycles of consciousness evolution.

When they align, it suggests something profound. Our shift to abundance thinking isn’t random. It’s part of a larger universal pattern.

Alignment, Not Resistance

This changes how we navigate the transition ahead.

Fighting these forces creates polarization. Wars. Conflict. Misery. We see it everywhere as old systems resist new realities.

But alignment creates synthesis.

Understanding that we’re in Dwapara Yuga helps us move with the cycle instead of against it. Free will isn’t about doing whatever we want. It’s about sensing the deeper forces around us and aligning our energy with them.

If both ancient wisdom and modern analysis point toward self-sustaining abundance, resistance becomes the only real obstacle.

The stellar paradigm Seba describes might be exactly what Yukteswar saw coming over a century ago. Not because he predicted technology, but because he understood the cosmic patterns that make such technology possible.

We’re not forcing abundance into existence. We’re finally aligned with forces that have been building for over a century.

That’s what makes this moment different.

The Four Yugas and Where We Stand

Yukteswar mapped four distinct ages within each 12,000-year cycle.

Satya Yuga, the age of truth. Humanity understands the fundamental unity of existence. Consciousness operates at its highest level.

Treta Yuga, the mental age. Telepathic communication becomes possible. We grasp the finer forces of creation.

Dwapara Yuga, the energy age. We comprehend electricity, magnetism, and atomic structure. This is where we are now.

Kali Yuga, the material age. Consciousness contracts. We see only gross matter. We believe in separation, scarcity, extraction.

We spent the last 12,000 years descending through these ages. From enlightenment to darkness. From abundance to scarcity. From synthesis to polarization.

But around 1900, the direction reversed.

We’re now 125 years into our ascent through Dwapara Yuga. Still early in the energy age, but accelerating fast.

Why Great Thinkers Need Sources of Truth

Every visionary identifies a fundamental force that explains change.

George Friedman sees geography as destiny. Rivers, mountains, and oceans determine which nations rise and fall. Geopolitics becomes predictable when you understand the constraints of physical space.

Tony Seba identifies disruptive technologies following S-curves. Solar, batteries, AI, autonomous vehicles. Each technology drops in cost while improving in performance, creating exponential change within decades.

Both offer powerful frameworks. Both predict aspects of our future accurately.

But Yukteswar’s source goes deeper. He’s tracking a 24,000-year pattern driven by our

The Age of Abundance Has Already Begun

For six thousand years, humanity has lived by a single story: the story of scarcity. That story shaped our politics, our economies, our religions, and even our fears.

But what if that story is ending?

In this episode of Disrupt Consciousness, Roel Smelt explores how the next generation of sodium-ion batteries, made from sodium, one of the most abundant elements on Earth and a component of ordinary salt, is quietly proving Tony Seba’s predictions right once again.

It’s not just about better batteries. It’s about a deeper civilizational shift, from scarcity to abundance. Thinkers like Peter Diamandis call this transition the meta-curve of humanity: energy, food, and information become exponentially cheaper, and the real limits move from the material to the mental (imagination, wisdom, and coordination).

Roel connects the dots between Tony Seba’s S-curve model, Peter Diamandis’ Abundance 360 vision, and the spiritual realization that abundance is not about having more; it’s about needing less, because everything essential flows freely.

This isn’t utopia. It’s mathematics meeting consciousness.

The question is not whether abundance is coming, but whether humanity is ready to live consciously within it.

🪶 In This Episode
* Why sodium-ion batteries could mark the next great energy disruption
* How Tony Seba’s S-curve model predicts exponential change
* Peter Diamandis’ idea of the “meta-curve of humanity”
* The shift from control and scarcity to access and creativity
* Why abundance requires a rise in consciousness, not just technology

💬 Key Quote
“The tools of abundance are here. What remains is the consciousness to use them wisely.” – Roel Smelt

🔗 Links & References
* Full essay: The End of Scarcity – From Lithium to Sodium and Beyond → roelsmelt.substack.com
* Video mentioned: The Electric Viking – Sodium-ion breakthrough
* Tony Seba – Stellar
* Peter Diamandis – Abundance 360

🧠 About Roel Smelt
Roel Smelt is a futurist and thought leader exploring technology’s role in human liberation and consciousness. He writes weekly essays on Disrupt Consciousness and hosts video podcasts connecting exponential technology, philosophy, and the evolution of human awareness.

Read more at roelsmelt.substack.com

🏷️ Tags / Keywords
Tony Seba, Peter Diamandis, Abundance, Sodium-ion Batteries, Clean Energy, S-Curves, Exponential Technology, Consciousness, AI and Humanity, Solar Revolution, Future of Civilization, Disrupt Consciousness

This is a public episode. If you'd like to discuss this with other subscribers or get access to bonus episodes, visit roelsmelt.substack.com/subscribe

Not Time and Space, but Consciousness Is A Priori

Will humanity become the pet of AI? Many fear it. But Deepak Chopra’s reflections on consciousness strengthened my longstanding belief: that AI can never truly surpass us.

Here’s why:
* Consciousness is fundamental. Existence and consciousness are the same.
* AI processes data, not being. It cannot step into the present moment.
* Humans remain free. The risk is not AI gaining consciousness, but us forgetting our own.

👉 For the full essay, visit roelsmelt.substack.com and subscribe for weekly stories on AI, humanity, and consciousness.

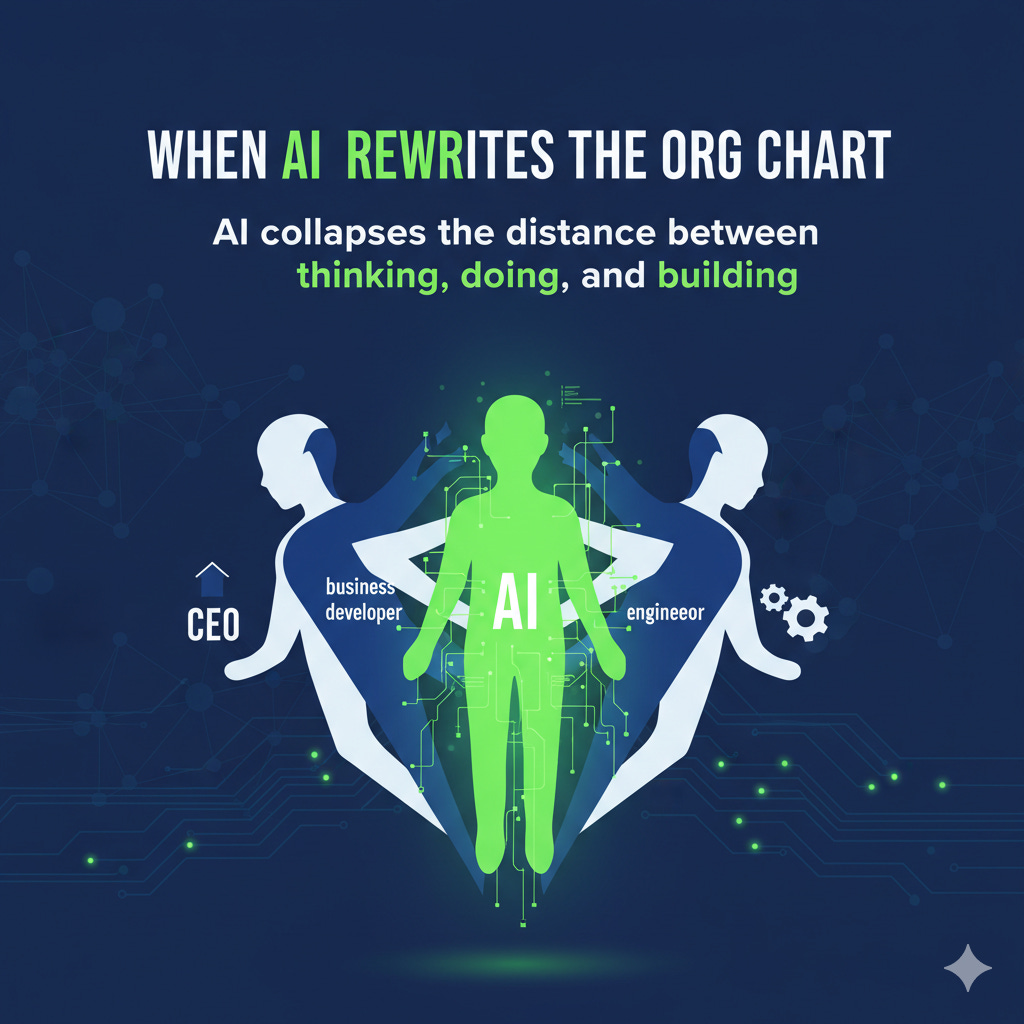

When AI Rewrites the Org Chart

A friend recently told me how he built a working app in one weekend. He’s not a programmer. He’s a CEO. All he did was open a no-code AI tool, sketch out what he wanted, and by Sunday evening he had a functioning MVP. Monday morning he showed it to his product team. Their jaws dropped.

This little story captures something much bigger: AI is tearing down the walls inside organizations. The neat separation between “the people who think,” “the people who sell and manage,” and “the people who build” is starting to blur.

The Old Picture of Organizations

Traditionally, you could map most companies in three layers:
* Leaders: the CEO and directors, setting direction and making strategic bets.
* Business developers: sales, marketing, operations, product management; they know the customers and translate strategy into action.
* Technical experts: developers, engineers, data analysts; they build the actual tools, products, and infrastructure.

This division of labor reflected scarcity: few people understood technology deeply enough to build things, so they became a separate class.

What AI Changes

AI dissolves these walls.
* Leaders now play. With tools like Lovable, Windsurf, or simply ChatGPT, a CEO can build a prototype in days, analyze raw data over a weekend, and enter Monday meetings not with abstract questions but with tangible mock-ups and sharper insights.
* Business developers now build. Product owners, marketers, or project managers no longer have to wait in line for analysts or engineers. With no-code AI and Vibe Coding, they can spin up internal tools, MVPs, or dashboards themselves. What used to take weeks can now take days. Their skillset shifts from “writing requirements” to “testing possibilities.”
* Technical experts now resist. Here’s the paradox: developers and engineers adopt AI too: GitHub Copilot, notebooks, copilots. Research confirms this: MIT Sloan’s study showed senior developers do benefit, but mostly for incremental coding tasks. They use AI like a spellchecker, not like a paradigm shift. Surveys (Houck et al., 2025) find the same: AI boosts routine work, but the higher the expertise, the more developers cling to their traditional stack. They insist on hand-checking infrastructure, doing their own security audits, writing code the “proper” way.

Yet AI can do much of this faster and more reliably. Security scanning is an AI-native problem: models can review every line of code, detect vulnerabilities, and explain them. Infrastructure setup? A few Windsurf prompts and you have a working environment. Business developers are already leapfrogging here with Vibe Coding. Senior engineers, meanwhile, argue about fit with existing architectures, but this often sounds like resistance, not progress.

The New Role of Engineers

The opportunity for technical experts is not to defend their old territory but to step up their game with AI. Let business developers handle the MVPs, the security prompts, the infrastructure scripts, and then check their work with AI at your side. Use your hard-earned brainpower to push beyond what was ever possible before:
* designing entirely new architectures,
* inventing new data flows,
* scaling AI-driven systems safely and ethically.

Engineers who cling to the old way risk being bypassed. Engineers who embrace AI as a multiplier can become the most valuable thinkers in the company.

Why This Matters Now

At AI Lab, where Alex van Ginneken and I guide companies through hands-on experiences with AI tools, we see this shift firsthand. Leaders discover they can prototype; business developers discover they can code; engineers discover they must either resist or reinvent themselves.

And the research is clear: productivity gains are real (ANZ Bank, 2024). Less experienced users benefit most (MIT Sloan, 2023). Senior engineers often lag in adoption, partly by choice (Houck et al., 2025). The org chart is flattening, whether they like it or not.

Conclusion: A Massive Learning Curve Ahead

The org chart is being rewritten. AI has collapsed the distance between thinking, doing, and building. The CEO prototypes. The product manager codes. The engineer curates and secures.

For some, this is threatening. For others, it’s liberating. But it is inevitable.

The real question for every professional, whether leader, business developer, or engineer, is:

👉 Am I resisting the change, or using AI to do what I never thought possible?

The Quiet Vow: Resilience as Human Art, Machine Echo